Implementing EEGNet for Brain-Computer Interfaces: A Comprehensive Guide for Neuroscience Researchers and Clinical Application Developers

This article provides a detailed, practical guide to implementing EEGNet, a compact convolutional neural network architecture specifically designed for electroencephalogram (EEG)-based brain-computer interfaces (BCIs).

Abstract

This article provides a detailed, practical guide to implementing EEGNet, a compact convolutional neural network architecture specifically designed for electroencephalogram (EEG)-based brain-computer interfaces (BCIs). Written for researchers, neuroscientists, and drug development professionals, it covers the foundational principles of EEGNet's design for decoding temporal and spatial EEG features, a step-by-step implementation methodology for motor imagery and event-related potential paradigms, strategies for troubleshooting data quality and model overfitting, and a comparative analysis of its performance against traditional machine learning and other deep learning models. The guide synthesizes current best practices so that professionals can use this efficient architecture for robust, deployable BCI systems in clinical and research settings.

Understanding EEGNet: The Deep Learning Architecture Revolutionizing EEG-Based BCI Research

EEGNet is a compact convolutional neural network architecture specifically designed for EEG-based brain-computer interfaces (BCIs). Its primary innovation lies in leveraging depthwise and separable convolutions to drastically reduce the number of trainable parameters while maintaining or exceeding the classification performance of larger, more complex models. This efficiency makes it highly suitable for real-time BCI applications, where computational resources are often limited, and for scenarios with relatively small datasets common in neurophysiological research and clinical trials.

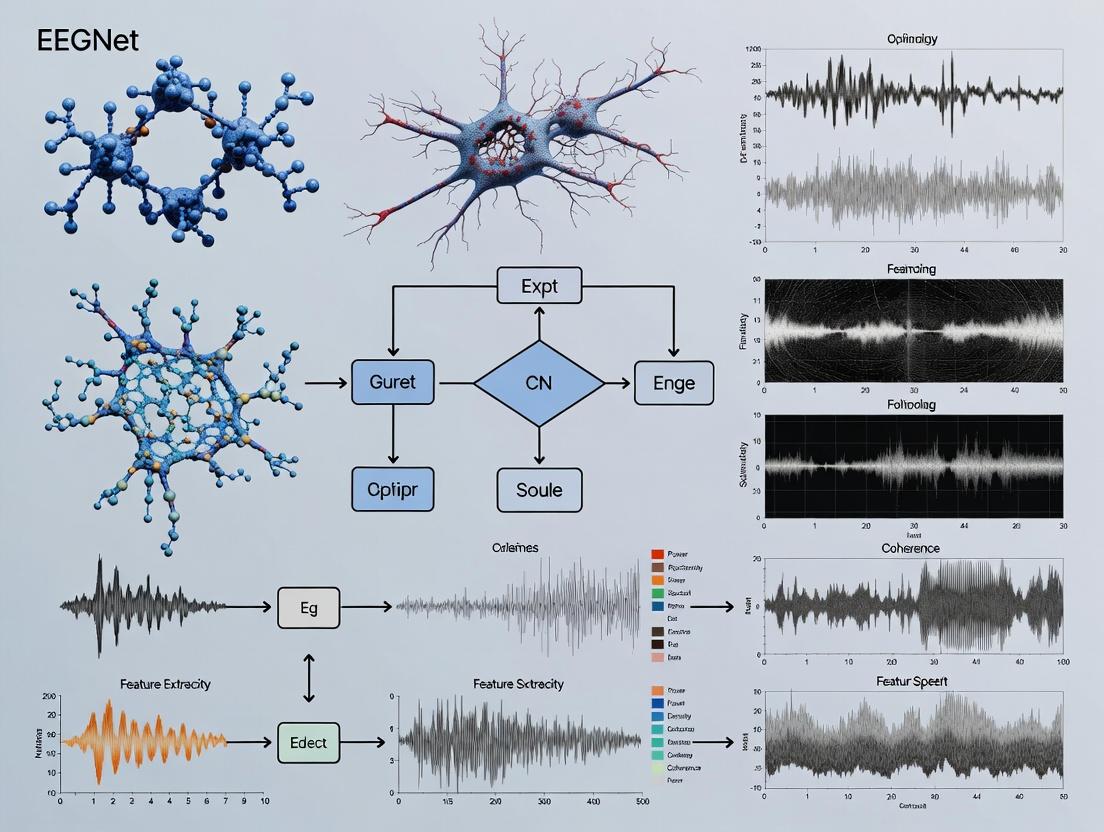

Core Architecture and Signal Processing Pathway

[Figure: EEGNet Core Signal Processing Pathway]

The pathway illustrates the flow from raw EEG data through temporal filtering, spatial filtering, and hierarchical feature extraction to a final classification decision.

Key Experimental Protocols

Protocol 3.1: Model Training and Validation for BCI Classification

Objective: To train and validate an EEGNet model for discriminating between multiple mental commands or event-related potentials (ERPs).

- Data Partitioning: Segment the continuous EEG recording into epochs time-locked to the stimulus/event onset. Split data into training (70%), validation (15%), and hold-out test (15%) sets. For a cross-subject (generalized) model, split by subject so that no subject's data appears in more than one set.

- Preprocessing: Apply a bandpass filter (e.g., 4-40 Hz). Re-reference to common average. Optionally apply artifact removal (e.g., ICA). Decimate to a uniform sampling rate (e.g., 128 Hz).

- Model Configuration: Implement EEGNet (e.g., EEGNet-4,2, i.e., 4 temporal filters with 2 spatial filters per temporal filter). Use He normal initialization. The output layer uses softmax activation.

- Training: Use Adam optimizer (lr=0.001). Employ a batch size of 64. Use categorical cross-entropy loss. Implement early stopping based on validation loss with a patience of 15 epochs.

- Evaluation: Compute accuracy, Cohen's kappa, and confusion matrix on the held-out test set. Perform statistical significance testing against a random classifier or other benchmark models.
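The evaluation step can be sketched in plain NumPy. This is a minimal illustration, not the authors' evaluation code: the function names and the toy label vectors are assumptions made for the example.

```python
import numpy as np

def confusion_matrix(y_true, y_pred, n_classes):
    """Rows are true classes, columns are predicted classes."""
    cm = np.zeros((n_classes, n_classes), dtype=int)
    for t, p in zip(y_true, y_pred):
        cm[t, p] += 1
    return cm

def accuracy_and_kappa(cm):
    """Return (accuracy, Cohen's kappa) computed from a confusion matrix."""
    total = cm.sum()
    p_observed = np.trace(cm) / total
    # Chance agreement from the row (true) and column (predicted) marginals.
    p_expected = (cm.sum(axis=0) * cm.sum(axis=1)).sum() / total**2
    kappa = (p_observed - p_expected) / (1.0 - p_expected)
    return p_observed, kappa

# Toy 4-class example (hypothetical labels, for illustration only).
y_true = [0, 0, 1, 1, 2, 2, 3, 3]
y_pred = [0, 1, 1, 1, 2, 2, 3, 0]
cm = confusion_matrix(y_true, y_pred, n_classes=4)
acc, kappa = accuracy_and_kappa(cm)
print(cm)
print(f"accuracy={acc:.3f}, kappa={kappa:.3f}")
```

Kappa corrects accuracy for chance agreement, which matters when classes are imbalanced, as in target vs. non-target ERP data.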

Protocol 3.2: Cross-Subject Transfer Learning with EEGNet

Objective: To adapt a model trained on a source subject (or pool) to a new target subject with minimal calibration data.

- Base Model Training: Train a standard EEGNet model on source subject(s) data using Protocol 3.1.

- Target Data Preparation: Collect a small batch of calibration data from the target subject (e.g., 3-5 minutes of task performance).

- Fine-Tuning: Freeze the initial temporal and spatial convolution layers of the pre-trained model. Replace and re-train only the final separable convolution and classification layers on the target subject's calibration data. Use a lower learning rate (e.g., 0.0001).

- Evaluation: Test the fine-tuned model on a separate, held-out dataset from the target subject. Compare performance to a model trained from scratch on the same limited target data.
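The freezing step might look like the following in PyTorch. This is a sketch under stated assumptions: the `ModuleDict` is a stand-in for a pre-trained EEGNet, the block names (`temporal`, `spatial`, `separable`, `classifier`) and the 22-channel, 4-class sizes are hypothetical, and no pre-trained weights are loaded here.

```python
import torch
import torch.nn as nn

# Stand-in for a pre-trained EEGNet; block names and sizes are hypothetical.
model = nn.ModuleDict({
    "temporal": nn.Conv2d(1, 8, (1, 64), padding=(0, 32), bias=False),
    "spatial": nn.Conv2d(8, 16, (22, 1), groups=8, bias=False),
    "separable": nn.Sequential(
        nn.Conv2d(16, 16, (1, 16), padding=(0, 8), groups=16, bias=False),
        nn.Conv2d(16, 16, 1, bias=False),
    ),
    "classifier": nn.Linear(16 * 8, 4),
})

# Freeze the initial temporal and spatial convolutions.
for name in ("temporal", "spatial"):
    for p in model[name].parameters():
        p.requires_grad = False

# Replace the classification layer so it is re-learned on the target subject.
model["classifier"] = nn.Linear(16 * 8, 4)

# Only the still-trainable parameters go to the optimizer, at the lower rate.
trainable = [p for p in model.parameters() if p.requires_grad]
optimizer = torch.optim.Adam(trainable, lr=1e-4)

n_trainable = sum(p.numel() for p in trainable)
n_frozen = sum(p.numel() for p in model.parameters() if not p.requires_grad)
print(f"trainable={n_trainable}, frozen={n_frozen}")
```

Passing only the trainable parameters to the optimizer keeps the frozen filters fixed even if weight decay is later enabled.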

Protocol 3.3: Pharmaco-EEG Analysis Using EEGNet Features

Objective: To detect neuromodulatory drug effects from resting-state or task-evoked EEG.

- Experimental Design: Conduct a randomized, placebo-controlled, double-blind study. Record resting-state EEG (eyes-open/closed) and/or standard cognitive task ERPs pre-dose and at multiple timepoints post-dose.

- Feature Extraction: Train an EEGNet model on a baseline/placebo condition to classify between pre-defined temporal windows or task conditions. Extract activations from the penultimate layer of the network as a compact feature vector for each epoch.

- Statistical Analysis: Use multivariate analysis (e.g., MANOVA, linear discriminant analysis) on the EEGNet-derived feature vectors to test for significant separation between drug and placebo conditions across timepoints. Control for multiple comparisons.

- Correlation: Correlate changes in EEGNet feature space with pharmacokinetic measures (e.g., plasma concentration) or behavioral/cognitive assessment scores.

Table 1: Comparative Performance of EEGNet on Standard BCI Paradigms

| Paradigm (Dataset) | EEGNet Accuracy (Mean ± SD) | Traditional ML Model (e.g., SVM) Accuracy | Number of Parameters | Reference (Source) |

|---|---|---|---|---|

| P300 Speller (BCI Competition IIb) | 88.5% ± 3.2% | 82.1% ± 5.7% | ~2,900 | Lawhern et al., 2018 |

| Motor Imagery (BCI Competition IV 2a) | 77.8% ± 15.1% | 70.2% ± 17.3% | ~2,900 | Lawhern et al., 2018 |

| Error-Related Potentials (ErrP) | 91.3% ± 2.8% | 86.4% ± 4.1% | ~2,900 | Recent Benchmarks |

| Steady-State Visually Evoked Potential (SSVEP) | 94.1% ± 1.5% | 89.7% ± 3.3% | ~4,500 (EEGNet-8,2) | Recent Benchmarks |

Table 2: Computational Efficiency Metrics

| Metric | EEGNet-4,2 | Shallow ConvNet (Schirrmeister et al.) | Deep ConvNet (Schirrmeister et al.) |

|---|---|---|---|

| Trainable Parameters | ~2,900 | ~58,000 | ~470,000 |

| Training Time (Epoch, relative) | 1x (Base) | ~8x | ~15x |

| Inference Time (per trial, ms) | ~3 ms | ~12 ms | ~25 ms |

| Memory Footprint (Model weights, KB) | ~12 KB | ~230 KB | ~1,800 KB |

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for EEGNet-Based Research

| Item Category & Name | Function/Description | Example Vendor/Software |

|---|---|---|

| EEG Acquisition Hardware | | |

| Active Electrode System | High-fidelity, low-noise acquisition of scalp potentials. Crucial for clean input signals. | Biosemi, BrainProducts |

| EEG Amplifier & Digitizer | Amplifies microvolt signals and converts to digital data with high resolution. | ActiChamp, g.tec |

| Data Processing & Annotation Software | | |

| EEGLAB / MNE-Python | Open-source toolboxes for preprocessing (filtering, artifact removal), epoching, and basic analysis. | SCCN, MNE Team |

| BCI2000 / Lab Streaming Layer (LSL) | Software for experimental paradigm presentation and synchronized, real-time data streaming. | BCI2000, LSL |

| Model Development & Deployment | | |

| PyTorch (with Braindecode) / TensorFlow | Deep learning frameworks. Braindecode is a PyTorch-based library for implementing and training EEG models such as EEGNet. | Meta (PyTorch), Google (TensorFlow) |

| MOABB (Mother of All BCI Benchmarks) | A fair evaluation framework to benchmark EEGNet against other algorithms on public datasets. | Inria |

| Pharmacological Research Add-ons | | |

| ERP Standardization Toolkit | Scripts to ensure consistent stimulus presentation for cognitive ERP tasks pre-/post-drug administration. | Custom, e-Prime |

| Pharmacokinetic Sampling Kit | For correlating EEGNet-derived neural metrics with plasma drug concentration levels. | Various Clinical Suppliers |

Implementation Workflow for a Typical Study

[Figure: End-to-End EEGNet Research Workflow]

Concluding Remarks within the Thesis Context

The implementation of EEGNet within a BCI research thesis provides a critical case study in balancing model complexity with practical utility. Its demonstrable efficiency enables research into rapid subject calibration, a major bottleneck in BCI translation. For drug development, EEGNet offers a sensitive, data-driven tool to quantify central nervous system (CNS) drug effects, potentially serving as a digital biomarker for target engagement or early efficacy signals in clinical trials. Future work should focus on enhancing model interpretability (e.g., via saliency maps) and integrating it with multimodal data streams to further its impact in both neuroscientific discovery and clinical application.

Within the broader thesis on implementing EEGNet for Brain-Computer Interface (BCI) research, this document details the application notes and protocols for its core architectural components: the Temporal and Spatial Convolution Blocks. These blocks are specifically engineered to handle the unique challenges of EEG signal processing, enabling efficient, compact, and high-performing models suitable for embedded BCI applications.

Core Block Architectures: Application Notes

EEGNet introduces a compact convolutional neural network framework that leverages depthwise and separable convolutions. The two primary building blocks are designed to learn effective features from EEG data.

Temporal Convolution Block: This block first applies a temporal convolution that learns frequency filters, followed by a depthwise convolution that learns spatial filters. The temporal kernels act as band-pass filters along the time dimension, and the depthwise convolution then learns a small set of spatial filters (weights across EEG channels) for each temporal feature map. This two-step process is critical for isolating event-related potentials (ERPs) or band power changes from specific brain regions.

Spatial Convolution Block: Following the first block, this block employs a separable convolution: a depthwise convolution followed by a pointwise convolution. In the published EEGNet design the channel dimension has already been collapsed by the preceding depthwise spatial filter, so the depthwise step here learns a temporal summary for each feature map independently, while the pointwise convolution learns how to combine the feature maps. This dramatically reduces the number of parameters compared to a standard 2D convolution.

Quantitative Comparison of Block Parameters

Table 1: Parameter Efficiency of EEGNet Blocks vs. Standard Convolutions (for a representative layer)

| Layer Type | Input Shape (C, T) | Kernel Size | # Filters | Parameters | Ratio vs. Standard |

|---|---|---|---|---|---|

| Standard Conv2D | (1, 512) | (1, 64) | 8 | 64 × 1 × 1 × 8 = 512 | 1.0x (Baseline) |

| Temporal Conv (EEGNet) | (1, 512) | (1, 64) | 8 | 64 × 1 × 1 × 8 = 512 | 1.0x |

| Standard Conv2D (Spatial) | (8, 1) | (8, 1) | 16 | 8 × 1 × 8 × 16 = 1024 | 1.0x (Baseline) |

| Depthwise Conv (EEGNet) | (8, 1) | (8, 1) | 1 per input | 8 × 1 × 8 × 1 = 64 | 0.0625x |

| Pointwise Conv | (8, 1) | (1, 1) | 16 | 1 × 1 × 8 × 16 = 128 | 0.125x |

| Total Spatial Block | - | - | 16 | 64 + 128 = 192 | 0.1875x |

C = EEG channels, T = time samples. Assumptions for the calculation: a single input map is expanded to 8 temporal feature maps; the spatial rows assume 8 input feature maps and a kernel spanning 8 channels; bias terms are omitted. Ratios in the spatial rows are relative to the standard spatial convolution (1024 parameters).
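The arithmetic in the table can be reproduced with a few lines of Python. The helper names are illustrative, and bias terms are left out, as in the table.

```python
def standard_conv_params(kernel_elems, in_maps, out_maps):
    """Every output map has its own kernel for every input map."""
    return kernel_elems * in_maps * out_maps

def depthwise_conv_params(kernel_elems, in_maps, depth_multiplier=1):
    """Each input map gets `depth_multiplier` kernels of its own; no mixing."""
    return kernel_elems * in_maps * depth_multiplier

def pointwise_conv_params(in_maps, out_maps):
    """A 1x1 convolution that only mixes feature maps."""
    return in_maps * out_maps

# Spatial rows of the table: 8 input maps, 8-element kernel, 16 output maps.
standard = standard_conv_params(8, 8, 16)
depthwise = depthwise_conv_params(8, 8)
pointwise = pointwise_conv_params(8, 16)
separable = depthwise + pointwise
ratio = separable / standard

print(standard, depthwise, pointwise, separable, ratio)
```

The saving comes from decoupling the kernel (learned once per map) from the mixing of maps (learned by the 1x1 step), so the two factors add instead of multiplying.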

Experimental Protocols for Validation

Protocol 1: Benchmarking on BCI Competition IV 2a Dataset

Objective: To validate the classification performance of EEGNet's temporal-spatial architecture against traditional methods for motor imagery.

Dataset: BCI Competition IV 2a (4-class motor imagery: left hand, right hand, feet, tongue).

Preprocessing: Bandpass filter 4-38 Hz, epoch extraction [-2s, 4s] around cue, downsampling to 128 Hz.

Model Configuration:

1. Temporal Layer: Conv2D (kernel = (1, 64), filters = 8). BatchNorm. Activation = Linear.

2. Depthwise Spatial Layer: DepthwiseConv2D (kernel = (Channels, 1), depth_multiplier = 2). BatchNorm. Activation = ELU. Average Pooling (1, 4).

3. Separable Temporal Layer: SeparableConv2D (kernel = (1, 16), filters = 16). BatchNorm. Activation = ELU. Average Pooling (1, 8).

4. Classification Layer: Dense (4 units, softmax).

Training: Adam optimizer (lr=1e-3), categorical cross-entropy loss, batch size=64, 300 epochs with early stopping.
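A quick way to sanity-check this configuration is to trace the time dimension through the two pooling stages. The sketch below assumes the convolutions use "same" padding (so only pooling shortens the signal) and that pooling discards any remainder; both are assumptions, not details stated in the protocol.

```python
def eegnet_flat_features(n_samples, f2=16, pool1=4, pool2=8):
    """Length of the flattened feature vector fed to the dense layer.

    Assumes 'same'-padded convolutions and floor-mode average pooling.
    """
    after_pool1 = n_samples // pool1
    after_pool2 = after_pool1 // pool2
    return f2 * after_pool2

fs = 128
n_samples = int((4.0 - (-2.0)) * fs)      # epoch [-2 s, 4 s] at 128 Hz
n_flat = eegnet_flat_features(n_samples)
dense_params = n_flat * 4 + 4             # weights + biases for 4 classes
print(n_samples, n_flat, dense_params)
```

Because the dense layer scales with epoch length, long epochs can make the classifier the largest layer in an otherwise tiny network.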

Protocol 2: ERP Detection in P300 Speller Paradigm

Objective: To assess the blocks' efficacy in extracting temporal (P300 latency) and spatial (parietal-occipital) features.

Dataset: BNCI Horizon 2020 P300 dataset.

Preprocessing: Raw EEG re-referenced to the common average, 1-12 Hz bandpass, epoching [-0.1s, 0.8s], baseline correction [-0.1s, 0s].

Model Adaptation:

1. Temporal Layer: Increase the kernel size to (1, 128) to capture longer-latency ERPs.

2. Spatial Layer: The depthwise convolution spans all input channels and learns its own electrode weighting. To emphasize parietal-occipital activity, restrict the input montage to those electrodes rather than configuring the layer itself.

Training: Binary classification (target vs. non-target) with class balancing (e.g., class weights or resampling), evaluated with stratified k-fold cross-validation.

Visualization of EEGNet Architecture and Logic

[Figure: EEGNet Architecture with Core Convolutional Blocks]

[Figure: Signal Processing Pathway in EEGNet Blocks]

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for EEGNet Implementation in BCI Research

| Item / Solution | Function / Purpose | Example/Note |

|---|---|---|

| High-Density EEG System | Acquires raw neural data with sufficient spatial and temporal resolution. | Biosemi ActiveTwo, BrainAmp, g.tec systems. >64 channels recommended. |

| BCI Experiment Paradigm Software | Presents stimuli and records event markers synchronized with EEG. | PsychToolbox, OpenSesame, Presentation, BCI2000. |

| Preprocessing Pipeline | Cleans and prepares raw EEG for model input (bandpass, artifact removal). | EEGLAB, MNE-Python, FieldTrip. Independent Component Analysis (ICA) for ocular artifact removal. |

| Deep Learning Framework | Provides libraries to construct, train, and evaluate EEGNet. | TensorFlow with Keras API or PyTorch. Enables custom layer definition (DepthwiseConv2D). |

| High-Performance Computing (HPC) Resource | Accelerates model training and hyperparameter optimization. | GPU clusters (NVIDIA Tesla). Essential for large-scale cross-validation. |

| Standardized EEG Datasets | Benchmark model performance against published literature. | BCI Competition IV datasets (2a, 2b), BNCI Horizon 2020, OpenBMI. |

| Model Interpretability Toolbox | Visualizes learned temporal-spatial filters for neuroscientific insight. | Grad-CAM adaptations for EEG, filter visualization libraries. |

Why EEGNet for BCI? Advantages in Parameter Efficiency and Generalization.

This document serves as a critical application note within a broader thesis investigating the implementation of deep learning architectures for Brain-Computer Interface (BCI) research. The thesis posits that for BCIs to transition from laboratory settings to real-world clinical and consumer applications, models must achieve robust generalization across sessions and subjects with minimal calibration, while being deployable on resource-constrained hardware. EEGNet, a compact convolutional neural network (CNN) architecture, is presented as a foundational solution to these challenges, emphasizing parameter efficiency and cross-subject generalization.

EEGNet remains a widely used baseline in the BCI literature. Its design principles directly address key bottlenecks in BCI model deployment.

Table 1: Quantitative Advantages of EEGNet vs. Traditional Models

| Metric | EEGNet (EEGNet-4,2 / EEGNet-8,2) | Traditional CNN (e.g., DeepConvNet) | Filter Bank Common Spatial Patterns (FBCSP) | Implication for BCI |

|---|---|---|---|---|

| Number of Parameters | ~2,000 - 3,000 | >150,000 | N/A (Feature-based) | Enables embedded deployment, faster training, lower memory footprint. |

| Cross-Subject Accuracy (Avg. on MI datasets) | 73.5% - 82.0% | 65.0% - 75.0% | 68.0% - 78.0% | Higher baseline performance with zero within-session subject calibration. |

| Training Time (Relative) | 1x (Baseline) | 5x - 10x longer | N/A (No neural network training) | Rapid prototyping and hyperparameter tuning. |

| Architecture Key | Depthwise & Separable Convolutions, Temporal & Spatial Filters | Standard 2D Convolutions, Fully Connected Layers | Manual feature extraction & selection. | Built-in neurophysiologically plausible filters; reduces overfitting. |

Experimental Protocols for Validating EEGNet

Protocol A: Benchmarking Cross-Subject Generalization

Objective: To evaluate EEGNet's ability to classify motor imagery (MI) tasks using data from subjects not seen during training. Dataset: BCI Competition IV 2a (4-class MI) or similar. Preprocessing:

- Apply a 4-40 Hz bandpass filter.

- Segment trials from -0.5s to 4.0s relative to cue onset.

- Standardize (z-score) each EEG channel per trial.

- No artifact removal (to test robustness) or use automatic artifact subspace reconstruction (ASR).
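The filtering, segmentation, and standardization steps above could be sketched with NumPy and SciPy as follows. The random array stands in for a real recording, and the cue positions, channel count, and filter order are hypothetical choices made for the example.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt

def bandpass(data, low_hz, high_hz, fs, order=4):
    """Zero-phase Butterworth band-pass along the last (time) axis."""
    sos = butter(order, [low_hz, high_hz], btype="bandpass", fs=fs, output="sos")
    return sosfiltfilt(sos, data, axis=-1)

def zscore_per_trial(epochs, eps=1e-8):
    """Standardize each channel within each trial (trials, channels, samples)."""
    mean = epochs.mean(axis=-1, keepdims=True)
    std = epochs.std(axis=-1, keepdims=True)
    return (epochs - mean) / (std + eps)

fs = 128
rng = np.random.default_rng(0)
continuous = rng.standard_normal((22, 60 * fs))   # synthetic stand-in: 22 channels, 60 s
filtered = bandpass(continuous, 4.0, 40.0, fs)

cue_samples = np.array([5, 15, 25, 35, 45]) * fs  # hypothetical cue onsets
start, stop = int(-0.5 * fs), int(4.0 * fs)       # -0.5 s to 4.0 s around each cue
epochs = np.stack([filtered[:, c + start:c + stop] for c in cue_samples])
epochs = zscore_per_trial(epochs)
print(epochs.shape)
```

Filtering the continuous recording before epoching avoids edge artifacts inside each trial.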

Model Configuration (EEGNet-8,2):

- Temporal convolution: 8 filters, kernel length = sample_rate / 2 (e.g., 64 samples at 128 Hz).

- Depthwise convolution: 2 depth multiplier.

- Separable convolution: 16 filters.

- Dropout probability: 0.5.

Training Regime (Leave-One-Subject-Out Cross-Validation):

- For N subjects, iteratively hold out all data from one subject as the test set.

- Train EEGNet on the pooled data from the remaining N-1 subjects.

- Test the trained model on the held-out subject's data. No fine-tuning is allowed.

- Report the average classification accuracy across all N folds.

Output: Table of per-subject and average accuracy, confusion matrices.
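The leave-one-subject-out loop reduces to an index generator like the one below. It is a minimal sketch: `loso_splits` and the toy `subject_ids` array are illustrative names, and model training is left as a comment.

```python
import numpy as np

def loso_splits(subject_ids):
    """Yield (held_out_subject, train_indices, test_indices) for each subject."""
    subject_ids = np.asarray(subject_ids)
    for held_out in np.unique(subject_ids):
        test_idx = np.flatnonzero(subject_ids == held_out)
        train_idx = np.flatnonzero(subject_ids != held_out)
        yield held_out, train_idx, test_idx

# Toy example: 3 subjects with 4 trials each.
subject_ids = np.repeat([1, 2, 3], 4)
folds = list(loso_splits(subject_ids))
for held_out, train_idx, test_idx in folds:
    # Train on train_idx, evaluate once on test_idx, no fine-tuning.
    print(held_out, len(train_idx), len(test_idx))
```

Any normalization statistics should be computed on the training indices only, so that nothing from the held-out subject leaks into training.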

Protocol B: Parameter Efficiency and Pruning Analysis

Objective: To demonstrate the minimal parameter footprint of EEGNet and its resilience to pruning. Dataset: A smaller, high-noise dataset (e.g., EMOTIV EPOC recordings of MI). Method:

- Train a baseline EEGNet and a comparable traditional CNN to similar performance on a held-out validation set from a single subject.

- Record final parameter counts and model sizes (in MB).

- Apply iterative magnitude-based weight pruning to both models, removing 10% of the smallest weights at each step, followed by fine-tuning.

- Plot accuracy vs. percentage of weights pruned for both models.

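Analysis: The sparsity level at which accuracy drops by more than 5 percentage points indicates how much redundancy each model carries. Whether EEGNet maintains performance at higher sparsity than the larger CNN is the question this protocol tests. Because EEGNet starts with far fewer weights, also compare the absolute number of parameters that remain.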
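The pruning step itself can be illustrated on a single weight array with NumPy. This is a framework-agnostic sketch: `prune_smallest` is a hypothetical helper, the random matrix stands in for one layer's weights, and the fine-tuning between steps is only marked by a comment.

```python
import numpy as np

def prune_smallest(weights, mask, fraction=0.10):
    """Deactivate `fraction` of the still-active weights with smallest magnitude."""
    active = np.flatnonzero(mask)
    n_prune = int(round(fraction * active.size))
    if n_prune == 0:
        return mask
    magnitudes = np.abs(weights.ravel()[active])
    drop = active[np.argsort(magnitudes)[:n_prune]]
    new_mask = mask.copy().ravel()
    new_mask[drop] = False
    return new_mask.reshape(mask.shape)

rng = np.random.default_rng(0)
weights = rng.standard_normal((10, 10))       # stand-in for one layer's weights
mask = np.ones_like(weights, dtype=bool)

for step in range(3):
    mask = prune_smallest(weights, mask, fraction=0.10)
    # In the protocol, the surviving weights would be fine-tuned here.
    print(f"step {step + 1}: sparsity = {1.0 - mask.mean():.2f}")
```

Removing 10% of the remaining weights at each step means sparsity grows geometrically rather than linearly, which is worth remembering when reading the accuracy-vs-sparsity plot.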

Visualizing the EEGNet Architecture and Workflow

[Figure: EEGNet Model Architecture and Signal Flow]

[Figure: Leave-One-Subject-Out (LOSO) Validation Protocol]

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for EEGNet BCI Research

| Item / Solution | Function / Purpose | Example / Specification |

|---|---|---|

| High-Density EEG Amplifier | Acquires neural electrical activity with high fidelity and signal-to-noise ratio. | Biosemi ActiveTwo, g.tec g.HIAMP, BrainVision actiCHamp. |

| BCI Paradigm Software | Presents stimuli and records synchronized event markers. | Psychtoolbox (MATLAB), OpenSesame, Presentation. |

| EEG Preprocessing Pipeline | Cleans raw data, removes artifacts, segments into trials. | EEGLAB, MNE-Python, BCILAB. |

| Deep Learning Framework | Provides environment to define, train, and evaluate EEGNet. | TensorFlow (with Keras API), PyTorch. |

| Public BCI Datasets | Provides benchmark data for cross-study comparison and validation. | BCI Competition IV 2a/2b, OpenBMI, PhysioNet MI. |

| Model Interpretation Tool | Visualizes learned temporal-spatial filters for neurophysiological insight. | Custom scripts to plot 1st & 2nd layer kernel weights. |

| Edge Deployment Suite | Converts trained EEGNet model for hardware deployment. | TensorFlow Lite, ONNX Runtime, NVIDIA TensorRT. |

Within the broader thesis on EEGNet implementation for BCI research, this document provides detailed application notes and protocols for four major neurophysiological paradigms compatible with this compact convolutional neural network architecture. EEGNet's design enables effective decoding of temporally and spatially distributed patterns from these paradigms, making it a versatile tool for both basic neuroscience and applied clinical research, including neuropharmacological studies.

P300 Event-Related Potential

The P300 is a positive deflection in the EEG signal occurring approximately 300ms after the presentation of a rare, task-relevant stimulus. It is a robust marker of cognitive processes like attention and working memory updating.

EEGNet Compatibility

EEGNet excels at P300 detection due to its temporal convolution block, which captures the characteristic latency and morphology of the P300 component across channels.

Experimental Protocol for a Visual Oddball P300 Task

- Participant Setup: Apply a 32+ channel EEG cap (e.g., 10-20 system). Keep impedance < 10 kΩ.

- Stimulus Presentation: Display a series of frequent non-target stimuli (e.g., letter 'X') and infrequent target stimuli (e.g., letter 'O') in a random sequence.

- Trial Structure:

  - Fixation cross (500ms).

  - Stimulus presentation (100ms).

  - Blank screen (randomized inter-stimulus interval of 1000-1500ms).

- Task Instruction: Instruct participant to mentally count the number of target stimuli.

- Data Acquisition: Record at least 30 target trials per block. Repeat for multiple blocks with rest periods.

- EEG Preprocessing for EEGNet:

  - Downsample to 128Hz or 256Hz.

  - Apply 1-30Hz bandpass filter.

  - Epoch data from -200ms to 800ms relative to stimulus onset.

  - Baseline correct using the pre-stimulus interval.

  - Perform artifact removal (e.g., ICA for eye blinks).

  - Assign labels: 1 for target epochs, 0 for non-target.
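The epoching and baseline-correction steps can be sketched in NumPy as below. The synthetic recording, the stimulus onsets, and the label vector are hypothetical placeholders for real data and event markers.

```python
import numpy as np

def epoch_and_baseline(data, onsets, fs, tmin=-0.2, tmax=0.8):
    """Cut (channels, samples) data into epochs and subtract the pre-stimulus mean."""
    start = int(round(tmin * fs))            # negative: samples before onset
    stop = int(round(tmax * fs))
    epochs = np.stack([data[:, o + start:o + stop] for o in onsets])
    n_baseline = -start                      # number of pre-stimulus samples
    baseline = epochs[:, :, :n_baseline].mean(axis=-1, keepdims=True)
    return epochs - baseline

fs = 128
rng = np.random.default_rng(0)
data = rng.standard_normal((8, 2000)) + 50.0     # synthetic EEG with a DC offset
onsets = np.array([200, 600, 1000, 1400])        # hypothetical stimulus samples
labels = np.array([1, 0, 0, 0])                  # 1 = target, 0 = non-target

epochs = epoch_and_baseline(data, onsets, fs)
print(epochs.shape, labels.shape)
```

At 128 Hz the -200 ms to 800 ms window yields 128 samples per epoch, which is the time dimension the network then sees.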

Key Quantitative Data (P300)

| Parameter | Typical Value / Range | Notes for EEGNet |

|---|---|---|

| Latency | 250-500 ms post-stimulus | Key temporal feature for model learning. |

| Amplitude | 5-20 µV over parietal sites (Pz) | Spatial distribution guides electrode selection. |

| Optimal Electrodes | Pz, Cz, P3, P4, Fz | EEGNet's spatial filter learns weighting. |

| Trial Count (Targets) | 30-100 per session | Critical for training deep learning models. |

| Signal-to-Noise Ratio | Low (single-trial) | EEGNet is designed for robust single-trial classification. |

| Typical Classification Accuracy (EEGNet) | 85-98% (subject-dependent) | On controlled datasets like BCI Competition II/III. |

[Figure: P300 Experimental & Processing Workflow]

Motor Imagery (MI)

MI involves the mental rehearsal of a motor action without physical execution. It induces event-related desynchronization (ERD) and synchronization (ERS) in the mu (8-13 Hz) and beta (13-30 Hz) rhythms over sensorimotor cortices.

EEGNet Compatibility

EEGNet's depthwise and separable convolutions efficiently model the frequency-specific ERD/ERS patterns and their topographic distribution.

Experimental Protocol for a Left/Right Hand MI Task

- Participant Setup: Use a 16-64 channel EEG system with focus on C3, Cz, C4.

- Cue-Based Trial Structure:

  - Fixation cross (0-2s, variable).

  - Cue presentation indicating left or right hand imagery (e.g., an arrow shown for 4s).

  - Imagery period (4s, starting at cue onset). Participant performs kinesthetic imagery.

  - Rest period (random 2-3s).

- Task Instruction: Emphasize kinesthetic feeling (e.g., "Imagine squeezing a ball") over visual imagery.

- Data Acquisition: Collect 40-100 trials per class per session.

- EEG Preprocessing for EEGNet:

  - Bandpass filter in mu/beta range (e.g., 8-30 Hz).

  - Epoch data from cue onset (0s) to 4s.

  - Optionally, apply Laplacian or CAR spatial filtering.

  - Perform artifact rejection.

  - Assign labels per trial class (Left/Right).
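Two of these steps lend themselves to a short NumPy sketch: common average referencing (CAR), and a check that the recorded data actually show event-related desynchronization before any network is trained. The ERD check assumes epochs that also contain a pre-cue reference interval, which is an extension of the 0-4 s epoch above; the synthetic 10 Hz signal and all names are illustrative.

```python
import numpy as np

def common_average_reference(epochs):
    """Subtract the across-channel mean at every time point (CAR)."""
    return epochs - epochs.mean(axis=-2, keepdims=True)

def erd_percent(epochs, fs, ref_window, act_window):
    """Band-power change relative to a reference window; negative values mean ERD.

    `epochs` is (trials, channels, samples), already band-pass filtered.
    Windows are in seconds from the start of the epoch.
    """
    power = epochs ** 2

    def mean_power(window):
        a, b = (int(round(t * fs)) for t in window)
        return power[..., a:b].mean(axis=(0, -1))    # average over trials and time

    ref = mean_power(ref_window)
    act = mean_power(act_window)
    return 100.0 * (act - ref) / ref

fs = 128
t = np.arange(5 * fs) / fs                 # 1 s pre-cue + 4 s imagery (assumed layout)
mu = np.sin(2 * np.pi * 10 * t)            # synthetic 10 Hz mu rhythm
mu[t >= 1.0] *= 0.5                        # amplitude halves after the cue
epochs = np.tile(mu, (20, 3, 1))           # 20 trials, 3 identical channels

erd = erd_percent(epochs, fs, ref_window=(0.0, 1.0), act_window=(2.0, 4.0))
car = common_average_reference(np.random.default_rng(0).standard_normal((20, 3, 5 * fs)))
print(erd)
```

Halving the amplitude quarters the power, so the synthetic example gives an ERD of about -75% on every channel.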

Key Quantitative Data (Motor Imagery)

| Parameter | Typical Value / Range | Notes for EEGNet |

|---|---|---|

| Frequency Bands | Mu (8-13 Hz), Beta (13-30 Hz) | Core input features for the model. |

| ERD Onset Latency | 0.5-2s after cue | Learned via temporal convolutions. |

| Key Electrode Sites | C3, C4, Cz (Contralateral ERD) | Spatial convolutions identify patterns. |

| Trials per Class | 80-200 for robust decoding | Data requirement for CNN training. |

| Typical Accuracy (EEGNet) | 70-85% (2-class, subject-dependent) | Performance on datasets like BCI Competition IV 2b, or the left/right-hand subset of 2a. |

[Figure: Motor Imagery Trial Timeline]

Steady-State Visual Evoked Potential (SSVEP)

SSVEPs are oscillatory responses in the visual cortex elicited by a repetitive visual stimulus (typically >4 Hz), entraining to the same frequency (and harmonics) of the stimulus.

EEGNet Compatibility

EEGNet can classify SSVEP frequencies by learning spatio-spectral templates from the raw time-series or time-frequency representations.

Experimental Protocol for a 4-Target SSVEP BCI

- Participant Setup: Use a 16+ channel EEG, with strong emphasis on occipital channels (Oz, O1, O2, POz).

- Stimulus Presentation: Four distinct visual flickers (e.g., at 8, 10, 12, 15 Hz) presented simultaneously on a screen.

- Trial Structure:

  - Fixation cross (2s).

  - All stimuli appear (1s cueing).

  - Participant focuses on one target (5s).

  - Rest (3s).

- Task Instruction: Focus gaze and attention on the target corresponding to the desired BCI output.

- Data Acquisition: Record 15-20 trials per frequency.

- EEG Preprocessing for EEGNet:

  - Bandpass filter 4-50 Hz to include harmonics.

  - Epoch the 5s focusing period.

  - Consider minimal processing; EEGNet learns from raw data.

  - Label data by target frequency.
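Before training a network on SSVEP epochs, it can help to confirm that the labels match the spectral content. The sketch below is a simple FFT-based check, not part of EEGNet: it picks the candidate frequency with the most power at its fundamental and second harmonic. The synthetic epoch and the function name are assumptions for the example.

```python
import numpy as np

def dominant_stimulus(epoch, fs, candidates, n_harmonics=2):
    """Return the candidate frequency with the most channel-averaged power."""
    spectrum = np.abs(np.fft.rfft(epoch, axis=-1)) ** 2
    freqs = np.fft.rfftfreq(epoch.shape[-1], d=1.0 / fs)
    scores = []
    for f in candidates:
        score = 0.0
        for h in range(1, n_harmonics + 1):
            bin_idx = np.argmin(np.abs(freqs - h * f))   # nearest FFT bin
            score += spectrum[..., bin_idx].mean()
        scores.append(score)
    return candidates[int(np.argmax(scores))]

fs = 256
t = np.arange(5 * fs) / fs                               # 5 s focusing period
rng = np.random.default_rng(0)
ssvep = np.sin(2 * np.pi * 12 * t) + 0.5 * np.sin(2 * np.pi * 24 * t)
epoch = ssvep + rng.standard_normal((4, t.size))         # 4 synthetic occipital channels

detected = dominant_stimulus(epoch, fs, candidates=[8.0, 10.0, 12.0, 15.0])
print(detected)
```

If this check disagrees with the labels on a large share of trials, the problem is more likely in the markers or the stimulus timing than in the model.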

Key Quantitative Data (SSVEP)

| Parameter | Typical Value / Range | Notes for EEGNet |

|---|---|---|

| Stimulus Frequencies | 5-40 Hz (screen participants for photosensitivity before using flicker) | Directly maps to spectral features. |

| Response Latency | Entrainment within ~0.5s | Model captures temporal dynamics. |

| Key Electrode Sites | Oz, O1, O2, POz | Spatial filters localize visual cortex activity. |

| Optimal Epoch Length | 3-5 seconds | Balances SNR and information transfer rate. |

| Typical Accuracy (EEGNet) | 90-99% (high SNR conditions) | On benchmark datasets. |
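The trade-off between epoch length and information transfer rate (ITR) noted in the table is usually quantified with the Wolpaw formula. The function below is a small sketch of that calculation; treating at-or-below-chance accuracy as zero ITR is a convention adopted here, and the selection time passed in is an assumption that should include any cueing and rest time actually needed per selection.

```python
import math

def itr_bits_per_min(n_classes, accuracy, trial_seconds):
    """Wolpaw information transfer rate in bits per minute."""
    if accuracy <= 1.0 / n_classes:
        return 0.0                      # at or below chance: report zero
    bits = math.log2(n_classes)
    if accuracy < 1.0:
        bits += accuracy * math.log2(accuracy)
        bits += (1 - accuracy) * math.log2((1 - accuracy) / (n_classes - 1))
    return bits * 60.0 / trial_seconds

# 4-target BCI: compare a 5 s and a 3 s selection time at hypothetical accuracies.
print(itr_bits_per_min(4, 0.95, 5.0))
print(itr_bits_per_min(4, 0.90, 3.0))
```

A shorter epoch can raise ITR even when accuracy drops slightly, which is why the epoch length is worth tuning rather than fixing at the longest value.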

General Event-Related Potentials (ERPs)

ERPs are stereotyped neural responses to sensory, cognitive, or motor events. This category encompasses N200, N400, error-related negativity (ERN), and others beyond P300.

EEGNet Compatibility

EEGNet's generic architecture makes it a universal tool for classifying various ERP types by learning their distinct spatiotemporal fingerprints.

Experimental Protocol for Error-Related Negativity (ERN)

- Participant Setup: Standard EEG setup (32+ channels).

- Task Design: Use a speeded reaction-time task prone to errors (e.g., Flanker or Go/No-Go task).

- Trial Structure:

  - Cue/preparation period.

  - Imperative stimulus requiring rapid response.

  - Feedback (if used).

- Task Instruction: Emphasize speed and accuracy. ERN is elicited by commission errors.

- Data Acquisition: Record a sufficient number of error trials (often 30+), which requires task parameter tuning.

- EEG Preprocessing for EEGNet:

  - Filter 1-30 Hz.

  - Epoch from -500ms to +500ms around response (button press).

  - Baseline correct using pre-response interval.

  - Artifact removal.

  - Label epochs as "Error" vs. "Correct".

Key Quantitative Data (ERN)

| Parameter | Typical Value / Range | Notes for EEGNet |

|---|---|---|

| Latency | 50-150 ms post-error response | Critical temporal landmark. |

| Amplitude | 5-15 µV negative deflection at fronto-central (FCz) sites | Spatial focus. |

| Key Electrode Sites | FCz, Cz, Fz | Model learns fronto-central topography. |

| Error Trial Requirement | 30+ for training | Can be challenging to acquire. |

[Figure: ERP Sequence in a Cognitive Task]

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in BCI/EEG Research |

|---|---|

| High-Density EEG System (e.g., 64-256 channels) | Acquires detailed spatial data; essential for source localization and high-resolution spatial filtering for EEGNet input. |

| Active Electrodes (Ag/AgCl) | Provides high signal quality with lower impedance, reducing preparation time and noise. |

| Conductive Electrode Gel/Paste | Ensures stable, low-impedance electrical contact between scalp and electrode. |

| EEG Data Acquisition Software (e.g., BrainVision Recorder) | Controls hardware, visualizes real-time data, and records raw data files for offline analysis with EEGNet. |

| Stimulus Presentation Software (e.g., PsychoPy, Presentation) | Precisely controls timing and sequence of paradigm events, crucial for epoch extraction. |

| EEG Preprocessing Toolbox (e.g., EEGLAB, MNE-Python) | Performs filtering, epoching, artifact removal, and ICA; prepares clean data for EEGNet. |

| Deep Learning Framework (e.g., TensorFlow, PyTorch) | Provides environment to implement, train, and test EEGNet models. |

| GPU Computing Resource | Accelerates the training of EEGNet models, enabling rapid experimentation. |

EEGNet is a compact convolutional neural network architecture designed specifically for EEG-based brain-computer interfaces (BCIs). Its efficiency in learning robust features from limited EEG data makes it a cornerstone for both clinical neuroscience and neuropharmacological research. This document provides application notes and experimental protocols for implementing EEGNet within a thesis focused on P300 and error-related negativity (ERN) detection, with applications in cognitive state monitoring and drug efficacy assessment.

Core EEGNet Architecture & Implementation Protocol

Protocol 2.1: Model Implementation (Python with PyTorch)

Key Parameters: Input shape (Channels C, Timepoints T), Number of temporal filters (F1), Depth multiplier (D), Pointwise filters (F2). For a standard P300 paradigm (16 channels, 1 s epochs at 128 Hz, i.e., 128 samples), use C=16, T=128, F1=8, D=2, F2=16.
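A minimal PyTorch sketch of a model with these parameters is shown below, sized for a 1 s epoch at 128 Hz (128 samples) on 16 channels. It follows the layer order described in this guide but is not the reference implementation: the class layout, the padding choices, and the omission of the max-norm weight constraints are assumptions of this example. The model returns logits, since `nn.CrossEntropyLoss` applies the softmax internally.

```python
import torch
import torch.nn as nn

class EEGNet(nn.Module):
    """Compact EEGNet-style model; input shape (batch, 1, channels, samples)."""

    def __init__(self, n_channels=16, n_samples=128, n_classes=2,
                 F1=8, D=2, F2=16, kernel_length=64, dropout=0.5):
        super().__init__()
        self.block1 = nn.Sequential(
            # Temporal convolution: learns frequency filters.
            nn.Conv2d(1, F1, (1, kernel_length),
                      padding=(0, kernel_length // 2), bias=False),
            nn.BatchNorm2d(F1),
            # Depthwise convolution: D spatial filters per temporal filter.
            nn.Conv2d(F1, F1 * D, (n_channels, 1), groups=F1, bias=False),
            nn.BatchNorm2d(F1 * D),
            nn.ELU(),
            nn.AvgPool2d((1, 4)),
            nn.Dropout(dropout),
        )
        self.block2 = nn.Sequential(
            # Separable convolution = depthwise (per map) + pointwise (mixing).
            nn.Conv2d(F1 * D, F1 * D, (1, 16), padding=(0, 8),
                      groups=F1 * D, bias=False),
            nn.Conv2d(F1 * D, F2, 1, bias=False),
            nn.BatchNorm2d(F2),
            nn.ELU(),
            nn.AvgPool2d((1, 8)),
            nn.Dropout(dropout),
        )
        # Infer the flattened size with a dry run in eval mode, so the
        # batch-norm running statistics are not touched.
        self.eval()
        with torch.no_grad():
            n_flat = self._features(torch.zeros(1, 1, n_channels, n_samples)).shape[1]
        self.train()
        self.classifier = nn.Linear(n_flat, n_classes)

    def _features(self, x):
        return self.block2(self.block1(x)).flatten(start_dim=1)

    def forward(self, x):
        return self.classifier(self._features(x))   # logits

model = EEGNet()
x = torch.randn(4, 1, 16, 128)        # batch of 4 synthetic epochs
logits = model(x)
n_params = sum(p.numel() for p in model.parameters())
print(logits.shape, n_params)
```

Inferring the classifier input size from a dry run avoids hand-computing it whenever the epoch length or pooling sizes change.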

Experimental Protocols for BCI Paradigms

Protocol 3.1: P300 Oddball Data Preprocessing

- Data Acquisition: Record EEG using a 16-32 channel cap (10-20 system) during visual/auditory oddball task. Target stimuli probability: 20%. Sampling rate: ≥128 Hz.

- Filtering: Apply a 4th-order Butterworth bandpass filter (1-30 Hz) and a 50/60 Hz notch filter.

- Epoching: Segment data from -200 ms pre-stimulus to 800 ms post-stimulus. Baseline correct using pre-stimulus interval.

- Artifact Removal: Apply Independent Component Analysis (ICA) for ocular artifact removal or automatic rejection at ±75 µV.

- Normalization: Apply per-channel standardization (z-score).

- Train/Test Split: Use session- or subject-wise k-fold cross-validation (k=5).
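The automatic rejection option in the artifact-removal step amounts to a peak-amplitude threshold per epoch. A NumPy sketch follows; the synthetic epochs, the injected artifacts, and the function name are illustrative, and the data are assumed to be in microvolts.

```python
import numpy as np

def reject_by_amplitude(epochs_uv, labels, threshold_uv=75.0):
    """Drop epochs whose absolute amplitude exceeds the threshold on any channel."""
    peak = np.abs(epochs_uv).max(axis=(1, 2))
    keep = peak <= threshold_uv
    return epochs_uv[keep], labels[keep], keep

rng = np.random.default_rng(0)
epochs_uv = rng.normal(0.0, 5.0, size=(10, 16, 128))   # synthetic epochs in µV
labels = np.array([1, 0, 0, 0, 0, 1, 0, 0, 0, 0])
epochs_uv[2, 0, 40:60] += 120.0                        # simulated blink on one channel
epochs_uv[7, 3, 10:30] -= 120.0                        # simulated drift on another

clean, clean_labels, keep = reject_by_amplitude(epochs_uv, labels)
print(clean.shape, keep)
```

Apply the threshold after baseline correction and before z-scoring, since standardized data no longer carry microvolt units.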

Protocol 3.2: Error-Related Negativity (ERN) for Cognitive Monitoring

- Task: Use a flanker or Go/No-Go task designed to induce response conflicts and errors.

- Epoching: Segment from -500 ms pre-response to 500 ms post-response. Align to incorrect response (error trials) and correct response.

- Channel Selection: Focus on FCz and Cz electrodes. Re-reference to linked mastoids or average reference.

- Filtering: Bandpass filter 1-20 Hz. ERN manifests in the theta band (4-7 Hz).

- Labeling: Error trials vs. Correct trials. Ensure balanced classes via subsampling or synthetic oversampling (SMOTE).
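The subsampling option for class balancing can be sketched as random undersampling of the majority class. The helper name and the 30/150 trial counts below are illustrative; balancing should be applied to the training split only, so that test-set prevalence stays realistic.

```python
import numpy as np

def undersample_majority(labels, rng):
    """Return sorted indices that keep an equal number of trials per class."""
    labels = np.asarray(labels)
    classes, counts = np.unique(labels, return_counts=True)
    n_keep = counts.min()
    keep = np.concatenate([
        rng.choice(np.flatnonzero(labels == c), size=n_keep, replace=False)
        for c in classes
    ])
    return np.sort(keep)

rng = np.random.default_rng(0)
labels = np.array([1] * 30 + [0] * 150)      # 1 = error trial, 0 = correct trial
keep = undersample_majority(labels, rng)
balanced = labels[keep]
print(len(keep), balanced.sum())
```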

Table 1: Typical Dataset Statistics for BCI Paradigms

| Paradigm | Channels | Sampling Rate (Hz) | Epoch Length (ms) | Trial Count (per Subject) | Target Class Prevalence |

|---|---|---|---|---|---|

| Visual P300 | 16-32 | 128-256 | 1000 (-200 to +800) | ~40 Target, ~160 Non-Target | ~20% |

| ERN (Flanker / Go/No-Go) | 32-64 | 512-1000 | 1000 (-500 to +500) | ~30 Error, ~150 Correct | ~15-20% |

Application in Neuropharmacology: Protocol for Drug Efficacy

Protocol 4.1: Assessing Pharmacological Modulation of EEG Features

- Objective: Quantify drug-induced changes in cognitive ERPs using EEGNet-derived features.

- Design: Randomized, double-blind, placebo-controlled, crossover study.

- Subjects: N=20 healthy adults or targeted patient population.

- Procedure (treatment order randomized and counterbalanced across subjects, as required by the crossover design):

  - Placebo Session: Administer placebo. After the time corresponding to pharmacokinetic Tmax, conduct P300/ERN task with EEG recording.

  - Washout Period: Minimum 5 half-lives of the investigational drug.

  - Active Session: Administer therapeutic dose of drug. Repeat EEG task at Tmax.

- Preprocessing: Apply Protocol 3.1 or 3.2 uniformly to all recordings.

- Feature Extraction: Use the trained EEGNet's penultimate layer output as a high-dimensional feature vector for each trial.

- Statistical Analysis: Reduce the feature dimensionality with PCA for statistical testing; use t-SNE or UMAP for visualization only. Compare drug and placebo conditions using multivariate analysis of variance (MANOVA) on the leading principal components. Correlate with behavioral measures (reaction time, accuracy).
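
The projection of trial-wise feature vectors onto principal components can be sketched with an SVD. This is only the dimensionality-reduction step, under the assumption that penultimate-layer features have already been extracted into a `(n_trials, n_features)` array; the 16-dimensional synthetic features and the simulated drug effect are hypothetical, and the MANOVA itself is not shown.

```python
import numpy as np

def pca_project(features, n_components=5):
    """Project trial-wise feature vectors onto their leading principal components."""
    centered = features - features.mean(axis=0, keepdims=True)
    # Rows of vt are the principal axes, ordered by decreasing singular value
    _, s, vt = np.linalg.svd(centered, full_matrices=False)
    explained = (s ** 2) / np.sum(s ** 2)
    return centered @ vt[:n_components].T, explained[:n_components]

rng = np.random.default_rng(0)
placebo = rng.normal(0.0, 1.0, size=(100, 16))      # hypothetical 16-D features
drug = rng.normal(0.0, 1.0, size=(100, 16))
drug[:, 0] += 3.0                                    # simulated drug effect on one feature
scores, explained = pca_project(np.vstack([placebo, drug]))
pc1_shift = abs(scores[100:, 0].mean() - scores[:100, 0].mean())
print("explained variance ratios:", np.round(explained, 3))
print("PC1 separation between conditions:", round(float(pc1_shift), 2))
```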

Table 2: Key Research Reagent Solutions & Materials

| Item | Function in EEG/BCI Research | Example Vendor/Product |

|---|---|---|

| High-Density EEG System | High-fidelity acquisition of neural electrical activity. Essential for source localization and detailed spectral analysis. | Biosemi ActiveTwo, Brain Products actiCAP, EGI Geodesic |

| Conductive Electrolyte Gel | Reduces impedance at the scalp-electrode interface (< 10 kΩ), critical for signal quality and noise reduction. | SignaGel, SuperVisc, Abralyt HiCl |

| ICA-based Artifact Removal Software | Algorithmically isolates and removes ocular, cardiac, and muscular artifacts from continuous EEG data. | EEGLAB (ADJUST/ICLabel), BrainVision Analyzer, MNE-Python |

| BCI Stimulus Presentation Software | Precise, time-locked presentation of visual/auditory paradigms. Allows for event marker synchronization with EEG. | Psychtoolbox, Presentation, E-Prime |

| Deep Learning Framework | Provides flexible environment for implementing, training, and evaluating EEGNet and its variants. | PyTorch, TensorFlow with Keras |

Visualization of Workflows

Title: EEGNet Model Training and Application Workflow

Title: Drug Efficacy Study Using EEGNet Features

A Step-by-Step Guide to Implementing EEGNet for Your BCI Project: Code and Best Practices

This document provides the foundational computational environment setup for a broader thesis project implementing EEGNet—a compact convolutional neural network architecture—for Brain-Computer Interface (BCI) research. This setup is crucial for preprocessing electroencephalography (EEG) data, developing deep learning models, and analyzing neural correlates pertinent to cognitive state decoding and neuropharmacological intervention assessment.

System Prerequisites & Quantitative Comparison

The following tables summarize the recommended and minimum system specifications, based on software requirements as of October 2023. NVIDIA GPUs are strongly preferred for deep learning in BCI work because GPU acceleration in TensorFlow and PyTorch relies on CUDA.

Table 1: Recommended System Specifications for EEGNet BCI Research

| Component | Minimum Specification | Recommended Specification | Notes for BCI Research |

|---|---|---|---|

| Operating System | Windows 10, macOS 10.15+, Ubuntu 18.04+ | Ubuntu 22.04 LTS | Linux offers best compatibility and performance for TensorFlow/PyTorch. |

| CPU | 4-core x86_64 processor | 8+ core CPU (Intel i7/i9, AMD Ryzen 7/9) | Critical for data preprocessing and augmentation in MNE-Python. |

| RAM | 8 GB | 32 GB or more | Large EEG datasets (e.g., from high-density arrays) require significant memory. |

| GPU | Integrated GPU | NVIDIA GPU with 8+ GB VRAM (RTX 3070+, A100 for cloud) | Shortens EEGNet training considerably; the compact model also trains on CPU, only more slowly. CUDA is required for GPU acceleration. |

| Storage | 10 GB free space | 50+ GB free SSD | SSDs drastically improve data loading times for large epoch sets. |

Table 2: Core Software Version Compatibility Matrix

| Software | Recommended Version | Minimum Version | Python Version | Key Dependency |

|---|---|---|---|---|

| Python | 3.10.12 | 3.8 | N/A | Base interpreter. |

| TensorFlow | 2.13.0 | 2.8.0 | 3.8-3.11 | For Keras-based EEGNet implementation. |

| PyTorch | 2.0.1 | 1.12.1 | 3.8-3.11 | For flexible, custom EEGNet modifications. |

| MNE-Python | 1.4.2 | 1.0.3 | 3.8-3.11 | Core EEG processing library. |

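| CUDA Toolkit | 11.8 | 11.8 | N/A | Required for GPU acceleration with NVIDIA. The official TensorFlow 2.13 and PyTorch 2.0.1 builds target CUDA 11.8; CUDA 12.x requires newer framework releases. |

| cuDNN | 8.6 | 8.6 | N/A | GPU-accelerated deep learning primitives. TensorFlow 2.13 is built against cuDNN 8.6. |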

Detailed Installation Protocols

Protocol 3.1: Clean Python Environment Setup

Objective: Create an isolated Python environment to prevent dependency conflicts.

- Install Miniconda (recommended) or Anaconda from the official repository.

- Open a terminal (or Anaconda Prompt on Windows).

- Create a new environment named eegnet_bci: conda create -n eegnet_bci python=3.10

- Activate the environment: conda activate eegnet_bci

Protocol 3.2: Core Scientific Stack Installation

Objective: Install foundational numerical and data handling libraries.

- Within the activated eegnet_bci environment, execute:

Protocol 3.3: Deep Learning Framework Installation

Objective: Install either TensorFlow or PyTorch with GPU support.

Option A: TensorFlow & Keras Installation (with GPU support)

- Verify NVIDIA GPU driver installation (nvidia-smi).

- Install the CUDA Toolkit and cuDNN versions that match your TensorFlow release via conda (TensorFlow 2.13 is built against CUDA 11.8 and cuDNN 8.6):

- Install TensorFlow:

- Verification Test:

Option B: PyTorch Installation (with GPU support)

- Visit pytorch.org for the latest OS-specific command.

- For a CUDA 12.1 build (published for PyTorch 2.1 and later), use:

- Verification Test:

Protocol 3.4: MNE-Python & BCI-Specific Libraries

Objective: Install EEG processing and auxiliary BCI research tools.

- Install MNE-Python and its full feature set:

- Install additional packages for BCI research and EEGNet:

- Verification Test:

Environment Validation Workflow

The following diagram illustrates the sequential verification steps to confirm a correct setup.

Title: Environment Validation Workflow for EEGNet Setup
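
One way to script the verification sequence is sketched below using only the standard library, so it runs even when a framework is missing. The package list and the `check_environment` helper are assumptions for illustration; adjust the names to the frameworks you actually installed.

```python
import importlib.metadata as metadata
import importlib.util
import sys

# Import name -> distribution name; this list is an assumption, not a fixed requirement.
PACKAGES = {"numpy": "numpy", "scipy": "scipy", "mne": "mne",
            "tensorflow": "tensorflow", "torch": "torch"}

def check_environment(packages=PACKAGES, min_python=(3, 8)):
    """Return a dict mapping each import name to its installed version or None."""
    report = {"python_ok": sys.version_info[:2] >= min_python}
    for import_name, dist_name in packages.items():
        if importlib.util.find_spec(import_name) is None:
            report[import_name] = None          # not installed in this environment
            continue
        try:
            report[import_name] = metadata.version(dist_name)
        except metadata.PackageNotFoundError:
            report[import_name] = "unknown"     # importable, but no metadata found
    return report

report = check_environment()
for name, status in report.items():
    print(f"{name:12s} {status}")
```

Once the frameworks import cleanly, GPU visibility can be confirmed with `tf.config.list_physical_devices('GPU')` in TensorFlow or `torch.cuda.is_available()` in PyTorch.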

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Computational Research Reagents for EEGNet BCI Research

| Item | Function in BCI Research | Example/Note |

|---|---|---|

| Python Environment | Isolated container for all dependencies, ensuring reproducible analyses. | Conda environment eegnet_bci. |

| TensorFlow/PyTorch | Deep learning frameworks for building, training, and deploying the EEGNet model. | PyTorch preferred for dynamic computation graphs. |

| MNE-Python | Primary toolbox for EEG data I/O, preprocessing, visualization, and source estimation. | Used for filtering, epoching, and ICA-based artifact removal. |

| GPU with CUDA | Hardware accelerator for dramatically reducing deep neural network training time. | NVIDIA RTX 4090 for local work; Google Colab A100 for cloud. |

| EEG Datasets | Standardized, often public, data for model development and benchmarking. | BCI Competition IV 2a, PhysioNet MI, TUH EEG Corpus. |

| MOABB | Mother of all BCI Benchmarks: a framework for fair algorithmic comparison on EEG data. | Essential for benchmarking EEGNet against state-of-the-art. |

| NumPy/SciPy | Foundational libraries for numerical operations and signal processing routines. | Underpin MNE and deep learning frameworks. |

| scikit-learn | Provides standard machine learning models, utilities for cross-validation, and metrics. | Used for comparative traditional ML analysis. |

| Jupyter Notebook | Interactive development environment for exploratory data analysis and prototyping. | Facilitates iterative research and visualization. |

| Git & GitHub | Version control system to track code changes, collaborate, and share research. | Critical for reproducibility and open science. |

Standardized Experimental Protocol for Initial EEGNet Validation

Protocol 6.1: Benchmark Dataset Loading & Preprocessing with MNE

Objective: To prepare a standard Motor Imagery (MI) EEG dataset for EEGNet training.

- Dataset Selection: Download the BCI Competition IV 2a dataset via MOABB.

- Data Loading: Use MNE's read_epochs_eeglab or MOABB's paradigm interface.

- Preprocessing Pipeline:

- Filtering: Apply a bandpass filter (4-38 Hz) using mne.io.Raw.filter().

- Resampling: Downsample to 128 Hz to reduce computational load (mne.io.Raw.resample()).

- Epoching: Extract trials from -1 s to 4 s relative to cue onset.

- Baseline Correction: Remove DC offset using the pre-cue interval.

- Artifact Removal: Apply Independent Component Analysis (ICA) to remove ocular artifacts (mne.preprocessing.ICA).

- Channel Selection: Retain only the 22 EEG channels (the dataset's 3 EOG channels are dropped).

- Data Export: Save processed epochs in NumPy array format: shape (n_trials, n_channels, n_timepoints).

Protocol 6.2: EEGNet Model Training & Validation

Objective: To train and cross-validate the EEGNet model on the preprocessed MI data.

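- Input Formatting: Standardize data per channel (z-score). Format as (n_trials, 1, n_channels, n_timepoints) for a channels-first (PyTorch) EEGNet; the reference Keras implementation expects (n_trials, n_channels, n_timepoints, 1).

- Model Definition: Implement EEGNet architecture (Lawhern et al., 2018) in Keras or PyTorch.

- Block 1: Temporal Convolution -> Batch Normalization -> Depthwise (Spatial) Convolution -> Batch Normalization -> ELU -> Average Pooling -> Dropout.

- Block 2: Separable Convolution -> Batch Normalization -> ELU -> Average Pooling -> Dropout.

- Classification: Flatten -> Dense Softmax Layer.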

- Training Regimen:

- Optimizer: Adam (learning rate=0.001).

- Loss Function: Categorical Cross-Entropy.

- Validation: 5-fold stratified cross-validation.

- Regularization: Dropout (p=0.5), L2 weight decay.

- Epochs: 300 with early stopping (patience=30).

- Performance Metric: Report per-fold and average Cohen's Kappa score on the validation set.
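
Cohen's kappa corrects raw accuracy for chance agreement, which matters for 4-class motor imagery where chance is 25%. A minimal NumPy sketch of the metric follows; the function name and the toy labels are illustrative.

```python
import numpy as np

def cohen_kappa(y_true, y_pred, n_classes):
    """Cohen's kappa: agreement between labels and predictions, corrected for chance."""
    cm = np.zeros((n_classes, n_classes), dtype=float)
    np.add.at(cm, (y_true, y_pred), 1)            # build the confusion matrix
    n = cm.sum()
    p_observed = np.trace(cm) / n                 # plain accuracy
    p_expected = (cm.sum(axis=0) * cm.sum(axis=1)).sum() / n ** 2
    return (p_observed - p_expected) / (1.0 - p_expected)

y_true = np.array([0, 0, 1, 1, 2, 2, 3, 3])
y_pred = np.array([0, 0, 1, 1, 2, 2, 3, 0])      # one class-3 trial misclassified
kappa = cohen_kappa(y_true, y_pred, n_classes=4)
print(f"accuracy = {np.mean(y_true == y_pred):.3f}, kappa = {kappa:.3f}")
```

Here the accuracy is 0.875 and the expected chance agreement is 0.25, so kappa is (0.875 - 0.25) / 0.75, roughly 0.833.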

The following diagram outlines the core EEGNet architecture as implemented for BCI classification.

Title: EEGNet Architecture for Motor Imagery Classification

This protocol details the construction of a robust data pipeline for electroencephalography (EEG) data, a critical precursor to implementing EEGNet for Brain-Computer Interface (BCI) research. Within a broader thesis on EEGNet, this pipeline ensures that input data is standardized, artifact-reduced, and properly structured to train convolutional neural networks for classification tasks such as motor imagery, event-related potential detection, or cognitive state monitoring—with direct applications in neuroscientific research and clinical drug development for neurological disorders.

Data Loading: Acquisition and Initial Handling

The initial step involves loading raw EEG data from various acquisition systems into a programmable environment (typically Python). Common file formats include .edf, .bdf, .set (EEGLAB), .vhdr (BrainVision), and .xdf (Lab Streaming Layer).

- Protocol 2.1: Loading Data with MNE-Python

Table 1: Common EEG File Formats and MNE-Python Functions

| Format | Extension | MNE-Python Loading Function | Notes |

|---|---|---|---|

| European Data Format | .edf | mne.io.read_raw_edf() | Clinical standard, limited metadata. |

| BrainVision | .vhdr/.eeg/.vmrk | mne.io.read_raw_brainvision() | Common in research, rich metadata. |

| EEGLAB | .set/.fdt | mne.io.read_raw_eeglab() | Requires MATLAB file support. |

| BioSemi | .bdf | mne.io.read_raw_bdf() | 24-bit format, supports many channels. |

| Lab Streaming Layer | .xdf | pyxdf.load_xdf(), then wrap the stream in mne.io.RawArray | For synchronized multimodal data. MNE-Python has no built-in XDF reader. |

Epoching: Segmenting Data into Events

Epochs are time-locked segments extracted around specific events (e.g., stimulus onset, response). This creates the trials used for machine learning.

- Protocol 3.1: Event Identification and Epoch Extraction

- Extract Events: Locate event markers from stimulus channels or annotation files.

- Define Epochs: Specify time window around each event.

- Epoch Metadata: For complex paradigms, attach metadata to epochs for flexible grouping.

Table 2: Typical Epoch Parameters for Common BCI Paradigms

| Paradigm | Event Trigger | Typical Epoch Window (tmin, tmax) s | Baseline Correction | Expected Trial Count |

|---|---|---|---|---|

| P300 Oddball | Visual/Auditory Stimulus | (-0.1, 0.8) | (None, 0) or (-0.1, 0) | ~30-40 targets/subject |

| Motor Imagery | Cue Instruction | (-2.0, 4.0) | (None, 0) | 80-120 trials/class |

| Steady-State Visually Evoked Potential | SSVEP Onset | (-0.5, 3.0) | (None, 0) | Multiple per frequency |

| Mismatch Negativity | Deviant Tone | (-0.1, 0.4) | (-0.1, 0) | Hundreds of standards/deviants |

Preprocessing Pipeline

Preprocessing aims to remove biological and technical artifacts while preserving neural signals of interest. The order of operations is crucial.

- Protocol 4.1: Standardized Preprocessing Workflow

- Filtering: Apply bandpass filter (e.g., 1-40 Hz) to remove slow drifts and high-frequency noise.

- Resampling: Downsample to a rate 3-4x the highest frequency of interest to reduce computational load.

- Bad Channel/Interval Identification: Mark channels with excessive noise or flat signals.

- Re-referencing: Re-reference to the common average or to linked mastoids.

- Artifact Removal: Apply Independent Component Analysis (ICA) to remove ocular (blinks, saccades) and cardiac artifacts.

- Epoching: (As per Section 3) Segment the cleaned continuous data.

- Baseline Correction: Remove DC offset per epoch.

- Epoch Rejection: Automatically reject epochs whose peak-to-peak amplitude exceeds a threshold (e.g., 150 µV).
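
Two of the steps in this workflow, common average re-referencing and peak-to-peak epoch rejection, reduce to a few array operations. The sketch below uses synthetic epochs and a 150 µV threshold; the function names and the `(n_epochs, n_channels, n_times)` layout are assumptions. It applies the reference to already-epoched data for brevity, which gives the same result as referencing the continuous recording because the operation is computed sample by sample.

```python
import numpy as np

def common_average_reference(data):
    """Subtract the instantaneous mean across channels (axis -2) from every channel."""
    return data - data.mean(axis=-2, keepdims=True)

def reject_by_peak_to_peak(epochs, threshold_uv=150.0):
    """Drop epochs in which any channel's max-minus-min exceeds the threshold."""
    ptp = epochs.max(axis=2) - epochs.min(axis=2)     # (n_epochs, n_channels)
    keep = (ptp <= threshold_uv).all(axis=1)
    return epochs[keep], keep

rng = np.random.default_rng(0)
epochs = rng.normal(0.0, 10.0, size=(20, 8, 256))    # synthetic epochs in microvolts
epochs[5, 2, 100] += 400.0                            # simulated spike artifact
epochs = common_average_reference(epochs)
clean, keep = reject_by_peak_to_peak(epochs)
print(f"kept {keep.sum()} of {len(keep)} epochs")
```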

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Software for EEG Pipeline Construction

| Item | Function/Application | Example/Note |

|---|---|---|

| MNE-Python | Open-source Python package for exploring, visualizing, and analyzing human neurophysiological data. Forms the core of the pipeline. | v1.6.0+ |

| scikit-learn | Provides tools for data splitting (train/test), standardization, and simple baseline machine learning models. | Required for pre-EEGNet validation. |

| EEGNet PyTorch/TF | The target deep learning model implementation. The pipeline output must match its input dimensions. | (Trials, 1, Channels, Timepoints) in PyTorch; (Trials, Channels, Timepoints, 1) in the reference Keras code. |

| BrainVision Recorder | Common commercial software for EEG data acquisition, generating .vhdr files. | Alternative: LiveAmp, ANT Neuro, g.tec. |

| International 10-20 System Cap | Standardized electrode placement ensuring reproducibility across subjects and studies. | 32, 64, or 128 channels typical. |

| Conductive Electrolyte Gel | Reduces impedance at the electrode-scalp interface, improving signal quality. | Abralyt HiCl, Signa Gel. |

| High-Resolution ADC | Analog-to-digital converter in the amplifier; 24-bit resolution is now standard for wide dynamic range. | Key hardware specification. |

| Lab Streaming Layer (LSL) | Protocol for unified collection of time-series data, crucial for synchronizing EEG with stimulus markers. | |

Workflow and Dataflow Visualization

EEG Processing Pipeline for EEGNet

Preprocessing Signal Flow

Within the broader thesis on optimized deep learning for Brain-Computer Interface (BCI) research, the EEGNet architecture represents a pivotal model. It is designed specifically for EEG signal classification, balancing performance with parameter efficiency for potential real-time applications. This protocol details its implementation, enabling reproducible research in cognitive state monitoring and neuropharmacological response assessment.

Core Architecture and Layer-by-Layer Implementation

The following code provides a complete, functional implementation of EEGNet in TensorFlow/Keras, adhering to the original specification with clear, commented layers.

Table 1: Reported EEGNet Performance on Benchmark BCI Datasets

| Dataset (BCI Paradigm) | Number of Subjects | Input Shape (Chans x Samples) | Reported Accuracy (Mean ± Std) | Key Comparison (vs. Traditional Methods) |

|---|---|---|---|---|

| BCI Competition IV-2a (Motor Imagery) | 9 | 22 x 1125 | 0.728 ± 0.16 | Outperformed Filter Bank Common Spatial Patterns (FBCSP) by ~4% |

| BCI Competition II-III (P300 Speller) | 2 | 64 x 256 | 0.885 ± 0.08 | Superior to Linear Discriminant Analysis (LDA) with fewer pre-processing steps |

| ERN (Error-Related Negativity) | 26 | 56 x 256 | 0.812 ± 0.12 | Showed robustness to per-subject variance without extensive calibration |

| SSVEP (High-Frequency) | 12 | 8 x 256 | 0.901 ± 0.05 | Competitive with Canonical Correlation Analysis (CCA) with better noise tolerance |

Experimental Protocol for Model Validation

Title: Protocol for Cross-Subject EEGNet Validation in Pharmaco-EEG Studies

Objective: To evaluate EEGNet's capability to detect drug-induced changes in EEG patterns across a cohort.

Materials: See "The Scientist's Toolkit" below.

Methodology:

Data Acquisition & Preprocessing:

- Record resting-state EEG from N=30 subjects pre- and post-administration of a neuroactive compound (e.g., a sedative) and placebo (double-blind, crossover design).

- Apply a bandpass filter (1-40 Hz) and a notch filter (50/60 Hz).

- Segment data into 4-second epochs (e.g., 512 samples at 128 Hz).

- Reference to common average. Apply artifact removal (e.g., ICA or automated rejection).

- Standardize per channel by subtracting mean and dividing by standard deviation.

Data Partitioning:

- Subject-wise split: Use data from 20 subjects for training, 5 for validation, and 5 for held-out testing.

- Label epochs as "Pre-Drug", "Post-Drug", or "Post-Placebo".

Model Training:

- Initialize EEGNet (nb_classes=3, Chans=[Your Channel Count], Samples=512).

- Use Adam optimizer (lr=1e-3), categorical crossentropy loss.

- Implement batch size of 64. Train for 300 epochs with early stopping (patience=30) based on validation loss.

- Apply data augmentation (e.g., small Gaussian noise, channel dropout).
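
The two augmentations named above can be sketched as follows. The noise level, the drop probability, and the function name `augment_batch` are illustrative assumptions rather than values prescribed by the protocol.

```python
import numpy as np

def augment_batch(x, rng, noise_std=0.1, channel_drop_prob=0.1):
    """Add Gaussian noise and zero out random channels per trial.

    x: (n_trials, n_channels, n_times), assumed z-scored so noise_std is in SD units.
    """
    noisy = x + rng.normal(0.0, noise_std, size=x.shape)
    # One keep/drop decision per (trial, channel), broadcast over time
    keep = rng.random(size=x.shape[:2] + (1,)) >= channel_drop_prob
    return noisy * keep

rng = np.random.default_rng(42)
batch = rng.normal(size=(64, 32, 512))      # 64 four-second epochs at 128 Hz, 32 channels
augmented = augment_batch(batch, rng)
dropped_fraction = (augmented == 0).all(axis=2).mean()
print(f"fraction of channels zeroed: {dropped_fraction:.3f}")
```

Augmentation of this kind is applied to training batches only; validation and test epochs are left untouched.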

Evaluation & Analysis:

- Report per-subject and grand average test accuracy, precision, recall, and F1-score.

- Generate a confusion matrix across conditions.

- Perform Gradient-weighted Class Activation Mapping (Grad-CAM) on the first convolutional layer to visualize spatiotemporal features the model deems salient for each condition.

Title: EEGNet Validation Workflow for Pharmaco-EEG

The Scientist's Toolkit

Table 2: Essential Research Reagents & Materials for EEGNet Experiments

| Item / Solution | Specification / Purpose | Function in Protocol |

|---|---|---|

| High-Density EEG System | 64+ channels, >500 Hz sampling rate (e.g., BioSemi, EGI) | Raw neural data acquisition with sufficient spatial-temporal resolution. |

| Electroconductive Gel | Chloride-based, low impedance (<10 kΩ) | Ensures stable electrical connection between scalp and electrode. |

| EEG Preprocessing Suite | MATLAB EEGLAB/Python MNE | Standardized pipeline for filtering, artifact removal, and epoching. |

| Neuroactive Compound | e.g., Modafinil (stimulant) or Clonidine (sedative) | Pharmacological probe to induce measurable, specific changes in EEG dynamics. |

| Placebo Control | Matched inert substance (e.g., lactose pill) | Controls for physiological and psychological effects of the administration procedure. |

| GPU Computing Resource | NVIDIA GPU (8GB+ VRAM) with CUDA support | Accelerates model training and hyperparameter optimization for deep networks. |

| TensorFlow & Keras | Versions 2.10+ | Core deep learning framework for implementing and training EEGNet. |

| Grad-CAM Visualization Script | Custom Python script using tf-keras-vis | Interprets model decisions by highlighting contributive EEG features. |

Within a broader thesis investigating the optimization of EEGNet for brain-computer interface (BCI) applications, hyperparameter tuning is the critical process of systematically searching for the optimal model configuration. This protocol details the methodologies for tuning EEGNet's hyperparameters to maximize performance on specific EEG datasets, which is essential for both fundamental neuroscience research and applied contexts like neuropharmacology, where detecting subtle, drug-induced neural changes is paramount.

Key Hyperparameters for EEGNet: Definitions & Rationale

Kernel Length (Temporal): Length of the temporal convolution kernel. Must be aligned with the time-scale of relevant EEG features (e.g., event-related potentials, sensorimotor rhythms). Longer kernels may capture slower cortical potentials.

F1 (Number of Temporal Filters): Defines the depth of the temporal convolution layer. Increasing F1 allows the network to learn a more diverse set of temporal filters but risks overfitting.

D (Depth Multiplier): Controls the number of spatial filters per temporal filter in the depthwise convolution layer. Governs the model's capacity to learn complex spatial patterns from multiple EEG electrodes.

Learning Rate: The step size for weight updates during gradient descent optimization. Critically influences convergence speed and final performance.

Dropout Rate: Probability of randomly omitting units during training. A primary regularization technique to prevent overfitting, crucial for high-dimensional, low-sample-size EEG data.

Batch Size: Number of samples processed before the model is updated. Affects training stability and gradient estimation.

Optimizer Choice: Algorithm for updating weights (e.g., Adam, SGD). Determines the efficiency and path of convergence.
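
To see how kernel length, F1, and D drive model size, the trainable parameters can be counted layer by layer. The sketch below assumes the layer layout of the reference Keras implementation (bias-free convolutions, batch normalization with a scale and a shift per feature map, F2 = F1 x D, average pooling by 4 and then 8, and a separable kernel of length 16); the function name and the example input size of 22 channels x 256 samples are illustrative.

```python
def eegnet_trainable_params(n_channels, n_samples, n_classes,
                            F1=8, D=2, kernel_length=64, F2=None,
                            sep_kernel_length=16, pool1=4, pool2=8):
    """Count trainable parameters of an EEGNet-style model, layer by layer."""
    F2 = F1 * D if F2 is None else F2
    n_features = F2 * ((n_samples // pool1) // pool2)   # size after flattening
    layers = {
        "temporal_conv": F1 * kernel_length,             # bias-free 1 x kernel_length filters
        "batchnorm_1": 2 * F1,                           # scale and shift per feature map
        "depthwise_spatial_conv": F1 * D * n_channels,   # D spatial filters per temporal filter
        "batchnorm_2": 2 * F1 * D,
        "separable_depthwise": F1 * D * sep_kernel_length,
        "separable_pointwise": F1 * D * F2,
        "batchnorm_3": 2 * F2,
        "dense": n_features * n_classes + n_classes,     # weights plus biases
    }
    return layers, sum(layers.values())

layers, total = eegnet_trainable_params(n_channels=22, n_samples=256, n_classes=4)
for name, count in layers.items():
    print(f"{name:24s} {count:6d}")
print("total trainable parameters:", total)
```

Under these assumptions the whole network stays in the low thousands of parameters, which is why dropout and early stopping are usually enough regularization for small EEG datasets.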

Quantitative Hyperparameter Search Space & Typical Ranges

Table 1: Standard Search Space for EEGNet Hyperparameter Tuning

| Hyperparameter | Typical Search Range / Options | EEGNet-8,2 (BCI IV 2a) Baseline | Impact on Model & Training |

|---|---|---|---|

| Kernel Length (Temporal) | 32 - 128 (samples) | 64 | Temporal feature resolution |

| F1 (Temporal Filters) | 8 - 64 | 8 | Temporal feature map depth |

| D (Depth Multiplier) | 1 - 4 | 2 | Spatial filter capacity |

| Learning Rate | 1e-4 - 1e-2 | 1e-3 | Convergence speed/stability |

| Dropout Rate | 0.25 - 0.75 | 0.5 | Regularization strength |

| Batch Size | 16, 32, 64, 128 | 64 | Gradient estimation stability |

| Optimizer | Adam, SGD with Momentum, AdamW | Adam | Update rule efficiency |

| Weight Decay (L2) | 1e-5 - 1e-3 | 1e-4 | Parameter regularization |

Detailed Experimental Protocols for Hyperparameter Optimization

Protocol 4.1: Grid Search for Core Architectural Parameters

Objective: Exhaustively evaluate combinations of kernel length, F1, and D. Procedure:

- Define Discrete Sets: kernel_length ∈ {32, 64, 128}, F1 ∈ {8, 16, 32}, D ∈ {1, 2, 4}.

- Hold Other Parameters Constant: Set learning rate=1e-3, dropout=0.5, batch size=64, optimizer=Adam.

- Train & Validate: For each combination, train EEGNet on the training set for a fixed number of epochs (e.g., 300) using stratified k-fold cross-validation (k=5).

- Evaluate: Record the mean validation accuracy and loss across all folds for each hyperparameter set.

- Select: Choose the configuration yielding the highest mean validation accuracy.
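
The grid in this protocol has 3 x 3 x 3 = 27 configurations, which can be enumerated with `itertools.product`. In the sketch below, `dummy_cv_accuracy` is a hypothetical stand-in for "train EEGNet with 5-fold cross-validation and return the mean validation accuracy"; it exists only so the loop runs end to end.

```python
import itertools

GRID = {
    "kernel_length": [32, 64, 128],
    "F1": [8, 16, 32],
    "D": [1, 2, 4],
}

def grid_search(grid, evaluate):
    """Evaluate every combination in `grid`; return the best config and all results."""
    names = list(grid)
    results = []
    for values in itertools.product(*(grid[n] for n in names)):
        config = dict(zip(names, values))
        results.append((evaluate(config), config))
    best_score, best_config = max(results, key=lambda r: r[0])
    return best_config, best_score, results

def dummy_cv_accuracy(config):
    """Hypothetical placeholder for the mean 5-fold validation accuracy of EEGNet."""
    return (0.7 - 0.001 * abs(config["kernel_length"] - 64)
            - 0.002 * abs(config["F1"] - 8) - 0.01 * abs(config["D"] - 2))

best_config, best_score, results = grid_search(GRID, dummy_cv_accuracy)
print(len(results), "configurations evaluated; best:", best_config)
```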

Protocol 4.2: Bayesian Optimization for Continuous Parameters

Objective: Efficiently search for optimal learning rate, dropout rate, and weight decay. Procedure:

- Define Search Space: learning_rate ~ LogUniform(1e-4, 1e-2), dropout_rate ~ Uniform(0.25, 0.75), weight_decay ~ LogUniform(1e-5, 1e-3).

- Fix Architecture: Use the best-performing architecture from Protocol 4.1.

- Initialize Surrogate Model: Use a Gaussian Process or Tree-structured Parzen Estimator (TPE).

- Iterate (for n=50 trials): a. The surrogate model suggests a hyperparameter set. b. Train EEGNet with the suggested set for a reduced number of epochs (e.g., 100). c. Evaluate on the held-out validation set. d. Update the surrogate model with the (hyperparameters, validation accuracy) result.

- Finalize: Train the model with the best-found set for the full number of epochs.
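
The search space and the 50-trial loop can be illustrated with plain random search, which is a simpler technique than Bayesian optimization: it draws configurations independently instead of using a surrogate model. The sketch below only shows how the log-uniform and uniform ranges are sampled and how trials are recorded; `toy_objective` is a hypothetical stand-in for a short EEGNet training run.

```python
import math
import random

def log_uniform(rng, low, high):
    """Sample so that log(value) is uniform between log(low) and log(high)."""
    return math.exp(rng.uniform(math.log(low), math.log(high)))

def sample_config(rng):
    """Draw one configuration from the protocol's search space."""
    return {
        "learning_rate": log_uniform(rng, 1e-4, 1e-2),
        "dropout_rate": rng.uniform(0.25, 0.75),
        "weight_decay": log_uniform(rng, 1e-5, 1e-3),
    }

def random_search(objective, n_trials=50, seed=0):
    """Evaluate n_trials random configurations and return the best one."""
    rng = random.Random(seed)
    trials = []
    for _ in range(n_trials):
        config = sample_config(rng)
        trials.append((objective(config), config))
    best_score, best_config = max(trials, key=lambda t: t[0])
    return best_config, best_score, trials

def toy_objective(config):
    """Hypothetical placeholder for validation accuracy after a reduced training run."""
    return (-(math.log10(config["learning_rate"]) + 3) ** 2
            - (config["dropout_rate"] - 0.5) ** 2)

best_config, best_score, trials = random_search(toy_objective)
print("best configuration found:", best_config)
```

A library such as Optuna replaces the independent draws with suggestions from a TPE sampler, while the surrounding loop keeps the same shape.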

Protocol 4.3: Evaluating Robustness via Nested Cross-Validation

Objective: Obtain an unbiased estimate of model performance after hyperparameter tuning. Procedure:

- Split the full dataset into K outer folds (e.g., K=5).

- For each outer fold i: a. Hold out fold i as the test set. b. Use the remaining K-1 folds as the optimization dataset. c. Perform Protocols 4.1 & 4.2 (Grid/Bayesian Search) on the optimization dataset using an inner k-fold split (e.g., k=4) to find the best hyperparameters. d. Train a final model on the entire optimization dataset using the best hyperparameters. e. Evaluate this final model on the held-out outer test set (fold i).

- Report: The mean and standard deviation of performance (accuracy, kappa) across all K outer test folds.
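
The index bookkeeping for nested cross-validation is where leakage usually creeps in, so it helps to generate the splits explicitly. The sketch below is a simplified, non-stratified version with hypothetical helper names; for subject-independent evaluation the same logic would be applied to subject IDs rather than trial indices.

```python
import numpy as np

def kfold_indices(n_items, k, rng):
    """Shuffle item indices and split them into k nearly equal folds."""
    return np.array_split(rng.permutation(n_items), k)

def nested_cv_splits(n_trials, k_outer=5, k_inner=4, seed=0):
    """Yield (outer_test, inner_splits); inner_splits is a list of (train, val) pairs."""
    rng = np.random.default_rng(seed)
    outer_folds = kfold_indices(n_trials, k_outer, rng)
    for i, test_idx in enumerate(outer_folds):
        # Everything except outer fold i is available for hyperparameter search
        optim_idx = np.concatenate([f for j, f in enumerate(outer_folds) if j != i])
        inner_folds = np.array_split(rng.permutation(optim_idx), k_inner)
        inner = []
        for m, val_idx in enumerate(inner_folds):
            train_idx = np.concatenate([f for n, f in enumerate(inner_folds) if n != m])
            inner.append((train_idx, val_idx))
        yield test_idx, inner

splits = list(nested_cv_splits(n_trials=200))
for test_idx, inner in splits:
    print(len(test_idx), "test trials;", [(len(tr), len(va)) for tr, va in inner])
```

The outer test indices never appear in any inner training or validation set, which is what keeps the final performance estimate unbiased by the tuning.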

Visualization of Workflows

Hyperparameter Tuning & Validation Workflow

EEGNet Hyperparameter Influence Map

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Toolkit for EEGNet Hyperparameter Tuning Research

| Item / Reagent | Function & Rationale | Example / Specification |

|---|---|---|

| Standardized EEG Dataset | Provides a benchmark for comparing tuning results and ensuring reproducibility. | BCI Competition IV 2a, High-Gamma Dataset. |

| Deep Learning Framework | Enables efficient model definition, training, and hyperparameter search loops. | TensorFlow (>=2.10) / PyTorch (>=1.13) with GPU support. |

| Hyperparameter Optimization Library | Implements advanced search algorithms beyond manual/grid search. | Ray Tune, Optuna, or scikit-optimize. |

| High-Performance Computing (HPC) Cluster/Cloud GPU | Facilitates parallel evaluation of hundreds of hyperparameter sets in feasible time. | NVIDIA V100/A100 GPU, Google Colab Pro+. |

| Model Checkpointing & Logging | Saves model states and records all experimental metadata for traceability. | Weights & Biases (W&B), TensorBoard, MLflow. |

| Statistical Analysis Software | Performs significance testing on results from different hyperparameter sets. | SciPy (Python) or custom scripts for paired t-tests/ANOVA. |

| Data Version Control (DVC) | Tracks exact dataset versions used for each tuning experiment. | DVC (Data Version Control) integrated with Git. |

This document provides detailed application notes and protocols for the training phase of EEGNet, a compact convolutional neural network designed for Brain-Computer Interface (BCI) research. Within the broader thesis on EEGNet implementation, the selection of loss functions, optimizers, and validation strategies is critical for translating raw electroencephalography (EEG) signals into reliable control signals for communication or neuroprosthetic devices. These components directly impact model convergence, generalization to unseen user data, and ultimately, the real-world efficacy of the BCI system, with implications for clinical trials and therapeutic development.

Loss Functions: Theory and Application

Loss functions quantify the discrepancy between the model's predictions and the true labels, guiding the optimization process.

Common Loss Functions for EEG Classification

Cross-Entropy Loss: The standard for multi-class classification (e.g., discriminating between left-hand, right-hand, foot, and tongue motor imagery tasks).

Loss = -Σ_c y_true,c * log(y_pred,c), summed over classes c and averaged over trials

Categorical Hinge Loss: Can sometimes offer better margins for separable classes.

Loss = max(0, 1 + max_{c ≠ true}(y_pred,c) - y_pred,true)
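
Both losses can be written out in NumPy to check values by hand. The categorical hinge here is the multi-class form, max(0, 1 + best wrong-class score - true-class score); the function names and the two toy trials are illustrative.

```python
import numpy as np

def categorical_cross_entropy(y_true, y_prob, eps=1e-12):
    """Mean over trials of -sum_c y_true[c] * log(y_prob[c])."""
    return float(np.mean(-np.sum(y_true * np.log(np.clip(y_prob, eps, 1.0)), axis=1)))

def categorical_hinge(y_true, scores):
    """Mean over trials of max(0, 1 + best wrong-class score - true-class score)."""
    pos = np.sum(y_true * scores, axis=1)                       # score of the true class
    neg = np.max(np.where(y_true == 1, -np.inf, scores), axis=1)  # best wrong class
    return float(np.mean(np.maximum(0.0, 1.0 + neg - pos)))

y_true = np.eye(4)[[0, 2]]                     # one-hot labels for two trials
y_prob = np.array([[0.70, 0.10, 0.10, 0.10],   # confident and correct
                   [0.25, 0.25, 0.25, 0.25]])  # uninformative prediction
ce = categorical_cross_entropy(y_true, y_prob)
hinge = categorical_hinge(y_true, y_prob)
print(f"cross-entropy = {ce:.4f}, categorical hinge = {hinge:.4f}")
```

For these two trials the cross-entropy is (-ln 0.7 - ln 0.25) / 2, about 0.871, and the hinge loss is (0.4 + 1.0) / 2 = 0.7.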

Protocol: Loss Function Comparison Experiment

Objective: To empirically determine the optimal loss function for a 4-class Motor Imagery (MI) EEG dataset using EEGNet.

Materials: BCI Competition IV Dataset 2a, EEGNet model (as per the original paper), computing environment with PyTorch/TensorFlow.

Method:

- Data Preparation: Apply a bandpass filter (4-38 Hz) and epoch trials from -0.5s to 4.0s relative to cue onset. Standardize per channel.

- Model Initialization: Initialize two identical EEGNet models (same architecture and initial weights), one per loss function.

- Training:

- Train Model A with Categorical Cross-Entropy Loss.

- Train Model B with Categorical Hinge Loss.

- Common Constants: Batch size=64, optimizer=Adam (lr=0.001), validation split=0.2, max epochs=300 with early stopping.

- Evaluation: Record final validation accuracy, convergence speed (epochs to reach 95% of final accuracy), and training stability (loss variance).

Table 1: Quantitative Comparison of Loss Functions on MI Data

| Loss Function | Final Val. Accuracy (%) | Epochs to Convergence | Training Stability (σ of last 20 epochs) | Recommended Use Case |

|---|---|---|---|---|

| Categorical Cross-Entropy | 78.3 | 112 | 0.015 | General-purpose, probabilistic outputs. |

| Categorical Hinge Loss | 76.8 | 125 | 0.022 | Tasks requiring maximal class separation. |

Optimizers: Algorithms and Hyperparameter Tuning

Optimizers update model weights to minimize the loss function.

Adam (Adaptive Moment Estimation): Combines ideas from RMSProp and Momentum. Default recommended for EEGNet.

SGD with Nesterov Momentum: Stochastic Gradient Descent with a look-ahead momentum term. Can generalize better if tuned carefully.
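
The way Adam combines a momentum-style running mean of gradients with an RMSProp-style running mean of squared gradients is easiest to see in a few lines of NumPy. This is a didactic sketch on a toy quadratic, not a replacement for the framework optimizers; the learning rate of 0.05 is chosen for the toy problem only.

```python
import numpy as np

def adam_minimize(grad_fn, w0, lr=1e-3, beta1=0.9, beta2=0.999, eps=1e-8, steps=1000):
    """Plain Adam: momentum-style first moment plus RMSProp-style second moment."""
    w = np.asarray(w0, dtype=float).copy()
    m = np.zeros_like(w)
    v = np.zeros_like(w)
    for t in range(1, steps + 1):
        g = grad_fn(w)
        m = beta1 * m + (1 - beta1) * g            # running mean of gradients
        v = beta2 * v + (1 - beta2) * g ** 2       # running mean of squared gradients
        m_hat = m / (1 - beta1 ** t)               # bias correction for the zero init
        v_hat = v / (1 - beta2 ** t)
        w -= lr * m_hat / (np.sqrt(v_hat) + eps)   # per-parameter scaled step
    return w

# Toy objective: f(w) = sum((w - target)^2), with gradient 2 * (w - target)
target = np.array([1.0, -2.0])
w_final = adam_minimize(lambda w: 2 * (w - target), w0=[0.0, 0.0], lr=0.05, steps=2000)
print("final weights:", np.round(w_final, 3))
```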

Protocol: Optimizer Hyperparameter Optimization

Objective: To tune the learning rate (lr) and, for SGD, momentum for optimal performance.

Method:

- Define Search Grid:

- Adam: lr = [1e-2, 1e-3, 1e-4]

- SGD with Nesterov: lr = [1e-2, 1e-3, 5e-4]; momentum = [0.8, 0.9, 0.99]

- Procedure: For each optimizer-hyperparameter combination, train EEGNet on a fixed training subset (60% of total data). Use a separate validation subset (20%) for evaluation.

- Selection Criterion: Choose the configuration yielding the highest validation accuracy after 50 epochs (to limit computational cost).

Table 2: Optimizer Hyperparameter Performance

| Optimizer | Learning Rate | Momentum | Best Val. Acc. @50 epochs (%) |

|---|---|---|---|

| Adam | 1e-3 | N/A | 77.5 |

| Adam | 1e-4 | N/A | 72.1 |

| SGD (Nesterov) | 5e-4 | 0.9 | 76.8 |

| SGD (Nesterov) | 1e-3 | 0.99 | 75.2 |

Validation Strategies for Robust BCI Models

Robust validation is essential to prevent overfitting and estimate real-world performance.

Key Strategies

Subject-Specific vs. Subject-Independent: Critical distinction in BCI. Subject-specific uses data from the same subject for train/validation, while subject-independent validates on unseen subjects.

k-Fold Cross-Validation: Data is split into k folds. The model is trained on k-1 folds and validated on the remaining fold, repeated k times.

Leave-One-Subject-Out (LOSO): The gold-standard for subject-independent evaluation. Each subject's data serves as the test set once, while the model is trained on all other subjects.

Protocol: Implementing LOSO Cross-Validation

Objective: To obtain a realistic estimate of EEGNet's performance on novel, unseen users.

Method:

- Data Organization: Arrange dataset by subject (e.g., N=9 subjects).

- Iteration Loop: For subject i in 1 to N:

- Test Set: All trials from subject i.

- Training Set: All trials from subjects 1, ..., i-1, i+1, ..., N.

- Validation Split: Randomly hold out 15% of the training set for validation and early stopping.

- Training: Train a new EEGNet instance from scratch on the training set.

- Evaluation: Compute accuracy on the held-out test subject i.

- Aggregation: Report mean and standard deviation of accuracy across all N subjects.
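
The LOSO loop can be sketched as a generator over subject IDs. The helper name `loso_splits` and the trial counts (9 subjects with 288 trials each) are illustrative; model training and evaluation would go inside the loop over `folds`.

```python
import numpy as np

def loso_splits(subject_ids, val_fraction=0.15, seed=0):
    """Yield (subject, train_idx, val_idx, test_idx) for leave-one-subject-out CV."""
    rng = np.random.default_rng(seed)
    subject_ids = np.asarray(subject_ids)
    for subject in np.unique(subject_ids):
        test_idx = np.flatnonzero(subject_ids == subject)        # held-out subject
        rest = rng.permutation(np.flatnonzero(subject_ids != subject))
        n_val = int(round(val_fraction * len(rest)))             # random 15% for early stopping
        yield subject, rest[n_val:], rest[:n_val], test_idx

subject_ids = np.repeat(np.arange(1, 10), 288)   # 9 subjects x 288 trials (illustrative)
folds = list(loso_splits(subject_ids))
for subject, train_idx, val_idx, test_idx in folds:
    print(f"S{subject:02d}: train={len(train_idx)}, val={len(val_idx)}, test={len(test_idx)}")
```

Because the validation trials are drawn from the training subjects, early stopping never sees data from the test subject.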

Table 3: LOSO Cross-Validation Results (BCI Competition IV 2a)

| Subject | Test Accuracy (%) | Notes |

|---|---|---|

| S01 | 68.4 | |

| S02 | 51.2 | Low-performance subject. |

| S03 | 88.7 | |

| ... | ... | |

| S09 | 85.1 | |

| Mean ± SD | 76.2 ± 12.4 | Realistic generalization estimate. |

The Scientist's Toolkit: Key Research Reagents & Materials

Table 4: Essential Materials for EEGNet Training & Validation

| Item | Function/Description | Example/Supplier |

|---|---|---|

| Curated EEG Dataset | Benchmarked data for training and comparison. | BCI Competition IV Dataset 2a, OpenBMI. |

| Deep Learning Framework | Provides built-in functions for loss, optimizers, and autograd. | PyTorch, TensorFlow/Keras. |

| High-Performance Computing (HPC) Unit | Accelerates model training through parallel processing. | NVIDIA GPU with CUDA support. |

| Hyperparameter Optimization Library | Automates the search for optimal training parameters. | Optuna, Ray Tune. |

| Experiment Tracking Tool | Logs parameters, metrics, and model artifacts for reproducibility. | Weights & Biases, MLflow. |

| Statistical Analysis Software | Validates performance differences between protocols. | SciPy (Python), JASP. |

Visualized Workflows

Figure: EEGNet Training Loop with Loss & Optimizer

Figure: BCI Validation Strategy Decision Tree

This document provides detailed application notes and protocols for implementing EEGNet, a compact convolutional neural network architecture, within a real-time Brain-Computer Interface (BCI) pipeline. This work is situated within a broader thesis exploring the optimization of deep learning models for efficient, accurate, and low-latency decoding of electroencephalography (EEG) signals. The transition from offline validation to stable online operation presents unique challenges in signal processing, computational efficiency, and system integration, which are addressed herein.

Recent benchmarks (2023-2024) highlight EEGNet's performance on common BCI paradigms. The following table summarizes key metrics from recent implementations.

Table 1: EEGNet Performance on Standard BCI Paradigms (Online/Real-Time Configurations)

| BCI Paradigm | Dataset/Reference | Accuracy (%) | Latency (ms) | Model Size (MB) | Notes |

|---|---|---|---|---|---|

| Motor Imagery (MI) | BCI Competition IV 2a | 78.4 ± 3.1 | 125-150 | ~0.45 | 4-class, subject-dependent training |

| P300 Speller | BCI Competition III, Dataset II | 94.2 ± 1.8 | 300-350* | ~0.42 | *Includes stimulus presentation period |

| Error-Related Potentials (ErrP) | MONITOR dataset (2023) | 86.7 ± 4.5 | < 200 | ~0.40 | Online feedback correction |

| Steady-State VEP (SSVEP) | BETA dataset | 91.5 ± 2.3 | 1500 | ~0.50 | Requires 1.5s data window |

Detailed Experimental Protocols

Protocol 3.1: Real-Time Data Acquisition & Preprocessing Pipeline

Objective: To acquire and preprocess EEG signals with minimal latency for online inference with EEGNet.

Materials: EEG amplifier (e.g., BioSemi ActiveTwo, g.tec Unicorn), conductive gel/saline solution, active electrodes, a computer running acquisition software (e.g., Lab Streaming Layer, OpenViBE).

Procedure:

- Hardware Setup: Position electrodes according to the international 10-20 system. For motor imagery, focus on C3, Cz, C4. For P300, include Fz, Cz, Pz, Oz, and other occipital sites. Ensure electrode impedance is maintained below 10 kΩ.

- Stream Configuration: Configure the amplifier to stream raw data via TCP/UDP or a manufacturer-specific API. Set sampling rate to 128 Hz or 256 Hz as a balance between information content and computational load.

- Preprocessing (Online):

  a. Synchronization: Use a centralized clock (e.g., PtpClock) to align EEG data with stimulus markers.

  b. Filtering: Apply a 4-40 Hz bandpass FIR filter in real time. For P300, use 1-12 Hz. Forward-backward (zero-phase) filtering needs future samples, and applying it to short chunks introduces transients at chunk boundaries; a causal linear-phase FIR filter whose state is carried across chunks avoids this at the cost of a fixed, known delay that can be compensated when aligning markers.

  c. Referencing: Re-reference to the common average reference (CAR), computed across all channels in the running buffer.

  d. Epoching: Upon a trigger event, extract the relevant time window (e.g., 0-800 ms for P300, 0-4000 ms for MI).

  e. Normalization: Apply online z-score normalization using a dynamic mean and standard deviation calculated from a rolling baseline buffer (e.g., last 60 seconds of data).

- Output: The processed epoch is formatted into a tensor of shape (Channels, Timepoints, 1) and passed to the inference engine.
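The filtering, referencing, normalization, and output steps above could look roughly like the following. It is a sketch under stated assumptions, not the pipeline used for the reported results: the class name `OnlinePreprocessor`, the 65-tap filter length, and the per-channel z-scoring are illustrative choices. It deliberately uses a causal single-pass FIR filter with carried-over state rather than forward-backward filtering, because the latter needs future samples. With 65 taps at 128 Hz the filter delays the signal by 32 samples (250 ms), which would have to be accounted for when cutting epochs.

```python
import numpy as np
from scipy.signal import firwin, lfilter


class OnlinePreprocessor:
    """Causal band-pass filtering and CAR on streamed chunks, plus rolling z-scoring."""

    def __init__(self, n_channels, fs=128.0, band=(4.0, 40.0),
                 numtaps=65, baseline_seconds=60.0):
        # Linear-phase FIR band-pass; group delay is (numtaps - 1) / 2 samples.
        self.taps = firwin(numtaps, band, pass_zero=False, fs=fs)
        # Filter state carried between chunks so chunk borders leave no transient.
        self.state = np.zeros((n_channels, numtaps - 1))
        self.max_baseline = int(baseline_seconds * fs)
        self.baseline = np.empty((n_channels, 0))

    def process_chunk(self, chunk):
        """chunk: (channels, samples) raw EEG. Returns filtered, CAR-referenced data."""
        filtered, self.state = lfilter(self.taps, [1.0], chunk,
                                       axis=-1, zi=self.state)
        # Common average reference: subtract the across-channel mean per sample.
        car = filtered - filtered.mean(axis=0, keepdims=True)
        # Keep only the most recent baseline_seconds of processed data.
        self.baseline = np.concatenate(
            [self.baseline, car], axis=1)[:, -self.max_baseline:]
        return car

    def make_epoch(self, epoch):
        """Z-score a (channels, timepoints) epoch against the rolling baseline."""
        if self.baseline.shape[1] == 0:
            raise RuntimeError("no baseline data collected yet")
        mean = self.baseline.mean(axis=1, keepdims=True)
        std = self.baseline.std(axis=1, keepdims=True)
        z = (epoch - mean) / (std + 1e-8)
        # Add the trailing singleton axis: (channels, timepoints, 1).
        return z[..., np.newaxis].astype(np.float32)
```

Because the filter state persists, feeding the stream in 32-sample chunks gives the same output as filtering the whole recording in one call, which is a convenient property to check offline before going online.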

Protocol 3.2: EEGNet Model Conversion & Optimization for Real-Time Inference

Objective: To convert a trained EEGNet model (typically from TensorFlow/PyTorch) into a format suitable for low-latency, real-time prediction. Procedure:

- Model Quantization: Apply post-training dynamic range quantization (TensorFlow Lite) or integer quantization (PyTorch Mobile) to reduce model size and accelerate inference. This typically reduces model size by 75% with minimal accuracy loss (<1%).

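- Platform-Specific Compilation: For edge deployment on a Linux-class board (e.g., a Raspberry Pi 4), run the quantized model with the standard TensorFlow Lite interpreter or with ONNX Runtime using ARM NN acceleration. TensorFlow Lite for Microcontrollers is intended for bare-metal microcontroller targets rather than boards of this class.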

- Inference Engine Integration: Embed the optimized model into a C++/Python inference loop. Critical steps include:

  a. Pre-allocate input and output tensors.

  b. Implement a double-buffering system: while one epoch is being preprocessed, the previous one is being classified.

  c. Set thread affinity to a dedicated CPU core to minimize jitter.

- Validation: Test the deployed model's inference time on the target hardware, ensuring it is less than 50% of the epoch length to maintain pipeline stability.
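The validation step above can be checked with a small timing harness. This is a hedged sketch: `latency_report` is a hypothetical helper, `infer` stands in for whatever callable wraps the deployed model, and using the 99th percentile rather than the mean as the pass criterion is an assumption chosen to make the check sensitive to jitter.

```python
import statistics
import time


def latency_report(infer, sample, epoch_seconds, n_warmup=10, n_runs=200):
    """Time repeated calls to infer(sample) and compare against the 50% budget."""
    # Warm-up calls so one-off costs (caches, lazy allocation) are not timed.
    for _ in range(n_warmup):
        infer(sample)
    times = []
    for _ in range(n_runs):
        start = time.perf_counter()
        infer(sample)
        times.append(time.perf_counter() - start)
    times.sort()
    p99 = times[min(len(times) - 1, int(0.99 * len(times)))]
    budget = 0.5 * epoch_seconds  # inference must use under half the epoch length
    return {
        "median_s": statistics.median(times),
        "p99_s": p99,
        "budget_s": budget,
        "within_budget": p99 < budget,
    }
```

Running this on the target hardware, with the same thread affinity and background load as the online session, gives a more honest estimate than timing on a development workstation.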

Protocol 3.3: Closed-Loop BCI Feedback Experiment

Objective: To validate the integrated EEGNet pipeline in a closed-loop, real-time BCI task (e.g., a motor imagery-based cursor control or P300 speller).

Materials: Integrated BCI system, visual feedback display, participant consent forms.

Procedure:

- Calibration (15 mins): Run a standard offline calibration session (e.g., 40 trials per class for MI) to collect user-specific data. Train or fine-tune EEGNet on this data.

- Model Integration (5 mins): Load the trained and optimized model into the real-time pipeline per Protocol 3.2.

- Online Session (20 mins):

  a. Present the participant with a task (e.g., "Imagine left hand movement to move cursor left").

  b. Initiate trials with an auditory/visual cue.

  c. The system acquires EEG, processes it via Protocol 3.1, runs inference through EEGNet, and generates a command (e.g., "left").

  d. Provide immediate visual feedback (e.g., cursor movement) based on the command.

  e. Log all data, including raw EEG, triggers, preprocessing outputs, model prediction, confidence score, and feedback timing.

- Metrics: Calculate online accuracy, information transfer rate (ITR in bits/min), and feedback delay. Monitor system stability (no crashes, missed buffers).
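For the ITR metric, a common choice is the Wolpaw definition, in which the bits per selection are log2(N) + P·log2(P) + (1 − P)·log2((1 − P)/(N − 1)) for N classes and accuracy P, scaled by the number of selections per minute. The sketch below assumes that definition; the function name `wolpaw_itr` is hypothetical, and returning zero at or below chance level is a convention adopted here, not something the protocol prescribes.

```python
import math


def wolpaw_itr(n_classes, accuracy, trial_seconds):
    """Information transfer rate in bits/min under the Wolpaw definition."""
    if n_classes < 2:
        raise ValueError("n_classes must be at least 2")
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must lie in [0, 1]")
    if trial_seconds <= 0:
        raise ValueError("trial_seconds must be positive")
    # Convention (assumption): report zero at or below chance level.
    if accuracy <= 1.0 / n_classes:
        return 0.0
    bits = math.log2(n_classes)
    if accuracy < 1.0:
        bits += accuracy * math.log2(accuracy)
        bits += (1.0 - accuracy) * math.log2((1.0 - accuracy) / (n_classes - 1))
    # Selections per minute times bits per selection.
    return bits * 60.0 / trial_seconds
```

`trial_seconds` should cover the full time per selection, including cue, data window, and feedback, otherwise the ITR is overstated.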

Visualizations of Workflows and Architectures

Figure: Real-Time EEGNet BCI Pipeline Flow

Figure: EEGNet Model Architecture for Real-Time BCI

The Scientist's Toolkit: Research Reagent Solutions & Essential Materials

Table 2: Essential Materials for Real-Time EEGNet BCI Implementation

| Item Name & Example | Function in Pipeline | Key Specifications for Real-Time Use |

|---|---|---|

| High-Density EEG Amplifier (e.g., BioSemi ActiveTwo, g.tec g.HIamp) | Converts analog scalp potentials to digitized signals. | High input impedance (>1 GΩ), low noise (<1 µV), stable TCP/IP or USB stream with < 2 ms latency. |

| Active Electrodes (e.g., BioSemi ActivePin, g.tec g.SCARABEO) | Amplifies signal at the source, reducing motion artifact and environmental noise. | Integrated pre-amplifier, Ag-AgCl sintered surface, compatible with cap systems. |

| Conductive Gel/ Paste (e.g., SignaGel, SuperVisc) | Ensures stable, low-impedance electrical connection between electrode and scalp. | High chloride concentration, low viscosity for injection, long-duration stability (>8 hours). |

| Lab Streaming Layer (LSL) Framework | Synchronized, centralized streaming of time-series data (EEG) and event markers. | Must support C++, Python, and MATLAB bindings for integrating amplifier and stimulus software. |

| Model Optimization Toolkit (e.g., TensorFlow Lite, ONNX Runtime) | Converts trained EEGNet models to lightweight formats for fast inference on CPU/edge devices. | Support for INT8 quantization, operator compatibility with 1D/2D convolutions, and cross-compilation. |

| Stimulus Presentation Software (e.g., Psychtoolbox, OpenSesame, Presentation) | Presents visual/auditory cues with millisecond precision and sends event markers to the EEG stream. | Sub-millisecond timing accuracy, robust sync with LSL or parallel port triggers. |

| Dedicated Processing Computer | Runs the real-time preprocessing, inference, and feedback logic. | Multi-core CPU (e.g., Intel i7), minimal background processes, real-time OS kernel (or Linux with PREEMPT_RT). |

Solving Common EEGNet Implementation Challenges: Overfitting, Data Scarcity, and Poor Performance

Within EEGNet-based Brain-Computer Interface (BCI) research, the challenge of overfitting is paramount due to the high-dimensional, low-sample-size nature of EEG datasets. This document details application notes and experimental protocols for implementing three core regularization techniques—Dropout, Batch Normalization, and Data Augmentation—specifically within the EEGNet architecture for improved model generalization in BCI applications.

Quantitative Comparison of Regularization Techniques

Table 1: Comparative Efficacy of Regularization Techniques in EEGNet (Summarized from Recent Studies)

| Technique | Avg. Test Accuracy Increase (%) (vs. Baseline) | Avg. Reduction in Train-Test Accuracy Gap (%) | Computational Overhead | Recommended EEGNet Layer |

|---|---|---|---|---|

| Dropout (p=0.5) | 4.7 ± 1.2 | 15.3 ± 3.1 | Low | After Depthwise & Separable Conv |

| Batch Normalization | 5.9 ± 1.5 | 12.8 ± 2.8 | Moderate | Before Activation in each Conv block |

| Data Augmentation (Spectral) | 6.3 ± 1.8 | 18.5 ± 4.2 | High (Offline) | Applied to raw input signals |

| Combined (All Three) | 8.1 ± 2.1 | 22.7 ± 5.3 | High | Network-wide |

Experimental Protocols

Protocol 3.1: Implementing & Tuning Dropout in EEGNet

Objective: To determine the optimal dropout rate for the dense classification head of EEGNet to prevent co-adaptation of features.

- Initialization: Train a baseline EEGNet model (e.g., for MI or ERP classification) on your preprocessed dataset (e.g., BCI Competition IV 2a).

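- Dropout Insertion: Insert an additional Dropout layer after the final Flatten layer and before the Dense output layer. The standard EEGNet architecture already applies dropout at the end of each convolutional block; keep those rates fixed so the sweep isolates the effect of the new layer.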

- Rate Sweep: Train separate models with dropout rates p = [0.2, 0.3, 0.4, 0.5, 0.6, 0.7]. Use a fixed random seed for reproducibility.

- Evaluation: Train each model for 150 epochs and record the final test accuracy and the difference between final training and test accuracy. Use 5-fold cross-validation.

- Analysis: Select the rate that maximizes test accuracy while minimizing the train-test accuracy gap. Plot accuracy curves to visualize regularization effect.
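The analysis step leaves open how to trade test accuracy against the train-test gap. One possible rule, shown here purely as an assumption, is to shortlist every rate whose test accuracy is within a small tolerance of the best one and then pick the shortlisted rate with the smallest gap. The function name `select_dropout_rate` and the default tolerance of one percentage point are hypothetical.

```python
def select_dropout_rate(results, tolerance=1.0):
    """Pick a dropout rate from cross-validated results.

    results maps rate -> (mean_train_acc, mean_test_acc), both in percent.
    Rates within `tolerance` points of the best test accuracy are shortlisted;
    among those, the smallest train-test gap wins, with higher test accuracy
    as the tie-breaker.
    """
    if not results:
        raise ValueError("results is empty")
    best_test = max(test for _, test in results.values())
    shortlist = {
        rate: (train, test)
        for rate, (train, test) in results.items()
        if best_test - test <= tolerance
    }
    return min(
        shortlist,
        key=lambda rate: (shortlist[rate][0] - shortlist[rate][1],
                          -shortlist[rate][1]),
    )
```

Setting `tolerance=0.0` reduces the rule to plain test-accuracy maximization, which makes it easy to see how much the gap criterion changes the choice.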

Protocol 3.2: Integrating Batch Normalization (BN) for Internal Covariate Shift

Objective: To stabilize and accelerate training of EEGNet by normalizing layer inputs.

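- Architecture Modification: Place a Batch Normalization layer immediately after each Convolutional layer (both Depthwise and Separable) and before the ELU activation function in the EEGNet pipeline. The reference EEGNet design already includes these layers, so for a custom implementation first verify that none have been omitted.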

- Training Adjustment: BN often tolerates larger learning rates, so try raising the initial learning rate (e.g., from 1e-3 to 1e-2) and keep the change only if the validation loss remains stable. Use a learning rate scheduler.

- Mini-Batch Consideration: Ensure mini-batch size is sufficient (≥ 32 trials if possible). Small batches can lead to inaccurate batch statistics.

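- Evaluation: Monitor the number of epochs needed to converge and the smoothness of the validation loss curve compared to the baseline. BN adds computation per epoch, so any speed-up comes from needing fewer epochs, not from shorter ones. Record the time to reach 95% of final accuracy.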
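The "time to reach 95% of final accuracy" measure can be computed from a logged validation-accuracy curve. The sketch below counts epochs rather than seconds and takes the final accuracy as the mean of the last few epochs to reduce noise; both choices, and the name `epochs_to_fraction`, are assumptions for illustration.

```python
def epochs_to_fraction(val_acc, fraction=0.95, final_window=5):
    """Return the 1-based epoch at which accuracy first reaches
    `fraction` of the final accuracy (mean of the last `final_window` epochs)."""
    if not val_acc:
        raise ValueError("val_acc is empty")
    tail = val_acc[-final_window:]
    final_acc = sum(tail) / len(tail)
    target = fraction * final_acc
    for epoch, acc in enumerate(val_acc, start=1):
        if acc >= target:
            return epoch
    # Not reached for non-negative accuracies, kept as a safe fallback.
    return len(val_acc)
```

Multiplying the returned epoch count by the measured seconds per epoch converts it to wall-clock time, which is the fairer comparison when BN changes the cost of each epoch.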

Protocol 3.3: EEG-Specific Data Augmentation Pipeline

Objective: To artificially expand the training dataset using physiologically plausible transformations of EEG signals.

- Augmentation Suite Selection: Implement the following augmentations in a sequential pipeline:

  - Temporal Warping: Randomly stretch/squeeze signal segments within a factor of 0.8-1.2.

  - Gaussian Noise: Add noise with zero mean and SD = 0.1 * (signal SD).

  - Spectral Dropout (F-Channels): Randomly set to zero 1-3 frequency channels in the time-frequency representation.

  - Channel Dropout: Randomly set to zero 1-2 EEG channels across all time points.

- Online Application: Apply augmentations stochastically on the fly during training (e.g., using TensorFlow's tf.data or PyTorch's torchvision.transforms). Each epoch sees a slightly different dataset.

- Validation: Ensure augmented samples are excluded from validation and test sets. The original, clean signals must be used for final model evaluation.

- Quantification: Report the effective virtual dataset size and the performance gain on held-out test subjects.
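Three of the four augmentations above can be sketched in plain NumPy on a single trial of shape (channels, timepoints). All function names are hypothetical. The warping shown is one simple interpretation: the first half of the trial is stretched or squeezed by a random factor and the second half compensates, so the trial keeps its length. Spectral dropout is omitted because it depends on the chosen time-frequency representation.

```python
import numpy as np


def time_warp(x, rng, low=0.8, high=1.2):
    """Stretch the first half by a random factor; the second half compensates."""
    n = x.shape[-1]
    factor = rng.uniform(low, high)
    mid = (n - 1) / 2.0
    t_out = np.arange(n, dtype=float)
    # Piecewise-linear, monotone map from output time to source time.
    t_src = np.interp(t_out, [0.0, mid * factor, n - 1.0], [0.0, mid, n - 1.0])
    return np.stack([np.interp(t_src, t_out, channel) for channel in x])


def add_gaussian_noise(x, rng, rel_sd=0.1):
    """Add zero-mean noise with SD equal to rel_sd times each channel's SD."""
    sd = rel_sd * x.std(axis=-1, keepdims=True)
    return x + rng.standard_normal(x.shape) * sd


def channel_dropout(x, rng, max_drop=2):
    """Zero out between 1 and max_drop randomly chosen channels."""
    out = x.copy()
    n_drop = rng.integers(1, max_drop + 1)
    dropped = rng.choice(x.shape[0], size=n_drop, replace=False)
    out[dropped] = 0.0
    return out


def augment(x, rng, p=0.5):
    """Apply each augmentation in sequence, each with probability p."""
    for transform in (time_warp, add_gaussian_noise, channel_dropout):
        if rng.random() < p:
            x = transform(x, rng)
    return x
```

Calling `augment` inside the training data loader only, with a seeded `np.random.default_rng`, keeps validation and test trials untouched and makes runs repeatable.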

Visualizations

Figure: EEGNet Regularization Workflow

Figure: Diagnosing & Mitigating Overfitting Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Materials for EEGNet Regularization Experiments

| Item/Category | Function in Experiment | Example/Specification |

|---|---|---|

| Standardized EEG Dataset | Provides a benchmark for comparing regularization efficacy. | BCI Competition IV Dataset 2a (Motor Imagery), High-Gamma Dataset. |