Deep Learning for Brain Volumetry in Contrast-Enhanced MRI: Methods, Validation, and Clinical Applications

Abstract

This article explores the application of deep learning (DL) for brain volumetry using contrast-enhanced MRI (CE-MRI), a significant area of interest for neuroscience research and drug development. It covers the foundational principles of extracting volumetric data from CE-MRI, a resource often underutilized due to technical heterogeneity. We delve into specific methodological approaches, including segmentation tools like SynthSeg+ and novel architectures for predicting contrast-equivalent information from non-contrast scans. The content addresses critical troubleshooting aspects, such as mitigating hallucinations and false positives in DL models, and performance optimization. Finally, it provides a comprehensive validation and comparative analysis of different DL techniques against traditional methods and ground truth, evaluating their reliability and clinical applicability. This synthesis aims to equip researchers and drug development professionals with a clear understanding of the current landscape, challenges, and future potential of DL-based brain volumetry.

The Foundation of Deep Learning Volumetry in Contrast-Enhanced MRI

Quantitative Evidence of Underutilization

Clinical guidelines increasingly support contrast-enhanced magnetic resonance imaging (CE-MRI) for various indications, but its adoption in clinical and research practice remains inconsistent. The data below summarize the key evidence of this underutilization.

Table 1: Evidence of CE-MRI Underutilization in Medical Practice

| Evidence Aspect | Quantitative Finding | Context & Source |

|---|---|---|

| Supplemental Breast MRI in High-Risk Women | Only 6.6% (158/2403) of high-lifetime-risk women received supplemental breast MRI screening within a 2-year window despite 43.9% attending a facility with on-site availability [1]. | Cross-sectional study of 422,406 screening mammograms across 86 U.S. facilities (2018 data) [1]. |

| Geographic Variability of CMR Access | 16 CMR centers per million U.S. Medicare beneficiaries, with state density ranging from 52.6 (MN, highest) to 4.4 (ME, lowest) per million [2]. | U.S. national analysis based on 2018 Medicare claims data [2]. |

| High-Volume Center Proficiency | 53% (59/112) of surveyed CMR centers were high-volume (>500 scans/year) in 2019, with these centers averaging 19 years of experience, compared to 3.5 years for low-volume centers [2]. | Society for Cardiovascular Magnetic Resonance (SCMR) survey data from 2017-2019 [2]. |

Core Principles and Protocol for DCE-MRI

Dynamic Contrast-Enhanced MRI (DCE-MRI) is a key CE-MRI technique that enables the quantitative assessment of tissue vascularity, permeability, and blood flow by tracking the kinetics of an injected contrast agent [3] [4].

Fundamental Physics and Mechanism of Action

- Contrast Agent Effect: Gadolinium-based contrast agents (GBCAs) are paramagnetic. They shorten the T1 (longitudinal) relaxation time of nearby water protons, resulting in increased signal intensity on T1-weighted images [5] [3].

- Blood-Brain Barrier (BBB) Leakage: In a healthy brain, the intact BBB prevents GBCAs from leaking into the brain tissue. In many neurological disorders, BBB disruption allows GBCAs to extravasate into the extravascular extracellular space (EES), causing visible enhancement and enabling permeability quantification [4].
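The T1-shortening effect described above is commonly summarized by a linear relaxivity relation. As a sketch, with symbols introduced here for illustration rather than taken from the text: $T_{1,0}$ is the native (pre-contrast) T1, $r_1$ is the longitudinal relaxivity of the agent, and $C$ is the local agent concentration.

```latex
\frac{1}{T_1} = \frac{1}{T_{1,0}} + r_1 \, C
```

Under this approximation, a higher local concentration gives a shorter T1 and therefore a brighter signal on T1-weighted images, which is why regions with BBB leakage enhance.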

Detailed DCE-MRI Acquisition Protocol

The following workflow outlines the standard procedure for acquiring DCE-MRI data.

DCE-MRI Data Analysis and Pharmacokinetic Modeling

Quantitative analysis uses pharmacokinetic (PK) models to convert signal intensity changes into physiological parameters [3] [4].

Table 2: Key Pharmacokinetic Parameters in DCE-MRI

| Parameter | Physiological Meaning | Interpretation |

|---|---|---|

| Ktrans (volume transfer constant) | Permeability-surface area product per unit volume of tissue [4]. | High Ktrans indicates increased vascular permeability or blood flow, common in tumors with angiogenesis [4]. |

| Ve (extravascular extracellular volume) | Fractional volume of the extravascular extracellular space (EES) [4]. | Represents the fractional volume of the EES [4]. |

| kep (rate constant) | Flux rate constant between EES and blood plasma (kep = Ktrans/Ve) [4]. | Reflects the washout rate of the contrast agent from the EES back to the bloodstream [4]. |

| Vp (plasma volume) | Fractional blood plasma volume [4]. | Represents the fractional volume of blood plasma in the tissue [4]. |
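To make the relationship between these parameters concrete, the following is a minimal sketch of the extended Tofts forward model, which combines the plasma term (Vp) with the leakage term governed by Ktrans and kep = Ktrans/Ve. The function name, the toy arterial input function, and the parameter values are assumptions chosen for illustration; uniform time sampling and a simple rectangle-rule convolution are also assumed.

```python
import numpy as np

def extended_tofts(t, cp, ktrans, ve, vp):
    """Tissue concentration under the extended Tofts model (illustrative).

    t      : uniformly spaced time points in minutes
    cp     : plasma concentration (arterial input function) at each time point
    ktrans : volume transfer constant in 1/min
    ve, vp : fractional EES and plasma volumes
    """
    t = np.asarray(t, dtype=float)
    cp = np.asarray(cp, dtype=float)
    dt = t[1] - t[0]
    kep = ktrans / ve  # washout rate constant, as defined in Table 2
    kernel = np.exp(-kep * t)
    # Discrete convolution approximates the integral of cp(tau) * exp(-kep * (t - tau))
    leakage = np.convolve(cp, kernel)[: t.size] * dt
    return vp * cp + ktrans * leakage

# Hypothetical toy input function and parameter values
t = np.linspace(0.0, 5.0, 301)
cp = 5.0 * t * np.exp(-2.0 * t)
ct = extended_tofts(t, cp, ktrans=0.1, ve=0.2, vp=0.02)
```

In practice the inverse problem is solved: Ktrans, Ve, and Vp are estimated by fitting a curve of this form to the measured concentration in each voxel.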

Application Notes & Experimental Protocols

Application in CNS Drug Development

CE-MRI, particularly DCE-MRI, provides objective, quantitative biomarkers crucial for central nervous system (CNS) drug development [6].

- Phase I Trials: DCE-MRI can demonstrate CNS penetration and confirm target engagement for drugs that may not be amenable to positron emission tomography (PET) imaging [6].

- Phase II/III Trials: The technique can differentiate objective measures of drug efficacy from placebo response, a major challenge in CNS trials. It helps identify responders versus non-responders and can provide insights into disease modification [6].

Sample Experimental Protocol: Evaluating Anti-Angiogenic Therapy in Glioblastoma

- Subject Preparation: Animal model or human patient with a confirmed glioma.

- Baseline DCE-MRI: Perform DCE-MRI following the "Detailed DCE-MRI Acquisition Protocol" section.

- Therapy Administration: Administer the anti-angiogenic drug candidate.

- Follow-up DCE-MRI: Repeat the DCE-MRI at predetermined time points (e.g., 2 weeks, 4 weeks).

- Data Analysis: Calculate Ktrans, Ve, and Vp maps. A successful therapeutic response is indicated by a significant decrease in Ktrans values in the tumor region, reflecting reduced vascular permeability and angiogenesis [4].
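The data analysis step above can be sketched as a comparison of the median Ktrans inside the tumor region before and after therapy. The function name and the synthetic maps are hypothetical, and the sketch assumes the baseline and follow-up maps are already co-registered; it reports a percent change only and does not test statistical significance.

```python
import numpy as np

def median_ktrans_change_pct(baseline_map, followup_map, tumor_mask):
    """Percent change in median tumor Ktrans from baseline to follow-up."""
    baseline = np.median(np.asarray(baseline_map, dtype=float)[tumor_mask])
    followup = np.median(np.asarray(followup_map, dtype=float)[tumor_mask])
    return 100.0 * (followup - baseline) / baseline

# Hypothetical 2D example: Ktrans (1/min) halves inside the tumor mask
baseline_map = np.full((4, 4), 0.20)
followup_map = np.full((4, 4), 0.10)
tumor_mask = np.zeros((4, 4), dtype=bool)
tumor_mask[1:3, 1:3] = True
change = median_ktrans_change_pct(baseline_map, followup_map, tumor_mask)
```

A negative value indicates a drop in Ktrans; whether that drop is meaningful depends on the repeatability of the measurement and on the study's statistical design.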

Deep Learning for Contrast Enhancement and Volumetry

Emerging deep learning (DL) techniques aim to overcome CE-MRI limitations, such as the need for gadolinium and long acquisition times [7] [8].

- Gadolinium-Free Contrast Mapping: DL models can generate synthetic contrast-enhanced maps or estimate cerebral blood volume (CBV) from a single non-contrast T1-weighted scan, avoiding gadolinium administration and its associated risks [7].

- Rapid, Automated Volumetry: DL enables fully automatic segmentation of brain volumes from MRI, even in challenging preclinical settings. Paired with a shortened acquisition protocol, this cuts scan time (e.g., from >12 minutes to ~4.3 minutes for mouse brain volumetry) and supports robust, high-throughput analysis for longitudinal studies [8].

Sample Experimental Protocol: Deep Learning-Based Brain Volumetry in Neurodegeneration

- Data Acquisition: Acquire high-resolution T2-weighted images (or other modalities) according to the optimized, fast protocol enabled by DL reconstruction [8].

- Preprocessing: Perform skull-stripping and image registration to a standard atlas space using automated DL tools [8].

- Semantic Segmentation: Input preprocessed images into a trained neural network (e.g., 3D CNN, Mamba-based model) for voxel-wise classification [7] [8].

- Volumetric Calculation: Compute the total brain and sub-region (e.g., hippocampus, caudate putamen) volumes from the segmentation masks [8].

- Longitudinal Analysis: Track volume changes over time to assess disease progression or treatment effects in models of Alzheimer's disease, multiple sclerosis, etc. [8].
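The volumetric calculation step reduces to counting voxels per label and multiplying by the voxel volume. The sketch below assumes the segmentation is available as an integer label map; the label IDs and region names are hypothetical, and the voxel size is the 78 × 78 × 250 μm resolution quoted for the mouse protocol, expressed in millimetres.

```python
import numpy as np

def region_volumes_mm3(label_map, voxel_size_mm, label_names):
    """Volume of each labeled region: voxel count times single-voxel volume."""
    voxel_volume = float(np.prod(voxel_size_mm))
    labels, counts = np.unique(label_map, return_counts=True)
    counts_by_label = dict(zip(labels.tolist(), counts.tolist()))
    return {
        name: counts_by_label.get(label, 0) * voxel_volume
        for label, name in label_names.items()
    }

# Hypothetical label IDs; 0 is treated as background and ignored
label_names = {1: "hippocampus", 2: "caudate_putamen", 3: "cerebellum"}
segmentation = np.zeros((8, 8, 8), dtype=int)
segmentation[:2] = 1
segmentation[4, :4, :4] = 2
volumes = region_volumes_mm3(segmentation, (0.078, 0.078, 0.25), label_names)
```

Regions absent from the label map are reported with a volume of zero rather than being dropped, which keeps longitudinal tables aligned across time points.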

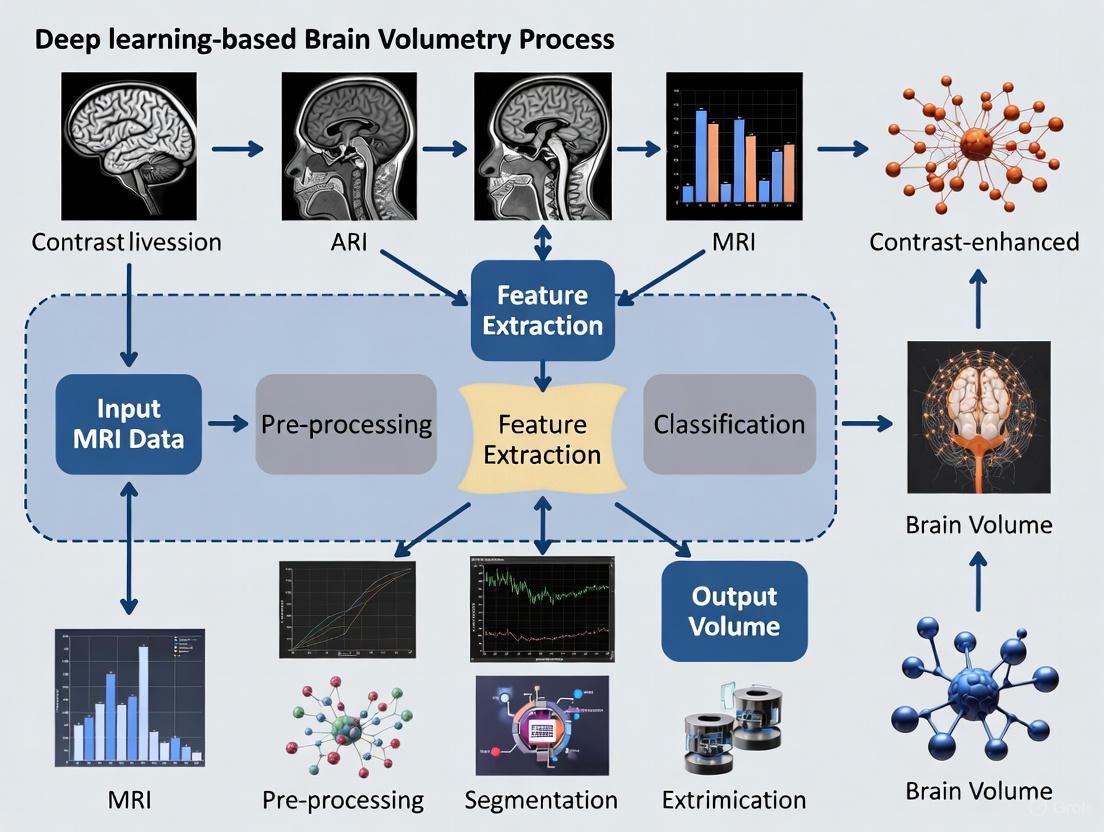

The diagram below illustrates this integrated deep learning workflow.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for CE-MRI Research

| Item | Function/Description | Key Considerations |

|---|---|---|

| Gadolinium-Based Contrast Agent (GBCA) | Pharmaceutical that shortens T1 relaxation time to create image contrast [5] [3]. | Choose between linear/macrocyclic and ionic/non-ionic types based on conditional stability and NSF risk profile [5]. Macrocyclic agents generally have higher stability [5]. |

| Power Injector | Ensures a precise, rapid, and reproducible intravenous bolus injection of the GBCA. | Critical for consistent DCE-MRI data acquisition and reliable pharmacokinetic modeling [3] [4]. |

| Pharmacokinetic Modeling Software | Software that fits PK models (e.g., Extended Tofts, Patlak) to dynamic data to compute parameters like Ktrans and Ve [4]. | Model selection is crucial; the Patlak model is often recommended for subtle BBB leakage in neurodegeneration [4]. |

| Deep Learning Segmentation Framework | A trained neural network (e.g., 3D U-Net) for automated, voxel-wise labeling of brain anatomical regions [8]. | Dramatically increases analysis throughput and reproducibility for brain volumetry studies compared to manual segmentation [8]. |

| Reference Region Atlas | A standardized template with pre-defined anatomical boundaries for different brain regions. | Essential for registration-based segmentation and for validating the accuracy of automated DL segmentation methods [8]. |

Technical heterogeneity in Magnetic Resonance Imaging (MRI) presents a fundamental challenge for the development and deployment of robust deep learning (DL) tools in neuroscience research and drug development. Variability across scanners, vendors, acquisition protocols, and sites introduces confounding noise that can obscure biological signals, compromise biomarker validity, and reduce the generalizability of predictive models. For DL-based brain volumetry and contrast-enhanced MRI research, this heterogeneity directly impacts measurement reproducibility, potentially leading to inaccurate assessment of therapeutic efficacy in clinical trials. The "Cycle of Quality" framework emphasizes that addressing these technical confounds is not merely a preprocessing step but an essential, integrated process spanning from acquisition to analysis [9]. This Application Note provides detailed protocols and analytical frameworks to identify, quantify, and mitigate these sources of variation, enabling more reliable and reproducible DL-driven biomarkers.

Technical heterogeneity manifests across multiple dimensions of the MRI acquisition pipeline. The tables below summarize key sources of variability and their documented impact on quantitative outcomes.

Table 1: Primary Sources of Technical Heterogeneity in MRI Acquisition

| Source Category | Specific Parameters | Impact on Quantitative MRI |

|---|---|---|

| Hardware | Scanner Manufacturer & Model, Magnetic Field Strength (e.g., 3T vs. 7T), RF Coil Type (Conventional vs. Cryogenic) | Affects fundamental signal-to-noise ratio (SNR), spatial resolution, and geometric distortion [8] [9]. |

| Sequence Protocol | Pulse Sequence Type (SE, GRE, RARE), Timing Parameters (TR, TE), Flip Angle, Voxel Dimensions | Directly influences image contrast, SNR, and the relationship between signal intensity and underlying tissue properties (e.g., T1, T2) [10]. |

| Reconstruction & Processing | Reconstruction Algorithm, Use of Parallel Imaging, Post-processing Filters, Vendor-specific Software | Introduces variation in noise texture, sharpness, and can create artifacts that may be learned by DL models as false features [9]. |

| Phantom & Calibration | Phantom Composition, Calibration Schedule, Quality Control Procedures | Leads to scanner-specific drifts in quantitative values over time, affecting longitudinal study reliability [9]. |

Table 2: Documented Performance of Deep Learning Models Under Heterogeneous Conditions

| DL Application | Model Input/Strategy | Key Metric | Performance Outcome | Context of Heterogeneity |

|---|---|---|---|---|

| CBV Map Synthesis [11] | Single-modal non-contrast scan | N/A (Qualitative Validation) | Identified functional abnormalities in aging and Alzheimer's disease brains. | Trained on quantitative steady-state contrast-enhanced MRI to overcome variability in radiological scans. |

| Mouse Brain Volumetry [8] | T2-weighted images (4.3 min acquisition) | Reproducibility in Healthy Mice | Reliable quantification of hippocampus, caudate putamen, and cerebellum volumes. | High spatial resolution (78x78x250 μm³) challenge at 7T; DL enabled fast, consistent segmentation. |

| Gadolinium-Free CE-MRI [12] | NC-MRI (T2w, DWI, Pre-contrast T1w) | Sensitivity/Specificity for HCC | 0.866 / 0.922 (vs. 0.899 / 0.925 for conventional CE-MRI) | Model generalized across three institutions, synthesizing multiple contrast phases from non-contrast inputs. |

| Synthetic Post-Contrast T1 [11] | Multi-parametric MRI (T1w, T2w, FLAIR, DWI, SWI) | PSNR / SSIM | 22.967 ± 1.162 / 0.872 ± 0.031 (BayesUNet) | Comprehensive input protocol designed to capture diverse tissue contrasts for robust synthesis. |

Experimental Protocols for Harmonization and Validation

Protocol 1: Prospective Multi-Scanner Harmonization

This protocol outlines a framework for standardizing acquisition across multiple sites or scanners, a critical step for multi-center clinical trials.

A. Pre-Study Calibration and Phantom Imaging

- Objective: To establish a baseline for signal and geometric fidelity across all scanners in the network.

- Materials: Standardized imaging phantom (e.g., ADNI phantom or custom design with known relaxation properties and geometric features).

- Methodology:

- Protocol Translation: Convert the core research sequence (e.g., T1-weighted 3D MPRAGE) into vendor-specific implementations (e.g., "BRAVO" on GE, "MPRAGE" on Siemens, "3D T1 FFE" on Philips) while keeping core parameters (TR, TE, TI, resolution) as consistent as possible.

- Phantom Imaging: Perform weekly phantom scans on each participating scanner using the translated protocols over a one-month stability period.

- Metric Extraction: Analyze phantom data to quantify:

- Signal-to-Fluctuation-Noise Ratio (SFNR): For temporal stability.

- Geometric Distortion: Measure known distances in the phantom versus acquired images.

- Intensity Uniformity: Profile signal variation across the field-of-view.

- Validation: Inter-scanner coefficient of variation (CoV) for all extracted metrics should be <5% before proceeding to in-vivo imaging.
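The validation criterion above can be checked with a few lines of code. This is a minimal sketch, assuming one summary value per scanner for each phantom metric and using the sample standard deviation; the metric names and values are hypothetical.

```python
import statistics

def cov_percent(values):
    """Coefficient of variation (%) using the sample standard deviation."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)

def check_scanner_agreement(metrics_by_name, threshold_pct=5.0):
    """Return per-metric CoV and whether every metric is under the threshold."""
    cov = {name: cov_percent(vals) for name, vals in metrics_by_name.items()}
    return cov, all(value < threshold_pct for value in cov.values())

# Hypothetical phantom metrics, one value per participating scanner
phantom_metrics = {
    "sfnr": [210.0, 205.0, 215.0],
    "distortion_mm": [0.50, 0.52, 0.49],
    "uniformity_pct": [92.0, 93.5, 91.0],
}
cov_by_metric, ready_for_in_vivo = check_scanner_agreement(phantom_metrics)
```

Reporting the per-metric values alongside the pass/fail flag makes it easier to see which scanner property needs recalibration when the check fails.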

B. In-Vivo Traveling Subject Study

- Objective: To quantify inter-scanner variability on biological measurements.

- Methodology:

- Recruit a small cohort (n=3-5) of "traveling subjects" who can be scanned on all participating scanners within a short time window (e.g., 2 weeks).

- Acquire the full imaging protocol on each subject at each site.

- Process data through a centralized, standardized pipeline for brain extraction, tissue segmentation (GM, WM, CSF), and regional volumetry (e.g., of the hippocampus).

- Analysis: Calculate the intra-class correlation coefficient (ICC) and CoV for key volumetric outputs (e.g., total brain volume, hippocampal volume) across scanners. An ICC > 0.9 is considered excellent for multi-center studies.
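The protocol does not state which ICC form to use. The sketch below assumes ICC(2,1), the two-way random-effects, absolute-agreement, single-measurement form, which is a common choice when scanners are treated as a sample of possible scanners. The volumes are hypothetical.

```python
import numpy as np

def icc_2_1(measurements):
    """ICC(2,1) for an array of shape (n_subjects, k_scanners)."""
    x = np.asarray(measurements, dtype=float)
    n, k = x.shape
    grand_mean = x.mean()
    # Sums of squares for subjects (rows), scanners (columns), and residual
    ss_rows = k * np.sum((x.mean(axis=1) - grand_mean) ** 2)
    ss_cols = n * np.sum((x.mean(axis=0) - grand_mean) ** 2)
    ss_total = np.sum((x - grand_mean) ** 2)
    ss_error = ss_total - ss_rows - ss_cols
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )

# Hypothetical hippocampal volumes (mm^3): 4 traveling subjects x 3 scanners
volumes = [
    [3510.0, 3498.0, 3521.0],
    [4102.0, 4110.0, 4095.0],
    [3788.0, 3801.0, 3779.0],
    [3955.0, 3948.0, 3962.0],
]
icc = icc_2_1(volumes)
```

Because this form measures absolute agreement, a constant offset between scanners lowers the ICC even when subjects are ranked identically on every scanner.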

Protocol 2: Retrospective Harmonization using Deep Learning

For existing datasets where prospective harmonization was not feasible, this protocol uses DL to mitigate site effects.

A. Data Preprocessing and Feature Extraction

- Objective: To prepare heterogeneous data for harmonization.

- Methodology:

- Standardized Preprocessing: Apply a consistent pipeline to all datasets, including N4 bias field correction, intensity-based brain extraction, and affine registration to a standard template (e.g., MNI space).

- Feature Extraction: For volumetry tasks, use a pre-trained DL segmentation model (e.g., a U-Net variant) to generate regional volume maps. For intensity-based tasks, extract features from the normalized native space images.

B. Harmonization Model Training (ComBat-GAN)

- Objective: To remove technical site/scanner effects while preserving biological variance.

- Methodology:

- Apply Statistical Harmonization: Use a validated method like ComBat (or its Bayesian extension) to remove site effects from the extracted features or volumetric maps. This model adjusts for location and scale (mean and variance) differences between sites.

- Train Generative Adversarial Network (GAN): To synthesize harmonized images directly, train a CycleGAN or similar architecture. The model learns a mapping between images from different sites, effectively translating a scan from "Scanner A style" to "Scanner B style" or to a common harmonized "style".

- Input: Paired (or unpaired) patches from different scanners/sites.

- Generator Loss: Combination of adversarial loss and cycle-consistency loss.

- Output: Harmonized image patches that retain subject-specific biology but exhibit consistent image properties.

- Validation: Demonstrate that a DL classifier can no longer predict the scanner source from the harmonized data with accuracy above chance, while performance on a biological task (e.g., patient vs. control classification) is maintained or improved.
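The location-and-scale idea behind statistical harmonization can be illustrated with a deliberately simplified sketch. This is not ComBat itself: it omits the empirical Bayes shrinkage and the covariate model that ComBat uses to protect biological variance, so any biological difference confounded with site would also be removed. The function name and data are hypothetical, and each site is assumed to have at least two samples with non-zero variance.

```python
import numpy as np

def location_scale_harmonize(features, site_labels):
    """Align each site's per-feature mean and spread to the pooled values."""
    features = np.asarray(features, dtype=float)
    site_labels = np.asarray(site_labels)
    pooled_mean = features.mean(axis=0)
    pooled_std = features.std(axis=0, ddof=1)
    harmonized = np.empty_like(features)
    for site in np.unique(site_labels):
        rows = site_labels == site
        site_mean = features[rows].mean(axis=0)
        site_std = features[rows].std(axis=0, ddof=1)
        # Standardize within site, then map onto the pooled location and scale
        standardized = (features[rows] - site_mean) / site_std
        harmonized[rows] = standardized * pooled_std + pooled_mean
    return harmonized

# Hypothetical single-feature example with a large offset between two sites
features = np.array([[1.0], [2.0], [3.0], [11.0], [12.0], [13.0]])
sites = np.array(["A", "A", "A", "B", "B", "B"])
harmonized = location_scale_harmonize(features, sites)
```

For real studies, a validated ComBat implementation with age, sex, and diagnosis as covariates is the safer choice; the sketch only shows what "adjusting mean and variance per site" means mechanically.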

Visualization of Workflows and Logical Frameworks

The Quality Cycle in MRI Research

This diagram illustrates the integrated, cyclical process required to achieve and maintain quality in multi-site MRI research, from initial concept to disseminated results.

Technical Validation Pathway for DL Volumetry

This workflow outlines the specific steps for technically validating a deep learning brain volumetry pipeline against a ground truth reference method.

The Scientist's Toolkit: Key Research Reagents & Materials

Table 3: Essential Resources for Managing MRI Heterogeneity in DL Research

| Tool Category | Specific Tool / Reagent | Function & Utility | Key Considerations |

|---|---|---|---|

| Reference Phantoms | ADNI Phantom; Custom QMRI Phantoms | Provides a stable ground truth for scanner calibration and longitudinal monitoring of scanner drift [9]. | Phantoms should mimic tissue relaxation properties (T1, T2) and be MRI-safe for the long term. |

| Standardized Atlases | Mouse Brain ATLAS (e.g., from [8]); Human Brain Templates (MNI, ICBM) | Serves as a common coordinate system for spatial normalization and inter-subject registration, crucial for comparative analysis. | Atlas choice should be appropriate for the study population (e.g., age, species, disease). |

| Harmonization Software | ComBat (and its variants); Pulseq [9] | ComBat: Statistically removes batch effects from extracted features. Pulseq: Enables vendor-neutral, reproducible sequence programming. | ComBat requires careful modeling to avoid removing biological signal. Pulseq needs vendor approval/installation. |

| Deep Learning Frameworks | U-Net Architectures; Stable Diffusion Models [12]; Generative Adversarial Networks (GANs) | U-Net: Gold-standard for segmentation tasks. GANs: Used for data augmentation [14] and image harmonization. Stable Diffusion: Can synthesize contrast-enhanced images from non-contrast inputs [12]. | Models must be trained on diverse, well-characterized datasets to ensure generalizability. |

| Validation Datasets | Traveling Human / Mouse Data; Multi-site Public Databases (e.g., ADNI) | Provides the "ground truth" for inter-scanner variability, allowing for quantitative assessment of harmonization methods. | Traveling subject studies are the gold standard but are resource-intensive to execute. |

Technical heterogeneity in MRI is a formidable but manageable challenge. Through the systematic application of prospective harmonization protocols, robust retrospective data cleaning techniques, and the strategic use of deep learning models designed for domain adaptation, researchers can extract reliable, reproducible quantitative biomarkers. The frameworks and toolkits provided here offer a concrete pathway for achieving this goal. As the field moves forward, embracing the "Cycle of Quality" and embedding these practices into the core of research and drug development workflows will be paramount for translating promising DL-based imaging biomarkers into validated tools for clinical trials and patient care.

Brain morphometry, the quantitative study of brain structure, is a critical neuroimaging biomarker for diagnosing and monitoring neurological and neurodegenerative diseases. In clinical practice, a significant portion of magnetic resonance imaging (MRI) examinations include contrast-enhanced (CE-MR) sequences, primarily for improving pathological lesion detection. However, the reliability of CE-MR scans for quantitative morphometric analysis has remained uncertain due to potential signal alteration from gadolinium-based contrast agents. This application note examines the physics basis underlying this question and demonstrates, through quantitative evidence and protocol details, that advanced deep learning methods now enable reliable morphometry from CE-MR scans, thereby expanding the potential dataset for neuroscience research and drug development.

Quantitative Evidence: Comparative Performance of Segmentation Tools

Table 1: Reliability of Volumetric Measurements Between CE-MR and NC-MR Scans [15] [16]

| Brain Structure | Segmentation Tool | Intraclass Correlation Coefficient (ICC) | Notes |

|---|---|---|---|

| Most Brain Regions | SynthSeg+ | > 0.90 | Demonstrates high reliability for most structures |

| Larger Brain Structures | SynthSeg+ | > 0.94 | Even stronger agreement in larger volumes |

| Brain Stem | SynthSeg+ | > 0.90 (lowest, but robust) | Shows the lowest, yet still high, correlation |

| Global Gray Matter | CAT12 | ICC = 0.87 | Good agreement |

| Hippocampus | CAT12 | ICC = 0.57 | Poor agreement |

| Amygdala | CAT12 | ICC = 0.45 | Poor agreement |

| CSF & Ventricular Volumes | SynthSeg+ | Discrepancies noted | Systematic differences observed |

Table 2: Scan-Rescan Reliability of Volumetric Tools Across Multiple Scanners [17]

| Software Solution | Median CV for GM Volume | Median CV for WM Volume | Median CV for Total Brain Volume | Performance Category |

|---|---|---|---|---|

| AssemblyNet | < 0.2% | < 0.2% | 0.09% | High Reliability |

| AIRAscore | < 0.2% | < 0.2% | 0.09% | High Reliability |

| FastSurfer | > 0.2% | < 0.2% | > 0.2% | Moderate Reliability |

| FreeSurfer | > 0.2% | > 0.2% | > 0.2% | Moderate Reliability |

| SPM12 | > 0.2% | > 0.2% | > 0.2% | Moderate Reliability |

| syngo.via | > 0.2% | > 0.2% | > 0.2% | Moderate Reliability |

| Vol2Brain | > 0.2% | > 0.2% | > 0.2% | Moderate Reliability |

The quantitative evidence confirms that with appropriate tool selection, CE-MR scans can reliably support morphometry. The deep learning-based tool SynthSeg+ demonstrates exceptionally high consistency between CE-MR and non-contrast MR (NC-MR) scans, with Intraclass Correlation Coefficients (ICCs) exceeding 0.90 for most brain structures [15] [16]. In contrast, traditional tools like CAT12 show inconsistent performance, with poor reliability for smaller structures like the hippocampus and amygdala (ICC < 0.60) [15] [16].

For longitudinal studies, scan-rescan reliability is paramount. Recent multi-scanner assessments reveal that modern AI-based tools AssemblyNet and AIRAscore achieve superior precision, with median coefficients of variation (CV) for gray matter (GM), white matter (WM), and total brain volume all below 0.2% [17]. The study found that the choice of software has a stronger effect on measurement variance than the scanner hardware itself [17].

Experimental Protocols for Validating CE-MR Morphometry

Core Experimental Workflow

The following diagram illustrates the key steps for a validation experiment comparing morphometric measurements from CE-MR and NC-MR scans.

Detailed Methodology

1. Participant Cohort & Image Acquisition:

- Cohort: A typical study should include clinically normal participants across a wide age range (e.g., 21-73 years) to assess generalizability. A sample size of approximately 60 subjects provides robust power for reliability analysis [15] [16].

- MRI Protocol: Acquire paired T1-weighted NC-MR and CE-MR scans for each participant in a single session. For CE-MR, use a standard gadolinium-based contrast agent (e.g., Gd-DTPA) at a dose of 0.25 mL/kg, injected via the cubital vein at 1 mL/s, followed by a 3-minute delay before scanning [18]. Ensure consistent sequence parameters (e.g., 3D FFE, matrix = 256 × 256, 1 mm isotropic voxels) between the two scan types [18].

2. Image Preprocessing Pipeline:

- Resampling: Standardize the spatial resolution of all MRI scans to a uniform isotropic voxel size (e.g., 0.833 mm³) to minimize variability [18].

- Skull Stripping: Remove non-brain tissues using an advanced, robust tool like SynthStrip to reduce computational overhead and focus analysis on brain tissue [18].

- Intensity Normalization: Apply Z-score normalization to standardize voxel intensities across subjects, mitigating inter-scanner and inter-subject variability [18].

- Quality Control: Implement an automated verification step to ensure alignment between MRI scans and segmentation masks. Visually inspect intensity histograms to ensure consistency [18].

3. Segmentation and Volumetric Analysis:

- Tool Selection: Employ a combination of segmentation tools for comparison, prioritizing deep learning-based methods (e.g., SynthSeg+, AssemblyNet) known for their robustness to contrast variation [15] [16] [17].

- Execution: Process all NC-MR and CE-MR scans through the selected pipelines to extract volumetric measurements for key brain structures (global GM, WM, ventricles, and subcortical nuclei).

4. Statistical Validation:

- Reliability Analysis: Calculate Intraclass Correlation Coefficients (ICCs) between measurements from CE-MR and NC-MR scans for all segmented structures. ICC values > 0.90 are considered indicative of high reliability [15] [16].

- Scan-Rescan Variability: For studies across multiple scanners, calculate the percentage Coefficient of Variation (%CV) to assess the precision of each software tool. A lower CV indicates higher reliability [17].

- Bland-Altman Plots: Use these plots to visualize the agreement between CE-MR and NC-MR measurements and identify any systematic biases [17].

- Downstream Validation: Build age prediction models or correlate volumetric measures with clinical severity scores (e.g., WAB-R for aphasia) using both CE-MR and NC-MR-derived data. Comparable model performance further validates the utility of CE-MR scans [15] [19].
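The Bland-Altman step above rests on two numbers: the mean difference (bias) and the 95% limits of agreement, conventionally taken as bias ± 1.96 standard deviations of the paired differences. A minimal sketch follows, with hypothetical volumes and function name.

```python
import statistics

def bland_altman_stats(ce_volumes, nc_volumes):
    """Bias and 95% limits of agreement for paired CE-MR and NC-MR volumes."""
    diffs = [ce - nc for ce, nc in zip(ce_volumes, nc_volumes)]
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)
    return {
        "bias": bias,
        "loa_lower": bias - 1.96 * sd,
        "loa_upper": bias + 1.96 * sd,
        # Pairwise means form the x-axis of the Bland-Altman plot
        "pair_means": [(ce + nc) / 2 for ce, nc in zip(ce_volumes, nc_volumes)],
    }

# Hypothetical hippocampal volumes (mm^3) from paired scans of five subjects
ce = [3520.0, 4090.0, 3795.0, 3960.0, 3680.0]
nc = [3510.0, 4102.0, 3788.0, 3955.0, 3671.0]
stats = bland_altman_stats(ce, nc)
```

A bias clearly different from zero points to a systematic offset, such as the CSF and ventricular discrepancies noted in Table 1, whereas wide limits of agreement indicate poor precision.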

The Scientist's Toolkit: Essential Research Reagents & Software

Table 3: Key Software Solutions for Deep Learning-Based Morphometry

| Tool Name | Type/Function | Key Application in CE-MR Research |

|---|---|---|

| SynthSeg+ | Deep learning-based segmentation tool | Robust brain volume segmentation from both CE-MR and NC-MR scans; high ICC reliability [15] [16]. |

| 3D U-Net (with ResNet-34) | Convolutional Neural Network architecture | Volumetric medical image segmentation (e.g., for brain metastases); uses patch-based training [18]. |

| AssemblyNet | AI-based volumetric tool | Provides high scan-rescan reliability (CV < 0.2%) for GM, WM, and total brain volume [17]. |

| AIRAscore | Certified medical device software | Demonstrates high precision in longitudinal volumetry (CV < 0.2%) across different scanners [17]. |

| FreeSurfer | Established morphometry pipeline | Widely used for generating silver-standard ground truth; provides comprehensive cortical and subcortical metrics [20]. |

| CAT12 | SPM-based segmentation toolbox | Traditional tool for voxel-based morphometry; shows inconsistent performance on CE-MR scans [15] [16]. |

The physics basis for utilizing CE-MR scans in morphometry is robust when supported by modern deep learning methodologies. Evidence confirms that advanced tools like SynthSeg+, AssemblyNet, and AIRAscore can mitigate the effects of contrast agent, enabling reliable volumetric measurements from clinically acquired CE-MR images. This breakthrough significantly expands the potential pool of data for large-scale neuroimaging research and drug development trials by allowing the quantitative use of vast existing clinical datasets. For conclusive results, researchers must adhere to standardized protocols, prioritize deep learning-based segmentation tools, and maintain consistency in scanner-software combinations throughout their studies. Future work will focus on refining models to reduce remaining discrepancies in CSF and ventricular volumes and further validating these approaches in specific patient populations.

Deep Learning's Role in Learning Non-Linear Mappings for Volumetry

Deep learning (DL) has emerged as a transformative technology for brain volumetry, primarily through its capacity to learn complex, non-linear mappings from medical images to quantitative volumetric outputs. These mappings enable the direct estimation of brain structure volumes from input data, bypassing traditional intermediate steps that often require manual intervention or simplified linear models. The non-linear nature of deep neural networks allows them to capture intricate relationships within image data that conventional algorithms might miss, leading to more accurate and robust volumetry across diverse patient populations and imaging protocols. This capability is particularly valuable in neurodegeneration research and drug development, where precise measurement of brain structures serves as a critical biomarker for disease progression and therapeutic efficacy [21].

DL models learn these mappings through a training process where network parameters are iteratively adjusted to minimize the difference between predicted volumetric outputs and known ground-truth labels. This process allows the models to identify and leverage subtle patterns in the imaging data, such as textural variations and shape descriptors, that correlate with anatomical boundaries. For clinical researchers and drug development professionals, this technology offers two significant advantages: the automation of labor-intensive manual segmentation processes and the ability to extract additional quantitative information from standard clinical scans that would otherwise require specialized acquisition protocols or contrast agents [11] [22].
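The text does not name the loss used to measure the "difference" between prediction and ground truth. One widely used choice for segmentation is the soft Dice loss, sketched here as an assumption for a single binary structure; the function name and the smoothing constant are illustrative.

```python
import numpy as np

def soft_dice_loss(pred_probs, target_mask, eps=1e-6):
    """1 - Dice overlap between predicted probabilities and a binary mask."""
    p = np.asarray(pred_probs, dtype=float).ravel()
    g = np.asarray(target_mask, dtype=float).ravel()
    intersection = np.sum(p * g)
    # eps keeps the ratio defined when both prediction and mask are empty
    return 1.0 - (2.0 * intersection + eps) / (np.sum(p) + np.sum(g) + eps)

# Hypothetical voxel-wise probabilities against a ground-truth mask
target = np.array([1, 1, 0, 0])
good_prediction = np.array([0.9, 0.8, 0.1, 0.0])
poor_prediction = np.array([0.1, 0.2, 0.9, 0.8])
loss_good = soft_dice_loss(good_prediction, target)
loss_poor = soft_dice_loss(poor_prediction, target)
```

During training, an optimizer adjusts the network weights to lower this value, so the better-overlapping prediction yields the smaller loss.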

Key Applications and Experimental Data

Table 1: Performance Metrics of Deep Learning Volumetry Applications

| Application Area | Key Metric | Performance Value | Reference/Model |

|---|---|---|---|

| MRI Acceleration | Scan Time Reduction | About 4.3x faster (1 min 10 s vs. 4 min 59 s) | DL-Speed [23] |

| MRI Acceleration | Total GM Volume Correlation | r = 0.99 (p < 0.001) | DL-Speed [23] |

| MRI Acceleration | Hippocampal Occupancy Score | 0.68 ± 0.17 (no significant difference) | SubtleMR + NeuroQuant [22] |

| Contrast-Free CBV Mapping | Peak Signal-to-Noise Ratio | 22.967 ± 1.162 | BayesUNet [11] |

| Contrast-Free CBV Mapping | Structural Similarity Index | 0.872 ± 0.031 | BayesUNet [11] |

| DCE-MRI Analysis | Computational Time Reduction | 17 s vs. 15 min | CNNCON [24] |

| DCE-MRI Analysis | Ktrans MAE | (111 ± 70) × 10⁻⁵ min⁻¹ | CNNCON [24] |

| CT Volumetry | Dementia vs Control Differentiation | High accuracy (comparable to MRI) | U-Net [25] |
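For readers unfamiliar with the peak signal-to-noise ratio listed in the table, the sketch below shows the usual definition, 10·log10(range² / MSE), reported in decibels. It assumes the data range is taken from the reference image unless supplied, which may differ from the convention used in the cited work; the arrays are hypothetical.

```python
import numpy as np

def psnr_db(reference, synthetic, data_range=None):
    """Peak signal-to-noise ratio in dB between a reference and a synthetic image."""
    reference = np.asarray(reference, dtype=float)
    synthetic = np.asarray(synthetic, dtype=float)
    if data_range is None:
        data_range = reference.max() - reference.min()
    mse = np.mean((reference - synthetic) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return 10.0 * np.log10(data_range ** 2 / mse)

# Hypothetical intensity-normalized images in the range [0, 1]
reference = np.array([[0.0, 1.0], [0.5, 0.5]])
synthetic = np.array([[0.1, 0.9], [0.6, 0.4]])
value = psnr_db(reference, synthetic)
```

PSNR depends on the chosen data range and normalization, so values are only comparable between studies that use the same convention.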

Protocol Acceleration and Contrast Agent Elimination

A primary application of non-linear mapping in volumetry is the significant acceleration of MRI acquisition protocols. Watanabe et al. demonstrated that a deep learning-based reconstruction technique (DL-Speed) enables approximately one-minute 3D T1-weighted imaging, reducing scan times from nearly five minutes to just over one minute while preserving quantitative integrity for morphometric analysis [23]. This acceleration directly addresses patient motion artifacts, with the DL-Speed protocol showing significantly reduced head motion (Total Vector Change: 52.3 ± 9.4 mm vs. 140.4 ± 32.8 mm, p < 0.001) while maintaining acceptable image quality for cortical thickness and gray matter volume measurements [23]. Independent validation of FDA-cleared DL software (SubtleMR) confirmed that 2x faster scan times produce hippocampal occupancy scores and volumetric measures with no statistical difference from standard protocols, demonstrating strong generalizability across five different 3T scanners [22].

Beyond acceleration, DL has enabled the complete elimination of gadolinium-based contrast agents (GBCAs) from certain functional imaging protocols. Liu et al. developed a deep learning model that maps single-modal non-contrast MRI scans to synthetic cerebral blood volume (CBV) maps, effectively substituting for GBCAs in identifying functional abnormalities in aging and Alzheimer's disease brains [11]. This approach addresses rising safety concerns regarding gadolinium retention in patients' bodies while leveraging the more readily available non-contrast MRI scans from databases like the Alzheimer's Disease Neuroimaging Initiative (ADNI) [11]. The model was first trained and optimized in mice before being transferred and adapted to humans, demonstrating the cross-species applicability of the learned non-linear mappings [11].

Cross-Modality Volumetry and Preclinical Applications

Deep learning has also enabled accurate brain volumetry from computed tomography (CT) scans, despite their traditionally lower soft-tissue contrast compared to MRI. A study analyzing 917 CT and 744 MR scans from the Gothenburg H70 Birth Cohort developed a U-Net model that segments gray matter, white matter, and cerebrospinal fluid directly from cranial CT images [25]. The resulting CT-based volumetric measures (CTVMs) differentiated cognitively healthy individuals from dementia and prodromal dementia patients with accuracy levels comparable to MR-based measures and showed significant associations with cognitive tests and biochemical markers of neurodegeneration [25]. This approach makes quantitative volumetry accessible in settings where MRI is unavailable or contraindicated.

In preclinical research, DL-based volumetry facilitates high-throughput longitudinal studies in mouse models of neurodegeneration. A recently developed approach utilizes a deep-learning segmentation pipeline to quantify total brain and sub-region volumes (hippocampus, caudate putamen, cerebellum) from T2-weighted images acquired in just 4.3 minutes at 7 Tesla [26]. This dramatic reduction in acquisition time enhances animal welfare (adhering to 3R principles) while enabling reliable tracking of neurodegenerative processes in models of amyotrophic lateral sclerosis, cuprizone-induced demyelination, and multiple sclerosis [26]. The robust automatic segmentation validates the transferability of non-linear mapping approaches across species.

Detailed Experimental Protocols

Protocol for Contrast-Free CBV Mapping

Table 2: Key Research Reagents and Solutions

| Item Name | Function/Application |

|---|---|

| Quantitative steady-state contrast-enhanced MRI datasets | Training data for deep learning model to learn CBV mapping [11] |

| Non-contrast MRI scans (T1-weighted) | Input data for trained model to generate synthetic CBV maps [11] |

| Alzheimer's Disease Neuroimaging Initiative (ADNI) data | Validation dataset for model performance in Alzheimer's disease [11] |

| 3D patch-based Mamba model | Deep learning architecture for estimating cerebral blood volume [7] |

Objective: To generate synthetic cerebral blood volume (CBV) maps from single-modal non-contrast MRI scans, eliminating the need for gadolinium-based contrast agents [11].

Experimental Workflow:

Data Preparation:

- Collect a large-scale dataset of paired quantitative steady-state contrast-enhanced structural MRI and non-contrast MRI scans.

- Ensure consistent scaling across subjects to minimize inter-subject variability, as quantitative maps preserve scaling with respect to post-contrast images.

- Divide data into training, validation, and test sets, ensuring no subject overlap between sets.
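The subject-level split described above can be sketched as follows. This is a minimal illustration, not the procedure used in [11]: the 8:1:1 fractions, the `split_by_subject` helper, and the scan identifiers are all assumptions made for the example.

```python
import random
from collections import defaultdict


def split_by_subject(scan_to_subject, fractions=(0.8, 0.1, 0.1), seed=0):
    """Assign whole subjects (not individual scans) to train/val/test."""
    subjects = sorted(set(scan_to_subject.values()))
    random.Random(seed).shuffle(subjects)  # reproducible shuffle
    n = len(subjects)
    n_train = int(round(fractions[0] * n))
    n_val = int(round(fractions[1] * n))
    groups = {
        "train": set(subjects[:n_train]),
        "val": set(subjects[n_train:n_train + n_val]),
        "test": set(subjects[n_train + n_val:]),
    }
    # Every scan follows its subject, so no subject appears in two sets.
    split = defaultdict(list)
    for scan, subject in sorted(scan_to_subject.items()):
        for name, members in groups.items():
            if subject in members:
                split[name].append(scan)
    return dict(split), groups


# Hypothetical data: 10 subjects with 2 scans each.
scans = {f"sub{i:02d}_ses{j}": f"sub{i:02d}" for i in range(10) for j in (1, 2)}
split, groups = split_by_subject(scans)
```

Splitting on subject identifiers rather than scan identifiers is what prevents repeat scans of one person from leaking between training and test data.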

Model Training:

- Implement a 3D patch-based Mamba model or similar architecture specifically designed for this volumetric mapping task [7].

- Train the model to learn the non-linear mapping from non-contrast T1-weighted input images to contrast-equivalent CBV output maps.

- Use quantitative CBV maps derived from actual contrast-enhanced scans as the ground truth during supervised training.

- Optimize model parameters by minimizing a loss function that combines voxel-wise error and perceptual similarity metrics.

Validation:

- Apply the trained model to held-out test datasets of non-contrast scans from aging and Alzheimer's disease brains.

- Quantitatively compare the synthetic CBV maps against ground truth CBV maps from contrast-enhanced scans using metrics like Peak Signal-to-Noise Ratio (PSNR) and Structural Similarity Index (SSIM) [11].

- Validate the clinical utility of synthetic maps by testing their ability to identify known functional abnormalities in the hippocampal formation and other regions affected in Alzheimer's disease [11].
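As an illustration of the quantitative comparison step, the sketch below computes PSNR over flattened voxel lists using the standard definition, 10·log10(range² / MSE). The `psnr` helper and the toy voxel values are assumptions for the example; SSIM is omitted because it requires windowed local statistics and is usually taken from an imaging library.

```python
import math


def psnr(reference, estimate, data_range):
    """Peak signal-to-noise ratio in dB over flattened voxel lists."""
    if len(reference) != len(estimate):
        raise ValueError("inputs must have the same number of voxels")
    mse = sum((r - e) ** 2 for r, e in zip(reference, estimate)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(data_range ** 2 / mse)


# Toy values standing in for ground-truth and synthetic CBV voxels.
truth = [0.0, 0.5, 1.0, 0.25]
synthetic = [0.1, 0.4, 0.9, 0.35]
value = psnr(truth, synthetic, data_range=1.0)  # about 20 dB for these values
```

Because PSNR depends on the chosen `data_range`, values are only comparable across studies that normalize intensities the same way.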

Protocol for Accelerated MRI with DL Reconstruction

Objective: To achieve diagnostically acceptable 3D T1-weighted MRI scans in approximately one minute using deep learning reconstruction, enabling volumetry with significantly reduced motion artifacts [23].

Experimental Workflow:

Image Acquisition:

- Acquire 3D MPRAGE sequences with substantial acceleration factors (ranging from 2 to 16-fold) by reducing phase encodes, cutting scan times from approximately 5 minutes to about 1 minute.

- Simultaneously acquire standard (non-accelerated) 3D T1-weighted images for reference.

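Deep Learning Processing:

- Reconstruct the accelerated acquisitions with the deep learning reconstruction software under evaluation (e.g., DL-Speed [23] or SubtleMR [22]) before volumetric analysis.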

Volumetric Analysis and Validation:

- Process both the DL-reconstructed accelerated images and standard reference images through FDA-cleared volumetric software (e.g., NeuroQuant) to obtain automated measurements of hippocampal volume, superior and inferior lateral ventricles, and hippocampal occupancy scores [22].

- Assess quantitative agreement between accelerated and standard protocols using linear regression, paired t-tests, and Bland-Altman analysis.

- Evaluate clinical utility by testing the ability of accelerated protocol measurements to differentiate between cognitively normal, mild cognitive impairment, and Alzheimer's disease subjects.
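The Bland-Altman part of the agreement analysis can be sketched as below. This is a minimal example under stated assumptions: the volumes are invented, and the limits of agreement use the conventional bias ± 1.96 SD of the paired differences.

```python
import statistics


def bland_altman(standard, accelerated):
    """Return the bias and the lower/upper 95% limits of agreement."""
    diffs = [a - s for s, a in zip(standard, accelerated)]
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)  # sample standard deviation of the differences
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


# Hypothetical hippocampal volumes in cm^3 from the two protocols.
standard = [3.10, 2.85, 3.40, 2.60, 3.00]
accelerated = [3.12, 2.80, 3.43, 2.62, 2.98]
bias, lower, upper = bland_altman(standard, accelerated)
```

A bias near zero with narrow limits suggests the accelerated protocol can substitute for the standard one; whether the limits are acceptable is a clinical judgment rather than a statistical one.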

Deep learning-based brain volumetry using contrast-enhanced magnetic resonance imaging (MRI) is increasingly used in neuroscience and pharmaceutical research. It quantifies brain structures precisely, providing biomarkers for diagnosing neurodegenerative diseases such as Alzheimer's disease (AD), tracking aging-related changes, and evaluating therapeutic efficacy in drug development pipelines. Convolutional neural networks (CNNs) and transformer-based architectures can extract relevant features from complex neuroimaging data, supporting early detection and intervention strategies for age-related cognitive decline [27]. These methods let researchers move beyond qualitative assessment to objective, reproducible measurements of brain integrity, which supports both the study of brain health across the lifespan and the development of neuroprotective treatments.

Quantitative Performance of Deep Learning Volumetry in Alzheimer's Disease

Deep learning models for brain MRI analysis have demonstrated increasingly sophisticated performance in classifying Alzheimer's disease and its prodromal stages. The following table summarizes key quantitative results from recent studies, highlighting the efficacy of various architectural approaches.

Table 1: Performance Metrics of Deep Learning Models in Alzheimer's Disease Classification from MRI

| Study Focus | Dataset | Model Architecture | Accuracy (%) | Precision (%) | Sensitivity (%) | F1-Score (%) |

|---|---|---|---|---|---|---|

| Alzheimer's Disease Classification [28] | OASIS-1 | 2D DenseNet-121 + Multi-head Transformer Encoder | 91.67 | 100.00 | 85.71 | 92.31 |

| Alzheimer's Disease Classification [28] | OASIS-2 | 3D DenseNet + Self-Attention Blocks | 97.33 | 97.33 | 97.33 | 98.51 |

| Early AD Detection [27] | Multi-modal Neuroimaging | Convolutional Neural Networks (CNNs) | >90 | - | - | - |

These results underscore several critical trends. First, hybrid architectures that combine CNNs with attention mechanisms (e.g., transformers) achieve superior performance, particularly on longitudinal datasets like OASIS-2, by effectively capturing both local features and global contextual relationships in volumetric brain data [28]. Second, the integration of multiple imaging modalities (MRI, PET, fMRI) further enhances diagnostic accuracy for early detection, surpassing the capabilities of single-modality approaches [27]. The high precision and sensitivity metrics indicate strong potential for deploying these models in clinical trial settings where accurate patient stratification and subtle change detection are paramount.
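For readers checking the table, the F1-score for a single class is the harmonic mean of precision and sensitivity. The short sketch below applies that standard formula to the OASIS-1 row; the `f1_score` helper is written for this example only.

```python
def f1_score(precision, sensitivity):
    """Harmonic mean of precision and sensitivity (same units as the inputs)."""
    if precision + sensitivity == 0:
        return 0.0
    return 2 * precision * sensitivity / (precision + sensitivity)


# OASIS-1 row: precision 100.00%, sensitivity 85.71%.
oasis1_f1 = f1_score(100.00, 85.71)  # rounds to the 92.31% listed in the table
```

By the same formula, equal precision and sensitivity of 97.33% would give an F1-score of 97.33%, so the 98.51% listed for OASIS-2 presumably reflects a different averaging scheme (for example per-class or weighted averaging); the source does not specify which.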

Experimental Protocols for Brain Volumetry in Preclinical and Clinical Research

Protocol 1: Human Alzheimer's Disease Classification using Hybrid Deep Learning

Application: Differentiating Alzheimer's disease stages from normal aging in human subjects.

Materials:

- Input Data: T1-weighted structural MRI scans from the OASIS-1 (cross-sectional) and OASIS-2 (longitudinal) datasets.

- Computational Resources: GPU-accelerated computing environment (e.g., NVIDIA Tesla series), Python with deep learning frameworks (PyTorch/TensorFlow).

Methodology:

Data Preprocessing:

- Perform skull stripping, intensity normalization, and affine registration to standard template (e.g., MNI space).

- For 2D approaches: Reformat 3D volumes into sequential axial slices.

- For 3D approaches: Process full volumetric data with isotropic resampling.

Data Augmentation:

- Apply random rotations (±10°), horizontal flipping, and CutMix regularization to improve model generalization.

- Utilize label smoothing and dropout layers to prevent overfitting, particularly given class imbalance in AD datasets [28].

Model Architecture & Training:

- For Cross-sectional Data (OASIS-1): Implement a 2D DenseNet-121 backbone for slice-level feature extraction, followed by a lightweight multi-head transformer encoder to model global dependencies across slices [28].

- For Longitudinal Data (OASIS-2): Employ a 3D DenseNet structure augmented with self-attention blocks to capture spatio-temporal features across multiple time points [28].

- Loss Function: Use cross-entropy loss with class weighting for imbalance.

- Optimization: Train with Adam optimizer, initial learning rate of 1e-4, reduced by factor of 10 on validation loss plateau.
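The learning-rate rule in the optimization step can be illustrated with a framework-free sketch. The `PlateauSchedule` class, the patience value, and the loss sequence are assumptions made for the example; in PyTorch the equivalent behavior is typically obtained with `torch.optim.lr_scheduler.ReduceLROnPlateau`.

```python
class PlateauSchedule:
    """Divide the learning rate by `factor` when validation loss stops improving."""

    def __init__(self, lr=1e-4, factor=10.0, patience=5):
        self.lr, self.factor, self.patience = lr, factor, patience
        self.best = float("inf")
        self.stale_epochs = 0

    def step(self, val_loss):
        if val_loss < self.best:
            # Improvement: remember it and reset the counter.
            self.best, self.stale_epochs = val_loss, 0
        else:
            self.stale_epochs += 1
            if self.stale_epochs > self.patience:
                self.lr /= self.factor  # e.g. 1e-4 -> 1e-5
                self.stale_epochs = 0
        return self.lr


# Hypothetical validation losses; patience=2 is an assumed setting.
schedule = PlateauSchedule(lr=1e-4, factor=10.0, patience=2)
losses = [0.90, 0.70, 0.71, 0.72, 0.73, 0.69]
history = [schedule.step(loss) for loss in losses]
```

In this toy run the rate stays at 1e-4 until the third consecutive epoch without improvement, then drops by a factor of 10.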

Validation:

- Perform k-fold cross-validation (typically k=5) to ensure robustness.

- Evaluate using standard metrics: accuracy, precision, sensitivity, specificity, F1-score, and area under ROC curve.

Protocol 2: Preclinical Drug Evaluation using Murine Brain Volumetry

Application: Quantifying therapeutic effects on brain atrophy in mouse models of neurodegeneration.

Materials:

- Animals: Transgenic mouse models (e.g., TDP-43 for ALS, cuprizone-induced demyelination models, EAE models for MS) [26].

- Imaging Hardware: 7 Tesla or higher MRI scanner with conventional radiofrequency coils.

- Image Acquisition: T2-weighted sequences with a voxel size of 78 × 78 × 250 μm³ and an acquisition time of 4.3 minutes [26].

Methodology:

In Vivo Imaging:

- Anesthetize mice using isoflurane (2-3% induction, 1-2% maintenance in oxygen).

- Position animals in MRI-compatible stereotaxic bed with respiratory monitoring.

- Acquire high-resolution T2-weighted images with parameters optimized for SNR and contrast.

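Deep Learning-Based Segmentation:

- Apply the deep-learning segmentation pipeline to the T2-weighted images to delineate the total brain and sub-regions (hippocampus, caudate putamen, cerebellum) [26].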

Volumetric Analysis:

- Calculate absolute volumes (mm³) of segmented structures for each animal.

- Normalize volumes to total intracranial volume to account for individual size differences.

- Compute atrophy rates for longitudinal studies by comparing volumes across multiple time points.
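The volumetric analysis steps above reduce to simple arithmetic, sketched here. The helper names, the weekly time unit, and the example volumes are assumptions for illustration and are not taken from [26].

```python
def normalized_volume(structure_mm3, intracranial_mm3):
    """Structure volume as a fraction of total intracranial volume."""
    return structure_mm3 / intracranial_mm3


def percent_change_per_week(baseline_mm3, followup_mm3, weeks):
    """Average percent volume change per week, relative to baseline."""
    if weeks <= 0:
        raise ValueError("weeks must be positive")
    return 100.0 * (followup_mm3 - baseline_mm3) / baseline_mm3 / weeks


# Hypothetical mouse: hippocampus shrinks from 25.0 to 24.0 mm^3 over 4 weeks,
# with a total intracranial volume of 500 mm^3.
fraction = normalized_volume(25.0, 500.0)           # 0.05 of intracranial volume
rate = percent_change_per_week(25.0, 24.0, 4.0)     # -1.0 percent per week
```

Negative rates indicate atrophy. Normalizing before computing rates is one common choice; analyzing absolute volumes with intracranial volume as a covariate is another.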

Histological Validation:

- Following final imaging, euthanize animals and perfuse transcardially with 4% paraformaldehyde.

- Process brains for immunohistochemistry (e.g., TDP-43 detection for ALS models) [26].

- Correlate MRI-based volumetry with histological markers of neurodegeneration.

Table 2: Essential Research Reagents and Materials for Deep Learning Brain Volumetry

| Category | Specific Item | Function/Application |

|---|---|---|

| Imaging Hardware | 7 Tesla MRI Scanner with Conventional RF Coil | High-resolution image acquisition for murine brain studies [26] |

| Computational Tools | Python with PyTorch/TensorFlow | Implementation and training of deep learning models (3D CNNs, U-Net, Transformers) [28] [21] |

| Animal Models | TDP-43 Transgenic Mice | Modeling amyotrophic lateral sclerosis (ALS) pathology [26] |

| Animal Models | Cuprizone-induced Demyelination Model | Modeling multiple sclerosis and demyelination disorders [26] |

| Animal Models | C57BL/6J Mice | Wild-type control and disease model background strain [26] |

| Data Resources | OASIS-1 & OASIS-2 Datasets | Human MRI data for Alzheimer's disease classification [28] |

| Data Resources | BraTS Challenge Datasets | Benchmark data for brain tumor segmentation [21] |

| Validation Reagents | Anti-TDP43 Antibody (Abnova) | Immunohistochemical detection of TDP-43 protein in ALS models [26] |

Visualizing Deep Learning Workflows for Brain Volumetry

The application of deep learning to brain volumetry involves sophisticated computational pipelines that integrate image processing, feature extraction, and quantitative analysis. The following diagram illustrates the core workflow from data acquisition to research insights.

Deep Learning Brain Volumetry Pipeline

This workflow demonstrates the transformation of raw MRI data into actionable research insights through a multi-stage computational process. The integration of specialized deep learning architectures like U-Net and 3D DenseNet enables precise segmentation of brain structures, while subsequent volumetric analysis generates the quantitative biomarkers essential for studying aging, diagnosing Alzheimer's disease, and evaluating drug efficacy in clinical trials [28] [26] [21].

Methodologies and Tools for DL-Based Segmentation and Analysis

Deep learning-based brain volumetry represents a significant advancement in neuroimaging research, offering unprecedented opportunities for quantifying structural changes in both healthy and diseased brains. Within this field, contrast-enhanced magnetic resonance imaging (CE-MRI) is a crucial clinical tool, particularly for assessing pathologies that disrupt the blood-brain barrier, such as tumors and inflammatory diseases. However, the presence of contrast agent alters tissue appearance, presenting a substantial challenge for automated segmentation tools traditionally trained on non-contrast images. This application note directly addresses this challenge by providing a comprehensive performance comparison and detailed experimental protocols for two prominent segmentation tools—SynthSeg+ and CAT12—specifically applied to CE-MRI data. The insights herein are designed to guide researchers, scientists, and drug development professionals in selecting and implementing appropriate segmentation methodologies for robust brain volumetry in clinical and research settings using CE-MRI.

Performance Comparison: SynthSeg+ vs. CAT12

Quantitative Reliability Assessment

A direct comparative study assessed the reliability of morphometric measurements from CE-MR scans compared to non-contrast MR (NC-MR) scans in 59 normal participants aged 21-73 years. The results demonstrate a clear performance differential between the two segmentation tools [15].

Table 1: Volumetric Segmentation Reliability (ICC Values) on CE-MRI vs. Non-Contrast MRI

| Brain Structure | SynthSeg+ | CAT12 |

|---|---|---|

| Cortical Gray Matter | >0.90 | Inconsistent |

| Cerebral White Matter | >0.90 | Inconsistent |

| Subcortical Structures | >0.90 | Inconsistent |

| Cerebrospinal Fluid (CSF) | Discrepancies noted | Inconsistent |

| Ventricular Volumes | Discrepancies noted | Inconsistent |

Table 2: Age Prediction Performance Using Segmentation Outputs

| Model Component | SynthSeg+ Performance | CAT12 Performance |

|---|---|---|

| CE-MR Scan Input | Comparable to NC-MR | Not specified |

| NC-MR Scan Input | Benchmark performance | Not specified |

| Predictive Utility | High for both scan types | Inconsistent |

The superior performance of SynthSeg+ stems from its underlying deep learning architecture, which employs a domain randomisation strategy during training. This approach involves randomizing contrast and resolution in synthetic training data generated by a generative model, enabling robust performance across diverse imaging domains without retraining [32]. In contrast, CAT12's more traditional processing pipeline demonstrates sensitivity to the altered contrast profiles in CE-MRI, leading to inconsistent results [15].
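The core idea of domain randomisation can be shown with a toy sketch: starting from a label map, draw a fresh random intensity distribution for every label each time an image is synthesized. This is a simplified illustration only. The `synthesize_image` helper, the intensity ranges, and the flattened label map are assumptions, and the actual SynthSeg generative model also randomizes resolution, bias field, and spatial deformation.

```python
import random


def synthesize_image(label_map, rng):
    """Sample one random (mean, std) per label, then draw each voxel intensity."""
    labels = sorted(set(label_map))
    params = {
        lab: (rng.uniform(0.0, 1.0), rng.uniform(0.01, 0.1)) for lab in labels
    }
    image = [rng.gauss(*params[lab]) for lab in label_map]
    return image, params


# Flattened toy label map (0 = background, 1 and 2 = two tissue classes).
label_map = [0, 0, 1, 1, 2, 2, 1, 0]
rng = random.Random(42)
image_a, params_a = synthesize_image(label_map, rng)
image_b, params_b = synthesize_image(label_map, rng)  # same anatomy, new contrast
```

Because the network never sees the same tissue contrast twice, it has to rely on shape and spatial context rather than absolute intensity, which is the property that matters for post-contrast scans.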

Technical Foundations and Mechanisms

Understanding the architectural differences between these tools clarifies their performance characteristics on CE-MRI:

SynthSeg+ Technical Foundation:

- Utilizes a convolutional neural network (CNN) architecture trained exclusively on synthetic data whose appearance is deliberately randomized beyond realistic ranges

- Implements full contrast and resolution randomization during training

- Requires only segmentation maps for training (no real images needed)

- Achieves domain independence without retraining or fine-tuning

- Demonstrates robustness across MRI contrasts and even CT imaging [32] [33]

CAT12 Technical Foundation:

- Implements a comprehensive voxel-based morphometry (VBM) pipeline

- Relies on affine and non-linear spatial normalization

- Performs tissue classification into gray matter, white matter, and CSF

- Incorporates modulation and smoothing steps

- Shows high accuracy in volumetric analysis of non-contrast MRI [34] [35]

Experimental Protocols for CE-MRI Volumetry

Benchmarking Protocol: Tool Performance Validation

Objective: To quantitatively compare the reliability of SynthSeg+ and CAT12 for brain volumetry on paired CE-MRI and non-contrast MRI scans.

Materials and Specimens:

- 59 normal participants (aged 21-73 years) with paired CE-MRI and NC-MRI scans [15]

- T1-weighted sequences for both CE-MRI and NC-MRI

Imaging Parameters:

- Implementation of standardized T1-weighted sequences across scanners

- Consistent spatial resolution (recommended: 1mm isotropic)

- Controlled for scanner-specific variations through harmonization procedures

Experimental Workflow:

- Image Acquisition: Obtain paired CE-MRI and NC-MRI scans within the same session

- Data Preprocessing: Apply identical preprocessing steps (bias field correction, noise reduction)

- Segmentation Execution: Process all scans through both SynthSeg+ and CAT12 pipelines

- Quality Control: Implement tool-specific quality metrics (e.g., SynthSeg+ QC scores) [33]

- Volumetric Analysis: Extract brain structure volumes from segmentations

- Statistical Comparison: Calculate intraclass correlation coefficients (ICCs) between CE-MRI and NC-MRI derived volumes

- Age Prediction Modeling: Build and validate models using volumes from both scan types

Figure 1: Experimental workflow for benchmarking segmentation tool performance on CE-MRI.
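The statistical comparison step can be sketched as follows. The source does not state which ICC form was used in [15]; this example assumes the two-way random-effects, absolute-agreement, single-measurement form, ICC(2,1), and the `icc_2_1` helper and input values are illustrative.

```python
def icc_2_1(ratings):
    """ICC(2,1) for a table of n subjects (rows) by k measurements (columns)."""
    n, k = len(ratings), len(ratings[0])
    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]
    # Two-way ANOVA sums of squares.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_err = (ss_total - ss_rows - ss_cols) / ((n - 1) * (k - 1))
    return (ms_rows - ms_err) / (
        ms_rows + (k - 1) * ms_err + k * (ms_cols - ms_err) / n
    )


# Columns: volume from NC-MRI, volume from CE-MRI (arbitrary units).
perfect = icc_2_1([[1.0, 1.0], [2.0, 2.0], [3.0, 3.0]])
offset = icc_2_1([[1.0, 2.0], [2.0, 3.0], [3.0, 4.0]])
```

The second call shows why the agreement form matters here: a constant offset between CE-MRI and NC-MRI volumes keeps the ranking of subjects intact but still lowers the ICC.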

Clinical Research Implementation Protocol

Objective: To implement robust brain volumetry in clinical research studies utilizing existing CE-MRI data.

Data Requirements:

- Clinical CE-MRI scans (typically T1-weighted post-contrast)

- Minimum quality standards: motion artifact minimization, whole-brain coverage

- Appropriate DICOM to NIfTI conversion if required

SynthSeg+ Specific Protocol:

- Installation: Implement through FreeSurfer distribution (version 7.4.1 or higher)

- Configuration: Enable robust mode for clinical data with potential pathologies

- Execution: Run with integrated quality control scoring

- Quality Assessment: Apply region-specific QC thresholds (range: 0.55-0.75)

- Data Extraction: Export volumetric data for 95 brain regions [33]
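A command line for the steps above might be assembled as in this sketch. It assumes the `mri_synthseg` command from a FreeSurfer installation with its `--i`, `--o`, `--robust`, `--parc`, `--vol`, and `--qc` options; the file names and the `synthseg_command` helper are hypothetical, and options should be checked against the installed version.

```python
def synthseg_command(input_path, output_path, volumes_csv, qc_csv,
                     robust=True, parcellate=True):
    """Build an argument list for mri_synthseg (does not execute it)."""
    cmd = [
        "mri_synthseg",
        "--i", input_path,      # input scan or folder
        "--o", output_path,     # output segmentation
        "--vol", volumes_csv,   # CSV of regional volumes
        "--qc", qc_csv,         # CSV of automated QC scores
    ]
    if robust:
        cmd.append("--robust")  # robust variant intended for clinical scans
    if parcellate:
        cmd.append("--parc")    # add cortical parcellation
    return cmd


# Hypothetical file names for one post-contrast T1-weighted scan.
cmd = synthseg_command("sub01_T1w_ce.nii.gz", "sub01_seg.nii.gz",
                       "sub01_volumes.csv", "sub01_qc.csv")
# To run it: import subprocess; subprocess.run(cmd, check=True)
```

Building the argument list separately from execution makes it easy to log the exact command used for each scan, which helps with reproducibility across sites.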

CAT12 Specific Protocol:

- Installation: Implement as SPM12 toolbox within MATLAB environment

- Configuration: Use default processing parameters for VBM analysis

- Segmentation: Process through standard tissue classification pipeline

- Modulation: Apply non-linear deformation for absolute tissue volumes

- Smoothing: Implement Gaussian kernel (recommended: 6mm FWHM for amygdala, larger for cerebellum) [35]

Validation Steps:

- Compare volumetric outcomes with clinical reads or expert segmentations

- Assess effect sizes for group differences in target populations

- Determine statistical power for planned analyses

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Software Solutions for CE-MRI Brain Volumetry

| Tool/Resource | Function | Application Context |

|---|---|---|

| SynthSeg+ | Domain-independent brain segmentation | Primary segmentation for CE-MRI; cross-modal studies |

| CAT12 (VBM Pipeline) | Voxel-based morphometry analysis | Non-contrast MRI studies; comparative benchmarks |

| FreeSurfer Suite | Comprehensive segmentation & analysis | Multi-modal integration; surface-based analysis |

| SPM12 | Statistical parametric mapping | Preprocessing & statistical analysis |

| BraTS Datasets | Benchmarking & validation | Algorithm development; performance testing [36] [21] |

| ADNI Database | Standardized reference data | Method validation; normative modeling |

Implementation Guidelines and Recommendations

Tool Selection Criteria

Based on the empirical evidence, the following decision framework is recommended for selecting segmentation tools in CE-MRI research contexts:

Select SynthSeg+ when:

- Analyzing clinically acquired CE-MRI where non-contrast scans are unavailable

- Conducting multi-site studies with scanner or protocol heterogeneity

- Pursuing cross-modal analysis (including CT-MRI integration)

- Studying populations with significant morphological variability (aging, disease)

- Prioritizing computational efficiency and pipeline standardization [15] [32]

Consider CAT12 when:

- Analyzing research-grade non-contrast MRI data

- Conducting voxel-based morphometry studies requiring SPM integration

- Studying populations with minimal pathological changes

- Utilizing established VBM processing pipelines with historical comparability [34] [35]

Quality Assurance Framework

Robust quality control is essential for reliable volumetry outcomes:

SynthSeg+ QC Implementation:

- Leverage integrated quality control scores for each segmentation

- Apply region-specific thresholds (0.55-0.75) rather than global cutoff

- Exclude regions failing QC while retaining usable structures

- Visually inspect segmentations, particularly near pathological regions [33]
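Region-wise exclusion can be sketched as below. The region names, QC scores, and individual thresholds are invented for the example (only the 0.55-0.75 range comes from the text), and `filter_by_qc` is a hypothetical helper.

```python
def filter_by_qc(volumes, qc_scores, thresholds, default_threshold=0.65):
    """Keep regions whose QC score meets their threshold; list the rest."""
    kept, rejected = {}, []
    for region, volume in volumes.items():
        score = qc_scores.get(region)
        limit = thresholds.get(region, default_threshold)
        if score is not None and score >= limit:
            kept[region] = volume
        else:
            rejected.append(region)  # missing or low score: exclude this region only
    return kept, rejected


# Hypothetical values for one scan.
volumes = {"hippocampus": 3500.0, "thalamus": 7200.0, "putamen": 4800.0}
qc_scores = {"hippocampus": 0.71, "thalamus": 0.58, "putamen": 0.80}
thresholds = {"hippocampus": 0.60, "thalamus": 0.65, "putamen": 0.75}
kept, rejected = filter_by_qc(volumes, qc_scores, thresholds)
```

Here the scan keeps its hippocampus and putamen volumes even though the thalamus fails, which is the point of region-specific rather than global cutoffs.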

CAT12 QC Implementation:

- Utilize sample homogeneity tools to identify outliers

- Check for registration failures using report functionality

- Verify segmentation accuracy through tissue probability maps

- Implement statistical checks for biological plausibility [35]

The evidence summarized in this application note favors SynthSeg+ for brain volumetry on contrast-enhanced MRI: it showed high reliability (ICCs >0.90) for most structures, with discrepancies limited to CSF and ventricular volumes, whereas CAT12 performed inconsistently on such data [15]. The domain randomization approach underlying SynthSeg+ provides robustness to contrast variability, making it well suited to leveraging clinically acquired CE-MRI datasets in neuroscience research and drug development. The experimental protocols provided here support robust and reproducible brain volumetric analyses across clinical and research contexts. As deep learning approaches continue to evolve, their capacity to handle varied contrasts and resolutions should help researchers extract more scientific value from existing clinical imaging data.

Deep learning-based brain volumetry in contrast-enhanced MRI research is increasingly critical for understanding neurodegenerative diseases. Accurate measurement of cerebral blood volume (CBV) provides invaluable functional insights into brain metabolism and vascular health, serving as a key biomarker for conditions such as Alzheimer's disease and multiple sclerosis [26] [37]. Traditional CBV mapping requires gadolinium-based contrast agents (GBCAs), which pose clinical risks including tissue retention and nephrogenic systemic fibrosis [7]. These challenges have motivated research into non-contrast alternatives, culminating in the development of advanced AI architectures that synthesize CBV information from standard structural scans.

The evolution from Convolutional Neural Networks (CNNs) to hybrid models represents a significant architectural shift in medical image analysis. While CNNs excel at extracting local features through their inductive biases for spatial hierarchies, they struggle with capturing long-range dependencies due to their localized receptive fields [38]. Conversely, State Space Models (SSMs), particularly Mamba architectures, introduce selective scanning mechanisms that dynamically focus on relevant contextual information across entire volumetric datasets with linear computational complexity [38]. This paper explores the integration of these complementary approaches through 3D Mamba-CNN hybrid models for accurate, non-invasive CBV mapping, presenting application notes and experimental protocols to facilitate their adoption in neuroimaging research and drug development.

Quantitative Performance Analysis of CBV Mapping Architectures

Table 1: Performance comparison of CBV mapping and related brain analysis architectures

| Architecture | Task | Dataset | Key Metric | Performance |

|---|---|---|---|---|

| 3D Mamba-CNN Hybrid [7] | CBV Mapping from T1w MRI | Multi-site (Aging/AD patients) | Estimation Accuracy | Surpasses previous CBV estimation methods |

| VGG-based Multimodal (T1w + AICBV) [39] | Brain Age Estimation | 13 public datasets (n=2,851) | Mean Absolute Error | 3.95 years |

| VGG-based (T1w MRI only) [39] | Brain Age Estimation | Multiple public datasets | Mean Absolute Error | 4.06 years |

| PDSCNN-RRELM [40] | Brain Tumor Classification | Multi-class MRI | Accuracy | 99.22% |

| Swin Transformer [41] | Brain Tumor Classification | Various MRI datasets | Accuracy | Up to 99.9% |

| CNN-LSTM Hybrid [41] | Brain Tumor Classification | Various MRI datasets | Accuracy | >95% |

| Deep Learning Segmentation [41] | Brain Tumor Segmentation | Various MRI datasets | Dice Coefficient | 0.83-0.94 |

Table 2: Comparison of architectural advantages for medical image analysis

| Architecture | Long-Range Dependency Capture | Computational Complexity | Local Feature Extraction | Interpretability | Data Efficiency |

|---|---|---|---|---|---|

| 3D CNN | Limited | Moderate | Excellent | Moderate | Good |

| Transformer | Excellent | High (Quadratic) | Moderate | Challenging | Moderate |

| Mamba | Excellent | Low (Linear) | Moderate | Moderate [39] | Good |

| Mamba-CNN Hybrid | Excellent | Moderate | Excellent | Moderate [39] [42] | Good |

Experimental Protocols for Mamba-CNN Hybrid Model Development

Data Acquisition and Preprocessing Protocol

Imaging Data Requirements:

- Acquire T1-weighted MRI scans using standardized protocols (e.g., MP-RAGE sequences)

- For supervised approaches, include matched contrast-enhanced CBV maps as ground truth

- Ensure a voxel size of 1.6 × 1.6 × 5.0 mm³ or finer for ULF-MRI [37], or 1.0 × 1.0 × 1.0 mm³ or finer for 3T systems [37]

- Collect multi-orientation acquisitions when possible to enhance reconstruction quality

Data Preprocessing Pipeline:

- Spatial Normalization: Register all images to standardized space (e.g., MNI-152 template) [39]

- Intensity Normalization: Apply modified min-max scaling by dividing each scan by the average of its top 1% intensity values [39]

- Patch Extraction: Divide 3D volumes into overlapping patches (e.g., 64×64×64 voxels) for manageable GPU memory usage

- Data Augmentation: Implement spatial transformations (rotation, flipping) and intensity variations to improve model generalization

- Stratified Splitting: Partition dataset into training, validation, and test sets (e.g., 8:1:1 ratio) based on age bins and project origin to ensure representative distribution [39]
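Two of the preprocessing steps above can be sketched without any imaging library. The intensity rule follows the description attributed to [39] (divide by the mean of the top 1% of intensities); the 50% patch overlap, the helper names, and the toy inputs are assumptions for illustration.

```python
import math


def normalize_top_percent(voxels, top_fraction=0.01):
    """Divide all intensities by the mean of the brightest `top_fraction`."""
    n_top = max(1, math.ceil(top_fraction * len(voxels)))
    top = sorted(voxels, reverse=True)[:n_top]
    scale = sum(top) / n_top
    if scale == 0:
        raise ValueError("cannot normalize an all-zero scan")
    return [v / scale for v in voxels]


def patch_starts(length, patch=64, stride=32):
    """Start indices of overlapping patches that cover one axis completely."""
    if length <= patch:
        return [0]
    starts = list(range(0, length - patch + 1, stride))
    if starts[-1] != length - patch:
        starts.append(length - patch)  # final patch is shifted to reach the edge
    return starts


normalized = normalize_top_percent([float(v) for v in range(1, 101)])
starts_160 = patch_starts(160)   # [0, 32, 64, 96]
starts_150 = patch_starts(150)   # [0, 32, 64, 86]
```

Applying `patch_starts` independently along each of the three axes gives the patch origins for a 3D volume. Scaling by the mean of the top 1% rather than the single maximum makes the normalization less sensitive to isolated bright voxels.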

Mamba-CNN Hybrid Architecture Implementation

Dual-Encoder Design:

- Implement a 3D CNN encoder with 5 sequential blocks for local feature extraction

- Each block should contain: 3D convolution, 3D batch normalization, ReLU activation, and 3D max pooling [39]

- Use increasing out channels (16, 32, 64, 128, 256) through the encoder hierarchy [39]

- Implement a parallel 3D Mamba encoder with VSS3D (Visual State Space 3D) blocks for global context capture [39] [7]

- Apply selective scanning along multiple trajectories (horizontal, vertical, depth) to capture spatial relationships [39]
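To make the CNN encoder hierarchy concrete, the sketch below tracks feature-map shapes through the five blocks. It assumes convolutions that preserve spatial size and 2×2×2 max pooling with stride 2, neither of which is specified in the text; `encoder_shapes` is a hypothetical helper.

```python
def encoder_shapes(patch_size=64, channels=(16, 32, 64, 128, 256)):
    """Return (channels, depth, height, width) after each encoder block."""
    shapes, size = [], patch_size
    for out_channels in channels:
        size //= 2  # assumed 2x2x2 max pooling halves each spatial dimension
        shapes.append((out_channels, size, size, size))
    return shapes


shapes = encoder_shapes()
# A 64^3 input patch ends as 256 channels of 2x2x2 after the fifth block.
```

Under these assumptions a 64-voxel patch is the smallest cubic power-of-two input that survives five pooling steps with more than one voxel per axis, which is one reason to check patch size against encoder depth.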

Feature Fusion Mechanism:

- Incorporate bi-level synergistic integration blocks with modality attention and channel attention learning [42]

- Implement skip connections between encoder and decoder pathways to preserve spatial details

- Use adaptive multilevel feature fusion to dynamically balance contributions from both pathways [42]

Decoder Design:

- Employ symmetrical decoder with transposed convolutions for volumetric reconstruction

- Gradually reduce feature maps while increasing spatial dimensions

- Generate final CBV map with same dimensions as input T1w scan

Model Training Protocol

Optimization Configuration:

- Use Adam optimizer with learning rate of 1×10⁻⁴ [39]

- Employ Mean Absolute Error (MAE) loss for regression task

- Implement early stopping with patience of 10 epochs based on validation MAE [39]

- Train for maximum 100 epochs with batch size appropriate for available GPU memory (e.g., 3-8 samples) [39]
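The stopping rule in the optimization configuration can be sketched independently of any framework. The patience of 10 and the cap of 100 epochs come from the text; the `run_early_stopping` helper and the validation curve are invented for the example.

```python
def run_early_stopping(val_mae_per_epoch, patience=10, max_epochs=100):
    """Return (best_epoch, best_mae, last_epoch_run) for a validation curve."""
    best_mae, best_epoch = float("inf"), -1
    for epoch, mae in enumerate(val_mae_per_epoch[:max_epochs]):
        if mae < best_mae:
            best_mae, best_epoch = mae, epoch
        elif epoch - best_epoch >= patience:
            return best_epoch, best_mae, epoch  # no improvement for `patience` epochs
    return best_epoch, best_mae, min(len(val_mae_per_epoch), max_epochs) - 1


# Hypothetical curve: improves for three epochs, then plateaus.
curve = [1.0, 0.8, 0.6] + [0.7] * 20
best_epoch, best_mae, stopped_at = run_early_stopping(curve)
```

In practice the model weights from `best_epoch` are the ones restored for testing, so a checkpoint should be saved whenever the validation MAE improves.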

Hardware Requirements:

- Utilize NVIDIA RTX A6000 GPU or equivalent with ≥48GB VRAM

- Ensure adequate system RAM (≥64GB) for volumetric data loading

- Implement mixed-precision training where possible to reduce memory footprint

Validation Framework:

- Perform k-fold cross-validation (k=5-10) to assess robustness [40]

- Calculate agreement statistics between predicted and ground truth CBV values, such as the Pearson correlation coefficient (r) and the coefficient of determination (R²)

- Generate qualitative results through Gradient-weighted Class Activation Mapping (Grad-CAM) for model interpretability [39]
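The distinction between correlation and agreement in the validation step can be illustrated with a short sketch. Both helpers use the standard definitions; the CBV values are invented.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def r_squared(truth, predicted):
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    mean_t = sum(truth) / len(truth)
    ss_res = sum((t - p) ** 2 for t, p in zip(truth, predicted))
    ss_tot = sum((t - mean_t) ** 2 for t in truth)
    return 1.0 - ss_res / ss_tot


truth = [1.0, 2.0, 3.0, 4.0]
close = [1.1, 1.9, 3.2, 3.8]     # small errors
biased = [3.0, 5.0, 7.0, 9.0]    # perfectly correlated but badly scaled
r2_close = r_squared(truth, close)       # 0.98
r_biased = pearson_r(truth, biased)      # 1.0
r2_biased = r_squared(truth, biased)     # negative
```

The biased prediction has a perfect correlation yet a negative R², so reporting both statistics helps distinguish a model that tracks CBV trends from one that reproduces CBV values.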

Workflow Visualization

CBV Mapping Workflow - The Mamba-CNN hybrid model processes T1-weighted MRI through parallel encoders followed by feature fusion.

Hybrid Architecture Details - The model combines local feature extraction (CNN) with global context modeling (Mamba) through attention-based fusion.

Table 3: Essential research reagents and computational resources for CBV mapping

| Resource Category | Specific Resource | Application in CBV Research | Key Characteristics |

|---|---|---|---|

| Public Datasets | ADNI (Alzheimer's Disease Neuroimaging Initiative) [39] | Model training/validation for neurodegenerative applications | Multi-site, longitudinal, elderly focus |

| | BraTS (Brain Tumor Segmentation) [21] [42] | Method validation for tumor-related CBV alterations | Multi-institutional, tumor annotations |

| | OASIS (Open Access Series of Imaging Studies) [39] | Normal aging reference and model generalizability testing | Lifespan coverage, cognitive data |

| Software Libraries | PyTorch [39] | Primary deep learning framework for model implementation | GPU acceleration, autograd system |

| | MONAI (Medical Open Network for AI) | Domain-specific medical imaging tools | Preprocessing, 3D network architectures |

| | SynthSR [37] | Resolution enhancement for ULF-MRI compatibility | CNN-based super-resolution |

| Hardware Requirements | NVIDIA RTX A6000 GPU [39] | Model training and inference | 48GB+ VRAM for 3D volumes |

| | High-performance Computing Cluster | Large-scale hyperparameter optimization | Multi-node parallel processing |

| Evaluation Tools | Gradient-based Class Activation Maps (Grad-CAM) [39] | Model interpretability and biological validation | Visualizes predictive regions |

| | Dice Similarity Coefficient [21] | Segmentation quality assessment | Measures spatial overlap |

| | FreeSurfer [37] | Automated brain volumetry and anatomical labeling | Standardized neuroimaging pipeline |

The integration of 3D Mamba and CNN architectures is a promising advance in cerebral blood volume mapping from structural MRI. These hybrid models address key limitations of previous approaches by combining the strong local feature extraction of CNNs with the global contextual modeling and computational efficiency of Mamba models. The application notes and protocols outlined here give researchers practical guidance for implementing these architectures in brain volumetry research, particularly for contrast-enhanced MRI studies where gadolinium administration presents clinical limitations. As these methods mature, they may improve the safety, accessibility, and precision of functional neuroimaging in both clinical trials and routine care of patients with neurodegenerative diseases. Future work should focus on validating these approaches across diverse patient populations and extending them to functional imaging biomarkers beyond CBV.

Deep learning-based brain volumetry in contrast-enhanced MRI research requires large, annotated datasets to train robust and generalizable models. This requirement presents a significant challenge for rare pathologies, where patient data is inherently scarce. The limited availability of such data can lead to model overfitting, reduced statistical power, and ultimately, hindered progress in diagnosis and drug development [43] [44]. Synthetic data generation, particularly using diffusion models, has emerged as a powerful strategy to overcome these limitations. These models can generate high-fidelity, anatomically plausible neuroimages, enabling researchers to augment existing datasets and create entirely synthetic cohorts for rare diseases [43] [45]. This document provides application notes and detailed experimental protocols for employing diffusion models to generate synthetic brain MRI data for rare pathologies, framed within a deep learning brain volumetry research pipeline.

Diffusion Models for Medical Imaging: A Primer

Diffusion Models, specifically Denoising Diffusion Probabilistic Models (DDPMs), are a class of generative models that learn to create data by progressively denoising a random variable. The process involves a forward noising process, where Gaussian noise is incrementally added to a real image until it becomes pure noise, and a reverse denoising process, where a neural network is trained to reverse this noising, thereby learning to generate data from noise [43] [46]. Compared to other generative models like Generative Adversarial Networks (GANs), DDPMs offer superior training stability, a lower risk of mode collapse, and have demonstrated a remarkable ability to generate high-quality, diverse medical images [43] [45] [46]. Latent Diffusion Models (LDMs) represent a significant advancement by performing the diffusion process in a compressed latent space of an autoencoder, drastically reducing computational costs without sacrificing image quality [46].
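
For reference, the forward noising process described above has a closed form in the standard DDPM formulation. The symbols below (β_t for the per-step noise variance, ᾱ_t for the cumulative signal retention) follow the usual convention and are an assumption here, since the cited works may use different notation.

```latex
\begin{aligned}
q(x_t \mid x_{t-1}) &= \mathcal{N}\!\left(x_t;\ \sqrt{1-\beta_t}\,x_{t-1},\ \beta_t I\right), \\
\bar{\alpha}_t &= \prod_{s=1}^{t} (1-\beta_s), \\
q(x_t \mid x_0) &= \mathcal{N}\!\left(x_t;\ \sqrt{\bar{\alpha}_t}\,x_0,\ (1-\bar{\alpha}_t)\, I\right)
\;\Longleftrightarrow\;
x_t = \sqrt{\bar{\alpha}_t}\,x_0 + \sqrt{1-\bar{\alpha}_t}\,\varepsilon,
\quad \varepsilon \sim \mathcal{N}(0, I).
\end{aligned}
```

Because x_t can be sampled directly from x_0 at any timestep, training does not need to simulate the noising chain step by step.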

Application Notes for Rare Pathologies

The application of diffusion models to rare pathologies involves several key considerations to ensure the generated data is both realistic and useful for downstream tasks like brain volumetry.

- Conditional Generation: To be effective, the generation process must be conditioned on specific, relevant parameters. This allows for the targeted creation of synthetic data representing a specific rare pathology, MRI contrast (e.g., T1-weighted, FLAIR, T1ce), and even demographic information [47] [46]. Conditioning can be achieved using techniques such as class labels, text prompts describing imaging metadata, or input images from other modalities [47].

- Preservation of Anatomical Fidelity: For brain volumetry, it is critical that synthetic images maintain globally and locally consistent brain anatomy. Models must be trained on datasets of healthy subjects or a mix of healthy and pathological scans to learn the underlying anatomical structure, ensuring that generated pathological features are embedded in a plausible anatomical context [43].

- Addressing Data Imbalance: In a dataset containing multiple pathologies, rare conditions are inherently underrepresented. Diffusion models can be conditioned on these rare classes to generate a sufficient number of samples, thereby balancing the dataset and improving the performance of downstream segmentation or classification models [46].

- Privacy Preservation: Since synthetic data generated by diffusion models is not a direct copy of any single patient's data, it offers a pathway for creating privacy-compliant datasets. This facilitates safer data sharing between institutions, which is particularly valuable for multi-center studies on rare diseases [43] [48].
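
To make the data-imbalance point concrete, the sketch below computes how many synthetic scans would have to be generated per class to reach a target count. The function name and the class labels in the usage note are hypothetical. Topping every class up to the size of the largest one is only one possible policy, chosen here for simplicity.

```python
from typing import Dict, Optional


def synthetic_samples_needed(
    class_counts: Dict[str, int], target: Optional[int] = None
) -> Dict[str, int]:
    """Number of synthetic samples to generate per class to reach `target`.

    If `target` is omitted, every class is topped up to the size of the
    largest class. Classes already at or above the target need none.
    """
    if target is None:
        target = max(class_counts.values())
    return {label: max(0, target - count) for label, count in class_counts.items()}
```

For example, with hypothetical counts `{"healthy": 500, "glioma": 120, "rare_dementia": 15}` the rarest class would need 485 synthetic scans. At that ratio the class would consist almost entirely of generated data, so a lower target may be preferable.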

Experimental Protocols

Below are detailed protocols for two common scenarios in synthetic data generation for rare pathologies.

Protocol 1: Training a Conditional DDPM for Pathological MRI Synthesis

This protocol outlines the process for training a diffusion model to generate 3D brain MRIs conditioned on pathology and modality.

Objective: To train a Denoising Diffusion Probabilistic Model (DDPM) capable of generating synthetic 3D T1-weighted brain MRIs with specific rare pathologies (e.g., Glioblastoma, rare dementias) for data augmentation.

Materials & Methods:

- Dataset:

  - A multi-center dataset of 3D T1-weighted brain MRIs.

  - Inclusion: Scans from healthy subjects and patients with the target rare pathology.

  - Preprocessing: Standard preprocessing steps including N4 bias field correction, skull-stripping, affine spatial normalization to a standard template (e.g., MNI space), and intensity normalization [43].

- Model Architecture:

  - A 3D U-Net with residual blocks is recommended as the backbone for the denoising network [43].

  - Conditioning Mechanism: Integrate conditioning information (pathology label, modality) using adaptive group normalization (AdaGN) layers within the U-Net, where the conditioning vector modulates the activation statistics at each group normalization layer [46].

- Training Configuration:

  - Optimizer: Adam.

  - Learning Rate: 1e-4.

  - Training Steps: 400,000 (or 400 epochs, depending on dataset size).

  - Noise Schedule: Linear noise schedule, managed by a scheduler (e.g., DDPMScheduler from MONAI) [43].

  - Objective Function: Simplified mean-squared error loss between the predicted noise and the true noise added at each timestep [43].
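
The linear noise schedule named in the training configuration can be illustrated without a deep learning library. This is a minimal sketch, not the MONAI scheduler itself. The defaults (1,000 steps, β from 1e-4 to 0.02) are the values commonly used for DDPMs and are an assumption, since the source does not state them. The function names are illustrative.

```python
import math
from typing import List, Tuple


def linear_beta_schedule(
    num_steps: int = 1000, beta_start: float = 1e-4, beta_end: float = 0.02
) -> List[float]:
    """Per-step noise variances beta_1..beta_T, evenly spaced (assumed defaults)."""
    if num_steps < 2:
        raise ValueError("num_steps must be at least 2")
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas: List[float]) -> List[float]:
    """alpha_bar_t = product over s <= t of (1 - beta_s)."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def signal_and_noise_scale(alpha_bar: float) -> Tuple[float, float]:
    """Coefficients multiplying x_0 and the noise in the closed-form forward process."""
    return math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
```

With these defaults the cumulative product decays from just under 1 at the first step to nearly 0 at the last. The final noisy image is therefore almost pure Gaussian noise, which is what allows sampling to start from noise at generation time.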

Procedure:

- Data Preparation: Curate and preprocess the dataset. Annotate each scan with its condition (pathology label and modality).

- Model Initialization: Initialize the 3D U-Net weights.

- Training Loop: For each training iteration:

a. Sample a batch of real images x₀ and their corresponding condition labels c.

b. Sample a random timestep t uniformly from [1, T].

c. Sample random noise ε from a standard Gaussian distribution.

d. Create the noisy image xₜ using the forward process: xₜ = √ᾱₜ · x₀ + √(1 − ᾱₜ) · ε.

e. Pass the noisy image xₜ, timestep t, and condition c to the U-Net to predict the noise ε_θ(xₜ, t, c).

f. Compute the loss L = ||ε − ε_θ(xₜ, t, c)||².

g. Update the model parameters via backpropagation.

- Validation: Periodically generate samples from the model during training using the reverse process, conditioned on held-out labels, for qualitative assessment.
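
Steps b to f of the training loop can be sketched on a toy example. Here an "image" is a flat list of floats, and the denoising network is replaced by any callable `predictor(x_t, t, c)`, which is a hypothetical stand-in for the conditional 3D U-Net. The schedule values are the commonly used DDPM defaults, not values from the source. The squared error is averaged over voxels, in line with the mean-squared-error objective named in the training configuration. Step g (the parameter update) is omitted because it needs an autograd framework.

```python
import math
import random
from typing import Callable, List

Vector = List[float]
# Hypothetical stand-in for the conditional U-Net: (x_t, t, c) -> predicted noise.
NoisePredictor = Callable[[Vector, int, int], Vector]


def make_alpha_bars(
    num_steps: int = 1000, beta_start: float = 1e-4, beta_end: float = 0.02
) -> List[float]:
    """Cumulative products of (1 - beta_t) for a linear schedule (assumed values)."""
    alpha_bars, running = [], 1.0
    for i in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * i / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def q_sample(x0: Vector, alpha_bar: float, eps: Vector) -> Vector:
    """Step d: x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * eps."""
    signal, noise = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [signal * x + noise * e for x, e in zip(x0, eps)]


def training_loss(
    x0: Vector,
    condition: int,
    predictor: NoisePredictor,
    alpha_bars: List[float],
    rng: random.Random,
) -> float:
    """Steps b-f for a single sample; returns the noise-prediction loss."""
    t = rng.randint(1, len(alpha_bars))          # step b: t uniform on [1, T]
    eps = [rng.gauss(0.0, 1.0) for _ in x0]      # step c: standard Gaussian noise
    x_t = q_sample(x0, alpha_bars[t - 1], eps)   # step d: closed-form noising
    eps_hat = predictor(x_t, t, condition)       # step e: predict the noise
    # step f: squared error between true and predicted noise, averaged over voxels
    return sum((e - p) ** 2 for e, p in zip(eps, eps_hat)) / len(x0)
```

A predictor that recovers the injected noise exactly drives this loss to zero, while one that always returns zeros yields a loss equal to the mean squared noise sample, which is roughly 1 for large volumes.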

Protocol 2: Validating Synthetic Data for Downstream Volumetry Tasks

This protocol describes how to validate the utility of generated synthetic data by using it to augment training sets for a brain volumetry model.

Objective: To evaluate whether synthetic MRIs of a rare pathology generated by a trained DDPM can improve the performance of a U-Net-based brain lesion segmentation model.

Materials & Methods:

- Datasets:

  - Real Data (Small): A limited dataset of real T1-weighted MRIs with rare pathology and corresponding expert-annotated lesion segmentation masks.

  - Synthetic Data: A larger dataset of synthetic T1-weighted MRIs generated by the DDPM from Protocol 1, conditioned on the same rare pathology. Note: These synthetic images lack native segmentation masks.

- Segmentation Model: A standard 3D U-Net architecture [45] [21].

- Pseudo-Mask Generation: Use a pre-existing, off-the-shelf lesion segmentation tool (trained on a different, larger dataset of common pathologies) to infer approximate "pseudo-masks" for the synthetic images [45].

Procedure:

- Establish Baseline: Train the 3D U-Net segmentation model solely on the small real dataset. Evaluate its performance on a held-out real test set using the Dice Similarity Coefficient (DSC).

- Augmented Training: Create an augmented training set by combining the real data with the synthetic data and their corresponding pseudo-masks.

- Model Training: Retrain the 3D U-Net from scratch on the augmented dataset.

- Performance Comparison: Evaluate the retrained model on the same held-out real test set.

- Analysis: Compare the DSC and other relevant metrics (e.g., Hausdorff Distance) from the baseline model and the augmented model. A significant improvement in the augmented model's performance indicates the synthetic data's utility [45].
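
The comparison in the final step relies on the Dice Similarity Coefficient, which can be computed as sketched below for binary masks flattened to 0/1 sequences. The function names are illustrative. Returning 1.0 when both masks are empty is a common convention rather than something specified in the source.

```python
from typing import Sequence


def dice_coefficient(pred_mask: Sequence[int], true_mask: Sequence[int]) -> float:
    """DSC = 2 * |P intersect T| / (|P| + |T|) for flattened binary masks."""
    if len(pred_mask) != len(true_mask):
        raise ValueError("masks must have the same number of voxels")
    intersection = sum(1 for p, t in zip(pred_mask, true_mask) if p and t)
    total = sum(1 for p in pred_mask if p) + sum(1 for t in true_mask if t)
    if total == 0:
        return 1.0  # assumed convention: two empty masks agree perfectly
    return 2.0 * intersection / total


def mean_dice(
    pred_masks: Sequence[Sequence[int]], true_masks: Sequence[Sequence[int]]
) -> float:
    """Average DSC over a test set of (prediction, ground truth) mask pairs."""
    scores = [dice_coefficient(p, t) for p, t in zip(pred_masks, true_masks)]
    return sum(scores) / len(scores)
```

Computing `mean_dice` once for the baseline model and once for the augmented model on the same held-out test set gives the two numbers to compare. The per-case scores can also be passed to a paired statistical test to judge whether the difference is significant.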

The following workflow diagram illustrates the validation protocol.

Quantitative Performance Data

The table below summarizes key quantitative findings from recent studies on using synthetic data for medical imaging tasks, which underpin the protocols described above.

Table 1: Quantitative Performance of Models Using Diffusion-Based Synthetic Data

| Study Focus | Model Architecture | Key Metric | Reported Result | Implication for Rare Pathologies |

|---|---|---|---|---|

| Brain Lesion Segmentation [45] | DDPM (ControlNet & Custom) for augmentation of U-Net | Dice Score (DSC) | <1.5% performance loss vs. real data; outperformed GANs | Synthetic data is a high-quality substitute when real data is limited. |

| 3D Brain MRI Generation [43] | DDPM with 3D U-Net | Maximum Mean Discrepancy (MMD) | Confirmed similarity between real and generated data distributions | Generated scans are anatomically coherent and realistic. |

| Universal MRI Synthesis [47] | Text-guided Diffusion Model (TUMSyn) | Radiologist Assessment & FID | High-fidelity images meeting diverse clinical needs | Enables generation of unacquirable MRI sequences for rare diseases. |

| Conditional MRI Generation [46] | Latent Diffusion Model (LDM) | Fréchet Inception Distance (FID) | Distribution of generated images similar to real ones | Effective for balancing underrepresented classes in datasets. |
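
Of the metrics in this table, Maximum Mean Discrepancy is simple enough to sketch directly. The version below is the biased estimate of squared MMD with a Gaussian (RBF) kernel, applied to small feature vectors. The kernel choice, the bandwidth, and the function names are assumptions, and the features actually used in the cited study are not specified here.

```python
import math
from typing import Sequence

Vector = Sequence[float]


def rbf_kernel(x: Vector, y: Vector, bandwidth: float = 1.0) -> float:
    """Gaussian kernel exp(-||x - y||^2 / (2 * bandwidth^2))."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-sq_dist / (2.0 * bandwidth ** 2))


def mmd_squared(
    real: Sequence[Vector], synthetic: Sequence[Vector], bandwidth: float = 1.0
) -> float:
    """Biased estimate of squared MMD between two sets of feature vectors."""

    def mean_kernel(a: Sequence[Vector], b: Sequence[Vector]) -> float:
        total = sum(rbf_kernel(x, y, bandwidth) for x in a for y in b)
        return total / (len(a) * len(b))

    return (
        mean_kernel(real, real)
        + mean_kernel(synthetic, synthetic)
        - 2.0 * mean_kernel(real, synthetic)
    )
```

Values near zero indicate that the two samples are hard to tell apart under the chosen kernel, while larger values indicate a distribution shift between real and generated data.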

The Scientist's Toolkit

This section lists essential software tools and resources for implementing the described protocols.